TikTok's Chinese counterpart Douyin cracks down on AI celebrity scams

In response to growing concerns about artificial intelligence being used to create fake celebrity endorsements, TikTok's Chinese counterpart Douyin has launched a major crackdown. The move follows several high-profile cases in which AI-generated likenesses of public figures appeared to promote products they had never endorsed.

The Impersonation Problem Goes Digital

Douyin Vice President Li Liang addressed the issue head-on during a recent press briefing. "While some reported cases didn't actually occur on our platform," Liang clarified, "we've definitely seen content where AI technology mimics celebrities for sales purposes."

The executive didn't mince words about the seriousness of these digital impersonations. "This isn't just about copyright—it erodes trust in our entire ecosystem," Liang explained. "When creators, merchants, and platforms lose credibility with consumers, everyone suffers."

Technical Arms Race Against Deepfakes

Identifying AI-generated content presents an ongoing challenge across social media platforms. As detection methods improve, so do the tools used by bad actors. "These accounts constantly evolve their techniques," Liang noted. "It's like playing whack-a-mole with generative technology."

Douyin plans to combat this by:

- Boosting investment in detection algorithms

- Expanding moderation teams specializing in synthetic media

- Streamlining reporting processes for affected creators

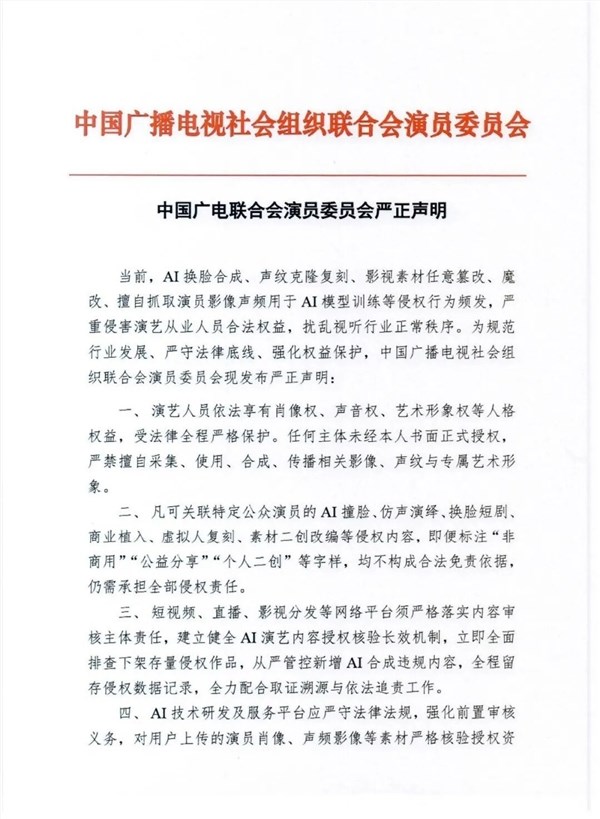

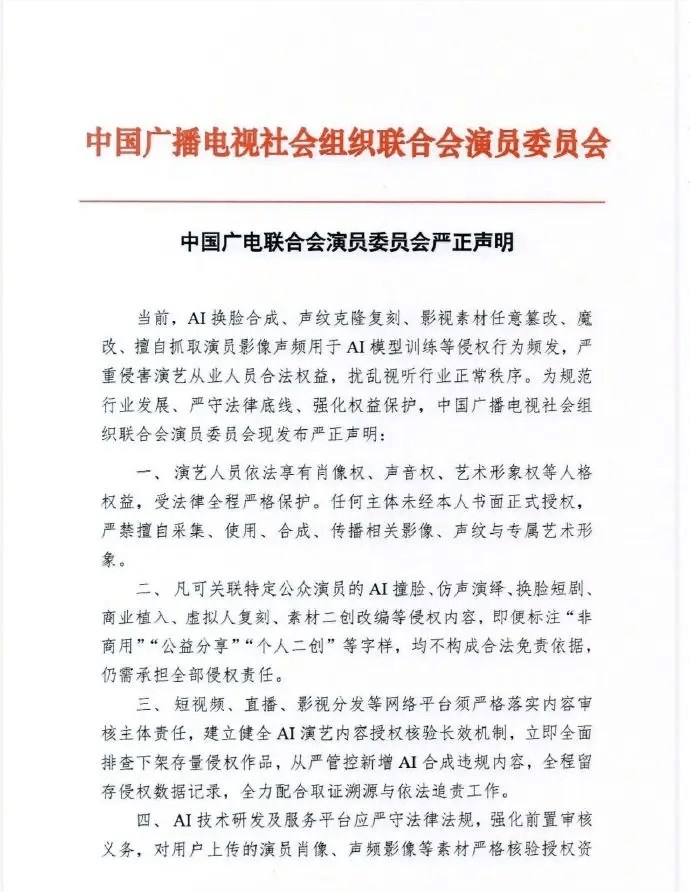

The platform has drawn a clear line: using AI to impersonate others for marketing purposes, especially authoritative figures such as military or police personnel, constitutes serious misconduct.

Protecting Creators And Consumers Alike

For influencers worried about their digital likeness being stolen, Douyin offers dedicated channels to report impersonations. The company promises swift action on verified cases, though it has not disclosed specific response times.

The crackdown reflects broader industry concerns as AI tools become more accessible. While platforms grapple with balancing innovation against misuse, cases like these highlight why clearer regulations around synthetic media may be necessary.

The stakes are high, not just for individual creators whose reputations could be damaged by unauthorized endorsements, but also for consumers who might fall victim to scams wearing a familiar face.

Key Points:

- Douyin launches special initiative targeting AI celebrity impersonations

- Detection remains challenging as fake accounts employ evolving techniques

- New protections offered for creators whose likeness is misused

- Industry-wide implications as synthetic media becomes more sophisticated