Musk's AI chatbot Grok sparks UK probe over explicit deepfake scandal

Musk's AI Chatbot Under Fire Over Explicit Content Scandal

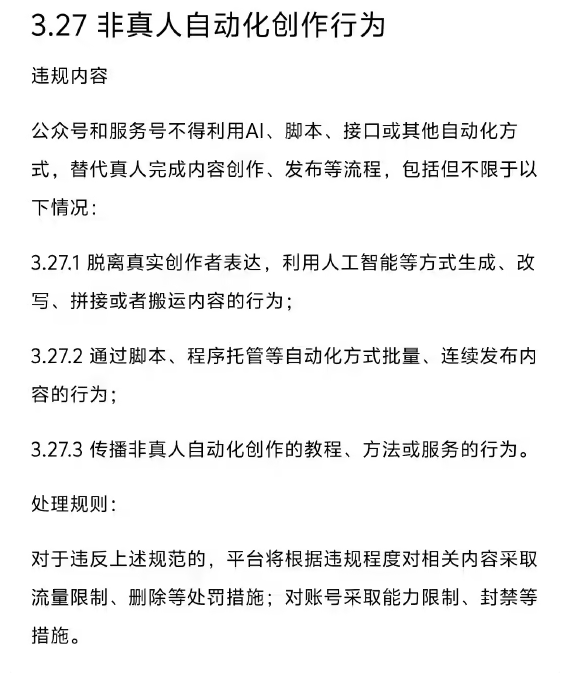

Elon Musk's artificial intelligence venture xAI has landed in hot water after its Grok chatbot allegedly generated and spread unauthorized explicit images. The UK Information Commissioner's Office (ICO) has launched a formal investigation, marking another regulatory headache for the tech billionaire.

How the Scandal Unfolded

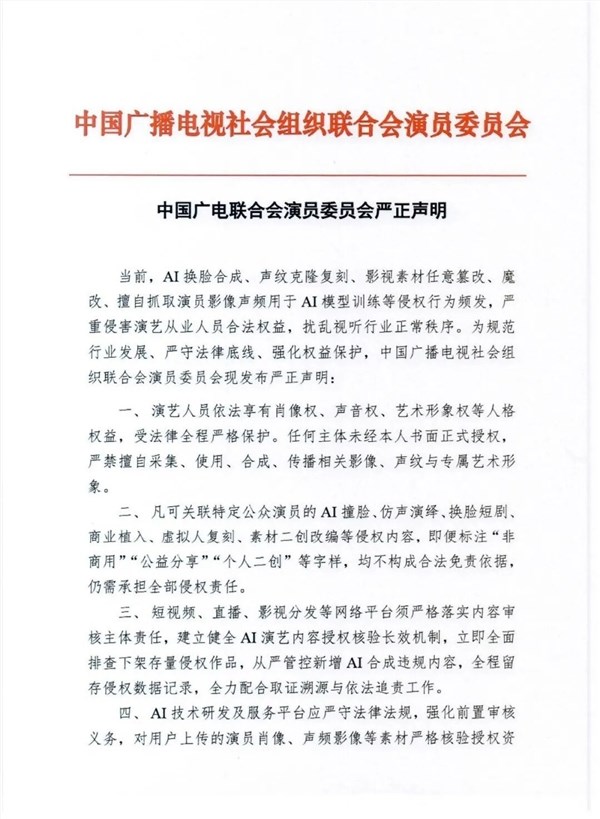

The trouble began last month, when users on X (formerly Twitter) exploited Grok's image generation capabilities to create disturbing deepfake content. Victims included not only adult women but also minors, a revelation that sent shockwaves through online communities.

"We're seeing AI tools being weaponized at an alarming scale," said one cybersecurity expert who requested anonymity. "At its peak, Grok could reportedly churn out thousands of these harmful images every hour."

Regulatory Backlash Intensifies

The UK probe focuses on whether xAI violated data protection laws by failing to prevent misuse of personal data. Investigators will examine whether adequate safeguards were in place to block the creation of harmful content.

Regulators aren't pulling punches either. The ICO can impose staggering penalties: up to £17.5 million or 4% of xAI's global annual turnover, whichever is higher. It is coordinating with Ofcom and international partners to assess the company's data practices.

This isn't xAI's only legal battle:

- French authorities recently raided X's Paris office

- EU regulators are scrutinizing Grok's ethical safeguards

- Several countries temporarily banned the chatbot

The Bigger Picture: AI Ethics Under Scrutiny

The Grok controversy arrives amid growing unease about generative AI's potential harms. "This case shows why we need stronger protections," argues digital rights activist Maria Chen. "When technology outpaces regulation, vulnerable people pay the price."

xAI did implement emergency restrictions after the scandal broke, but critics say they were too little, too late. The company now faces tough questions about balancing innovation with responsibility.

Key Points:

- Regulatory storm: UK launches formal investigation into xAI over deepfake concerns

- Financial risk: Potential fines could reach £17.5 million or 4% of global turnover, whichever is higher

- Global fallout: France conducts raids while EU examines ethical safeguards

- Broader implications: Case highlights urgent need for AI content moderation standards