Google's AI Crackdown Leaves Email Automation Users in the Cold

Google Draws Hard Line on AI Email Automation

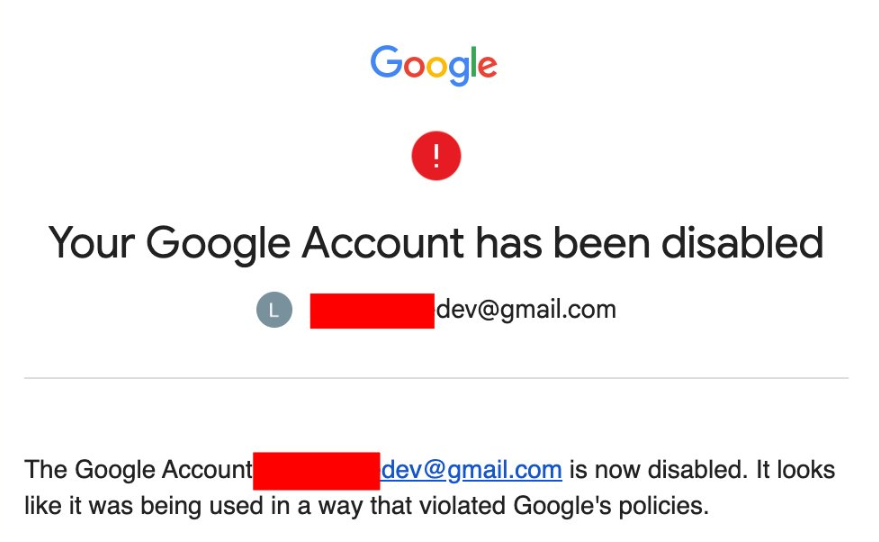

What started as a convenient way to manage overflowing inboxes has turned into a digital nightmare for some Gmail users. Google's recent enforcement actions against AI-powered email tools have resulted in complete account terminations, a drastic step that has left many scrambling to recover years of personal and professional data.

The Heavy Price of Automation

Unlike previous restrictions that limited specific features, these latest penalties hit with sledgehammer force. "It wasn't just my email that disappeared," shared one affected user who'd maintained their account since 2014. "My entire digital life - family photos, work documents, even my Google Play purchases - all gone in an instant."

The common thread? These users had authorized third-party AI services like OpenClaw to access their accounts. These tools promise to revolutionize email management by automatically sorting messages, drafting replies, and even negotiating with senders - but their machine-like behavior appears to have triggered Google's security alarms.

Why the Hammer Fell

Security analysts point to two primary triggers for the bans:

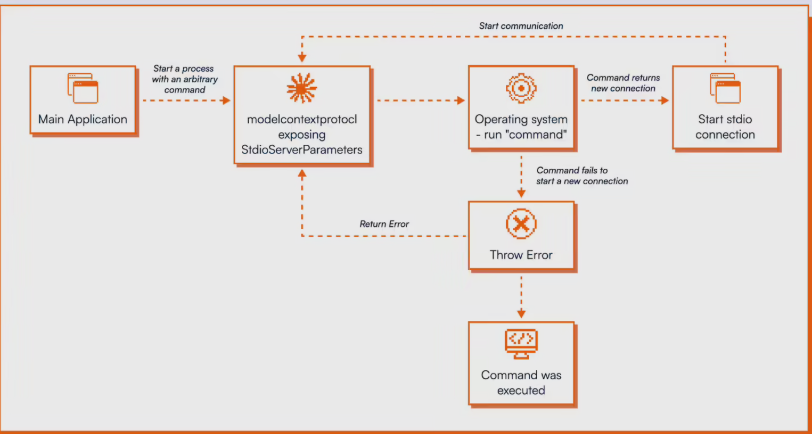

Unnatural Activity Patterns: AI agents work differently than humans - they perform rapid-fire operations at all hours without breaks. To Google's systems, this looks suspiciously like bot activity or account hacking attempts.

Subscription Workarounds: Some users reportedly tried sharing paid service tokens among multiple accounts, essentially getting premium features without paying. This blatant policy violation left Google little choice but to act.
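To make the first trigger concrete, here is a toy sketch of how rapid-fire, around-the-clock activity could be told apart from a human rhythm. It is not Google's detection logic, which is not public; the function name `looks_automated` and every threshold in it are assumptions chosen purely for illustration.

```python
from datetime import datetime, timedelta


def looks_automated(timestamps, max_gap_seconds=2.0,
                    rapid_share_threshold=0.8, min_active_hours=20):
    """Toy heuristic (hypothetical, not any real provider's rule).

    Flags an activity log when most consecutive operations are only a
    couple of seconds apart AND activity shows up in nearly every hour
    of the day.
    """
    if len(timestamps) < 2:
        return False

    ordered = sorted(timestamps)
    # Seconds between each pair of consecutive operations.
    gaps = [(later - earlier).total_seconds()
            for earlier, later in zip(ordered, ordered[1:])]

    # Share of gaps short enough to count as "rapid-fire".
    rapid_share = sum(1 for gap in gaps if gap <= max_gap_seconds) / len(gaps)

    # Distinct hours of the day (0-23) in which any operation happened.
    active_hours = {moment.hour for moment in ordered}

    return (rapid_share >= rapid_share_threshold
            and len(active_hours) >= min_active_hours)


# An agent firing ten operations one second apart, every hour of the day.
day = datetime(2024, 1, 1)
agent_log = [day + timedelta(hours=h, seconds=s)
             for h in range(24) for s in range(10)]

# A person checking mail a handful of times during working hours.
human_log = [day + timedelta(hours=h, minutes=m)
             for h, m in [(9, 5), (9, 40), (12, 15), (15, 30), (17, 50)]]

print(looks_automated(agent_log))
print(looks_automated(human_log))
```

With these made-up thresholds the agent log is flagged and the human log is not. A real system would presumably weigh many more signals, so treat this only as an intuition aid.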

"These aren't accidental violations," explains cybersecurity expert Dr. Elena Martinez. "When you combine automated behavior that mimics hacking attempts with deliberate attempts to circumvent payment systems, you're essentially waving a red flag at one of the world's most sophisticated security teams."

Damage Control and Prevention

The OpenClaw development team confirms they're working on a "compatibility mode" to make their tool less detectable by security systems. But until solutions emerge, experts recommend immediate precautions:

- Stop connecting automation tools to primary accounts immediately

- Create separate accounts specifically for testing AI services

- Implement regular local backups of critical cloud data

- Review all third-party app permissions in your Google account settings
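The backup recommendation above can be sketched in a few lines. This example assumes the cloud data has already been exported to a local folder (for instance with an export tool such as Google Takeout); the function name `backup_directory` and the folder layout are hypothetical, and the backup folder should live outside the folder being archived.

```python
import hashlib
import shutil
from datetime import datetime
from pathlib import Path


def backup_directory(source_dir, backup_root):
    """Zip source_dir into backup_root under a timestamped name and
    write a SHA-256 checksum file next to the archive."""
    source = Path(source_dir).resolve()
    root = Path(backup_root).resolve()
    root.mkdir(parents=True, exist_ok=True)

    # A timestamp in the name keeps older backups instead of overwriting them.
    stamp = datetime.now().strftime("%Y%m%d-%H%M%S")
    archive_base = root / f"{source.name}-{stamp}"
    archive_path = Path(
        shutil.make_archive(str(archive_base), "zip", root_dir=str(source))
    )

    # Hash the archive in chunks so large backups do not need to fit in memory.
    digest = hashlib.sha256()
    with archive_path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(1024 * 1024), b""):
            digest.update(chunk)

    checksum_path = archive_path.with_name(archive_path.name + ".sha256")
    checksum_path.write_text(f"{digest.hexdigest()}  {archive_path.name}\n")
    return archive_path, checksum_path
```

Calling `backup_directory("exports/mail", "backups")` on a schedule, with both paths being placeholders, would leave a dated archive and a checksum that can later confirm the file has not been corrupted.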

The situation serves as a stark reminder that while AI promises convenience, relying too heavily on automation tools comes with real risks - especially when they interact with services containing irreplaceable personal data.

Key Points:

- Total Account Wipeouts: Google is banning entire accounts, not just restricting features

- Two Triggers: Both automated behavior and payment evasion can lead to a ban

- Data Recovery Unlikely: Permanent bans offer little recourse for recovering lost files

- Protect Yourself Now: Experts urge immediate changes to prevent catastrophic data loss