Critical Flaw in AI Protocol Leaves 200,000 Servers Vulnerable

AI Security Crisis: 200,000 Servers at Risk from Protocol Flaw

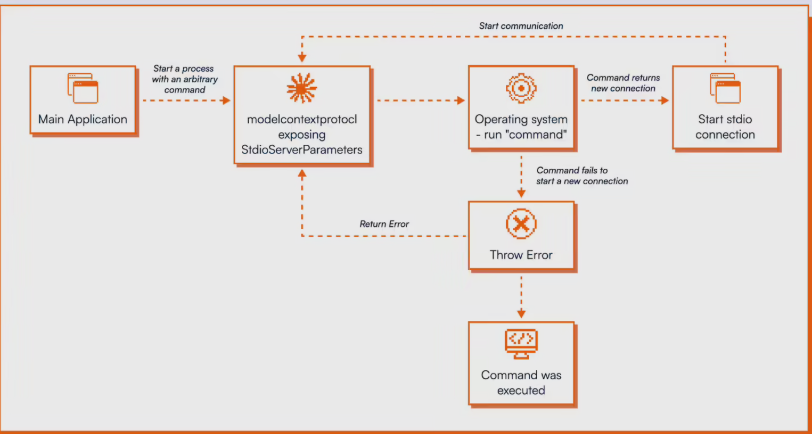

The AI development community is sounding alarms after cybersecurity firm OX Security exposed critical vulnerabilities in Anthropic's Model Context Protocol (MCP). This widely adopted standard, designed to connect AI models with external tools, contains what experts call "a ticking time bomb" in its architecture.

The Heart of the Problem

At fault is MCP's STDIO interface, which blindly executes any incoming operating system commands - even when server startup fails. "This isn't just a coding error," explains OX Security lead researcher Daniel Chen. "It's like building a house where every window automatically unlocks when the front door jams."

The flaw affects all 11 programming languages officially supported by MCP, from Python to Rust. Chen's team spent months testing real-world attack scenarios with frightening results:

- LangFlow systems could be hijacked without login credentials

- Letta AI servers fell prey to man-in-the-middle attacks

- Flowise's security filters proved easily bypassed

- Windsurf IDE users risked infection just by visiting malicious sites

Industry Response Falls Short

When notified last January, Anthropic surprisingly dismissed the issue as "expected behavior." Their only action? Updating documentation to suggest "caution" when using the STDIO adapter. Meanwhile, tests showed 9 of 11 major MCP marketplaces accepted malicious servers without review - only GitHub's registry caught the threat.

"We're seeing the equivalent of leaving master keys under doormats," Chen warns. "Except these keys work on hundreds of thousands of digital doors worldwide."

What This Means for Developers

With no architectural fix forthcoming, experts urge immediate precautions:

- Audit all MCP implementations

- Isolate MCP-dependent services

- Monitor for unusual command activity

The cybersecurity community has assigned the vulnerability a CVE number, but the ball remains in Anthropic's court for a permanent solution.

Key Points

- 200,000+ servers vulnerable to remote attacks

- All 11 supported languages affected

- No architectural fix from Anthropic since January

- 9 of 11 marketplaces fail basic security checks

- Researchers confirm real-world exploitability