Critical Security Flaws Found in Widely Used AI Protocol

AI Security Alert: Fundamental Flaws in Popular Protocol

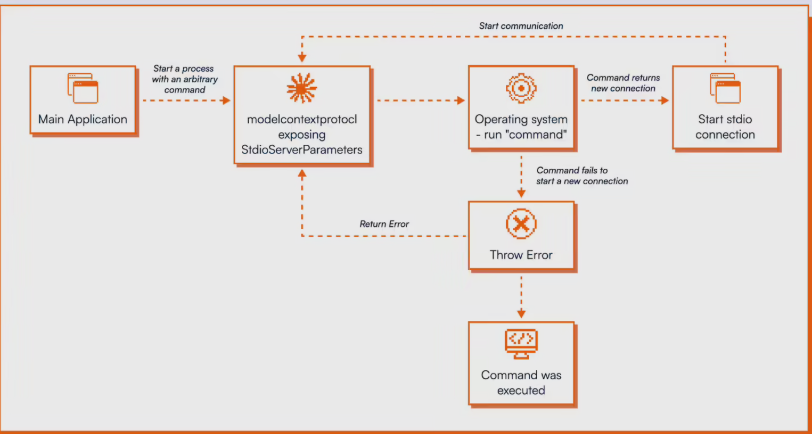

Security researchers from OX Security have sounded the alarm about critical vulnerabilities in Anthropic's Model Context Protocol (MCP), a standard used by major tech firms including Microsoft and Google. The findings reveal not just surface-level bugs but deep architectural issues that could compromise entire AI systems.

The Scope of the Problem

The vulnerabilities, now assigned multiple CVE identifiers, stem from fundamental design choices in MCP's architecture. Researchers identified four primary attack methods:

- Unauthenticated UI injection

- Security hardening bypasses

- Prompt injection vulnerabilities

- Malicious plugin distribution channels

What makes these findings particularly concerning is that the flaws sit not in individual projects' implementation code but in the official SDKs themselves. As a result, projects built on the Python, TypeScript, Java, or Rust SDKs - essentially all MCP implementations - inherit the same vulnerabilities.

Real-World Impact

Several high-profile open-source projects including LiteLLM, LangChain, and IBM's LangFlow have already been confirmed vulnerable. Worse still, researchers demonstrated successful exploits in actual production environments, not just controlled test scenarios.

"When we found these issues, we expected Anthropic would treat them with urgency," said one researcher who asked to remain anonymous. "Instead, we were told these were intentional design decisions."

Industry Response and Recommendations

Security experts are urging immediate action:

- Isolate AI systems - Don't expose LLMs or related tools directly to public networks

- Treat all MCP input as untrusted - Implement strict validation measures

- Use sandbox environments - Contain potential breaches

- Update software promptly - Apply all available security patches
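The "treat all MCP input as untrusted" recommendation can be illustrated with a minimal sketch. It assumes a tool call arrives as a plain dictionary with `name` and `arguments` keys. The allowlist, the length limits, the character filter, and the `validate_tool_call` helper are all hypothetical and are not part of any MCP SDK. Real deployments would likely need stricter, tool-specific rules.

```python
import re
from typing import Any

# Hypothetical allowlist: tool name -> {argument name: maximum string length}.
# Any tool or argument not listed here is rejected outright.
ALLOWED_TOOLS: dict[str, dict[str, int]] = {
    "read_file": {"path": 256},
    "search": {"query": 512},
}

# Assumed filter: shell metacharacters and ASCII control characters.
SUSPICIOUS = re.compile(r"[;&|`$<>\x00-\x1f]")


class ValidationError(ValueError):
    """Raised when an incoming tool call fails validation."""


def validate_tool_call(request: Any) -> tuple[str, dict[str, str]]:
    """Return (tool name, arguments) if the request passes every check."""
    if not isinstance(request, dict):
        raise ValidationError("request must be an object")

    name = request.get("name")
    if not isinstance(name, str) or name not in ALLOWED_TOOLS:
        raise ValidationError(f"tool not allowed: {name!r}")

    args = request.get("arguments")
    if not isinstance(args, dict):
        raise ValidationError("arguments must be an object")

    schema = ALLOWED_TOOLS[name]
    unknown = set(args) - set(schema)
    if unknown:
        raise ValidationError(f"unexpected arguments: {sorted(map(str, unknown))}")
    missing = set(schema) - set(args)
    if missing:
        raise ValidationError(f"missing arguments: {sorted(missing)}")

    for key, max_len in schema.items():
        value = args[key]
        if not isinstance(value, str):
            raise ValidationError(f"{key} must be a string")
        if len(value) > max_len:
            raise ValidationError(f"{key} exceeds {max_len} characters")
        if SUSPICIOUS.search(value):
            raise ValidationError(f"{key} contains disallowed characters")

    # Return a copy so later mutation of the request cannot bypass validation.
    return name, dict(args)
```

The sketch rejects by default: anything not explicitly allowed raises `ValidationError` before the request reaches a tool. Validation of this kind narrows the attack surface but does not replace the isolation and sandboxing measures listed above.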

"These aren't just theoretical risks," warns cybersecurity analyst Mark Chen. "We're seeing active exploitation attempts already."

The Controversy Continues

Anthropic's stance has divided the AI community. While some argue protocol stability outweighs security concerns, others see treating the flaws as intended behavior as a dangerous precedent. With MCP's widespread adoption, the debate has implications far beyond any single company.

Key Points

- MCP contains fundamental security flaws in its architecture

- Vulnerabilities affect all major implementation languages

- Multiple high-profile projects already confirmed vulnerable

- According to the researchers, Anthropic described the issues as "intentional design decisions"

- Security experts recommend immediate protective measures