Anthropic's GitHub Cleanup Backfires, Wiping Thousands of Legit Repos

When Code Protection Goes Too Far

Anthropic found itself in hot water this week after an aggressive attempt to remove leaked source code from GitHub spiraled out of control. The AI company's cleanup operation mistakenly flagged and removed thousands of legitimate repositories, leaving developers scrambling to recover their work.

The Leak That Started It All

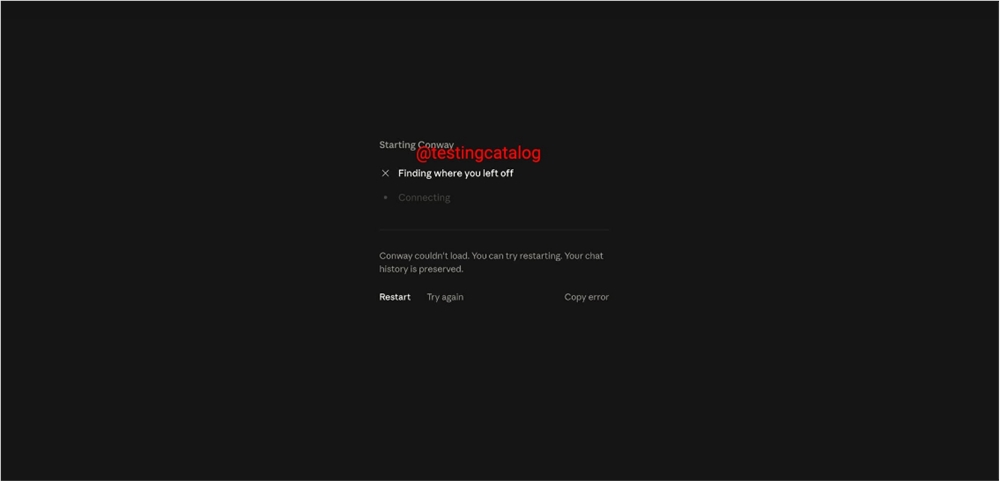

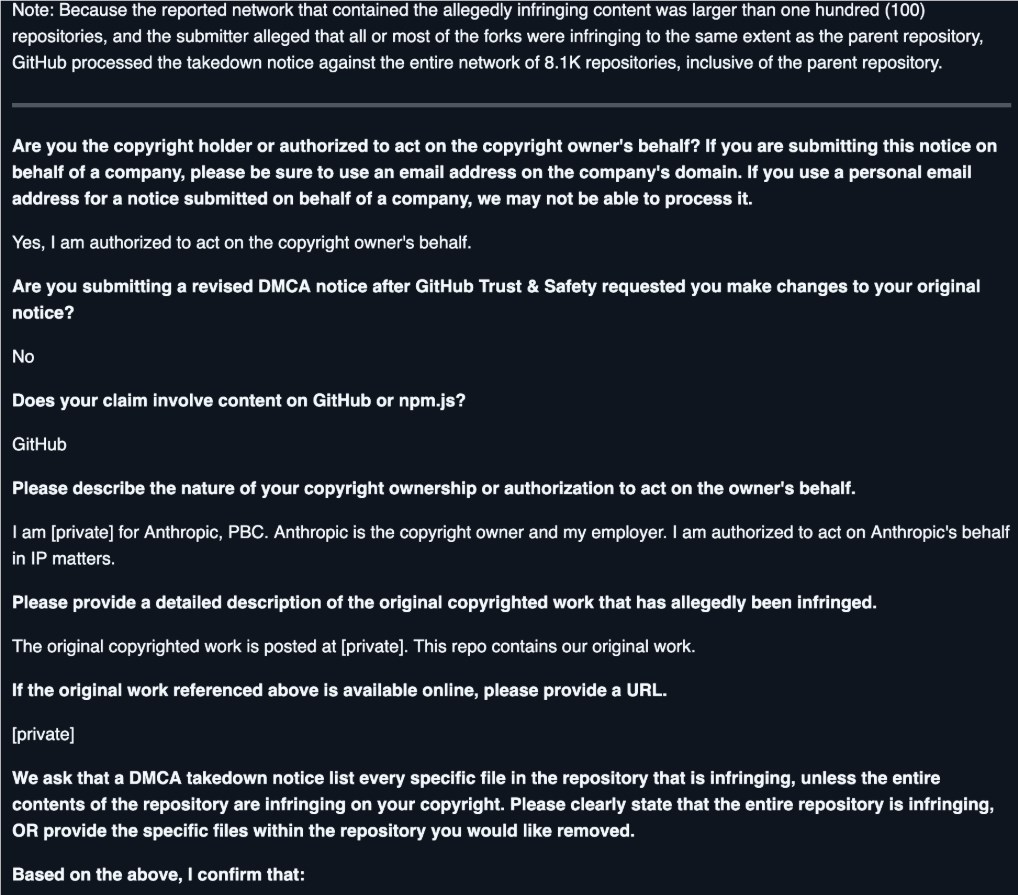

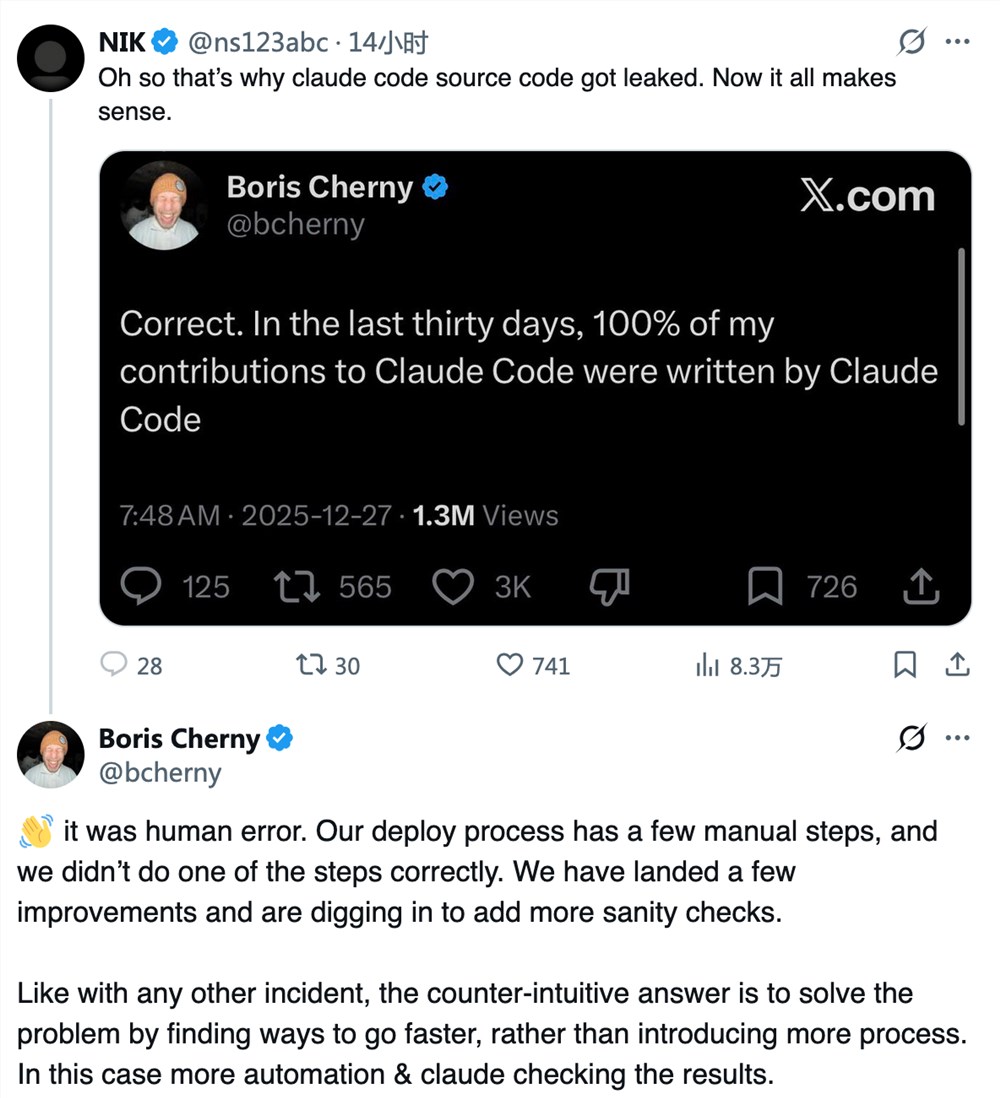

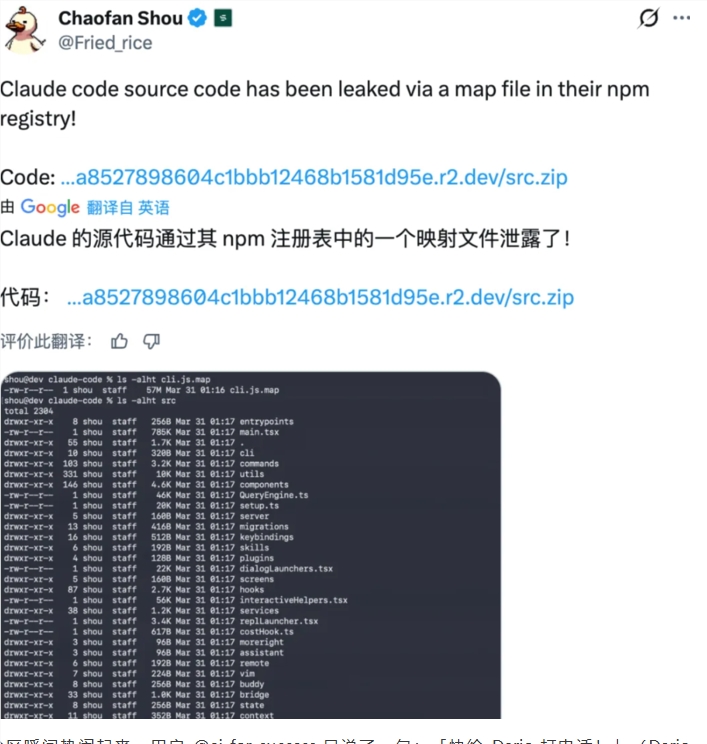

The chaos began when Anthropic accidentally published the source code for its Claude Code tool. While the company moved quickly to contain the leak, copies had already spread across GitHub. In its rush to mitigate the damage, Anthropic deployed automated tools to identify and remove repositories containing the leaked code.

"We were facing a serious security breach," an Anthropic spokesperson later explained. "But in our urgency, we failed to properly calibrate our detection systems."

Collateral Damage in the Open-Source Community

The automated scripts didn't discriminate between actual copies of the leaked code and projects that merely referenced it. Developers woke up to find their accounts suspended and their repositories gone, victims of what some are calling a "digital scorched earth" approach.

Open-source contributor Mark Reynolds described the frustration: "One day my project was fine, the next it vanished without warning. No email, no chance to appeal - just gone."
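
The distinction the scripts reportedly missed, between a verbatim copy and a project that merely mentions the leaked code, can be illustrated with a minimal sketch. Nothing here reflects Anthropic's or GitHub's actual tooling: the function names, the `claude-code` keyword, and the three-file threshold are all hypothetical, chosen only to show how exact content matching differs from keyword matching.

```python
import hashlib
from typing import Dict, Set


def file_digest(content: bytes) -> str:
    """Return the SHA-256 hex digest of a file's raw bytes."""
    return hashlib.sha256(content).hexdigest()


def classify_repo(
    files: Dict[str, bytes],
    leaked_digests: Set[str],
    keyword: bytes = b"claude-code",  # hypothetical keyword, for illustration only
    min_matches: int = 3,             # hypothetical threshold, for illustration only
) -> str:
    """Classify a repository by how strongly it resembles the leaked code.

    files maps a path to that file's bytes; leaked_digests holds digests
    of the known leaked files.
    """
    # Count files whose bytes are identical to a known leaked file.
    matches = sum(
        1 for content in files.values() if file_digest(content) in leaked_digests
    )
    if matches >= min_matches:
        return "likely_copy"
    if matches > 0:
        # A partial overlap is ambiguous, so route it to a person.
        return "needs_review"
    # A mention without any matching file is a reference, not a copy.
    if any(keyword in content for content in files.values()):
        return "reference_only"
    return "unrelated"
```

Under these assumptions, a repository whose only file is a README mentioning `claude-code` comes back as `reference_only` rather than `likely_copy`, so a takedown pipeline built this way would have grounds to leave it alone or send it to a human reviewer.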

A Security Blunder Compounded by Poor Crisis Response

Industry experts point out that the original leak resulted from what should have been an avoidable mistake: private TypeScript code accidentally packaged into public npm packages. But many argue Anthropic's heavy-handed response did more harm than good.

"This isn't just about a technical error," says open-source advocate Lisa Chen. "It reveals how little some tech giants understand about nurturing developer ecosystems. You can't treat community platforms like your private playground."
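
The packaging mistake experts describe, private TypeScript code ending up in a public npm package, is the kind of error a pre-publish check can catch. The sketch below is illustrative only: it assumes you already have the list of file paths that would go into the package (for example, as listed by a dry run of the packaging step), and the function name and the choice of extensions to flag are assumptions, not a description of any real project's release process.

```python
from pathlib import PurePosixPath
from typing import List


def find_unexpected_sources(packed_paths: List[str]) -> List[str]:
    """Return the paths that look like raw source rather than build output.

    Flags TypeScript sources and source maps (an assumed policy), while
    allowing .d.ts type declarations, which packages commonly ship.
    """
    flagged = []
    for path in packed_paths:
        name = PurePosixPath(path).name
        if name.endswith(".d.ts"):
            # Type declarations are expected in a published package.
            continue
        if name.endswith((".ts", ".tsx", ".map")):
            flagged.append(path)
    return flagged
```

A release script could refuse to publish whenever this function returns a non-empty list, turning an easy-to-miss mistake into a hard failure before anything reaches the public registry.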

Damage Control Mode

Anthropic has since apologized and is working with GitHub to restore affected projects. But trust within the developer community may take longer to rebuild. The incident serves as a cautionary tale about balancing security concerns with respect for collaborative platforms.

Key Points:

- Anthropic's leaked code cleanup accidentally removed thousands of legitimate GitHub repositories

- Automated tools failed to distinguish between actual copies of the leak and projects that merely referenced it

- Developers express anger over account suspensions without warning

- Incident highlights tension between corporate security and open-source values

- Company now working to restore mistakenly deleted content