Engineer's Firing Claim Turns Out to Be Clever Marketing Stunt

The Great AI Code Leak That Wasn't

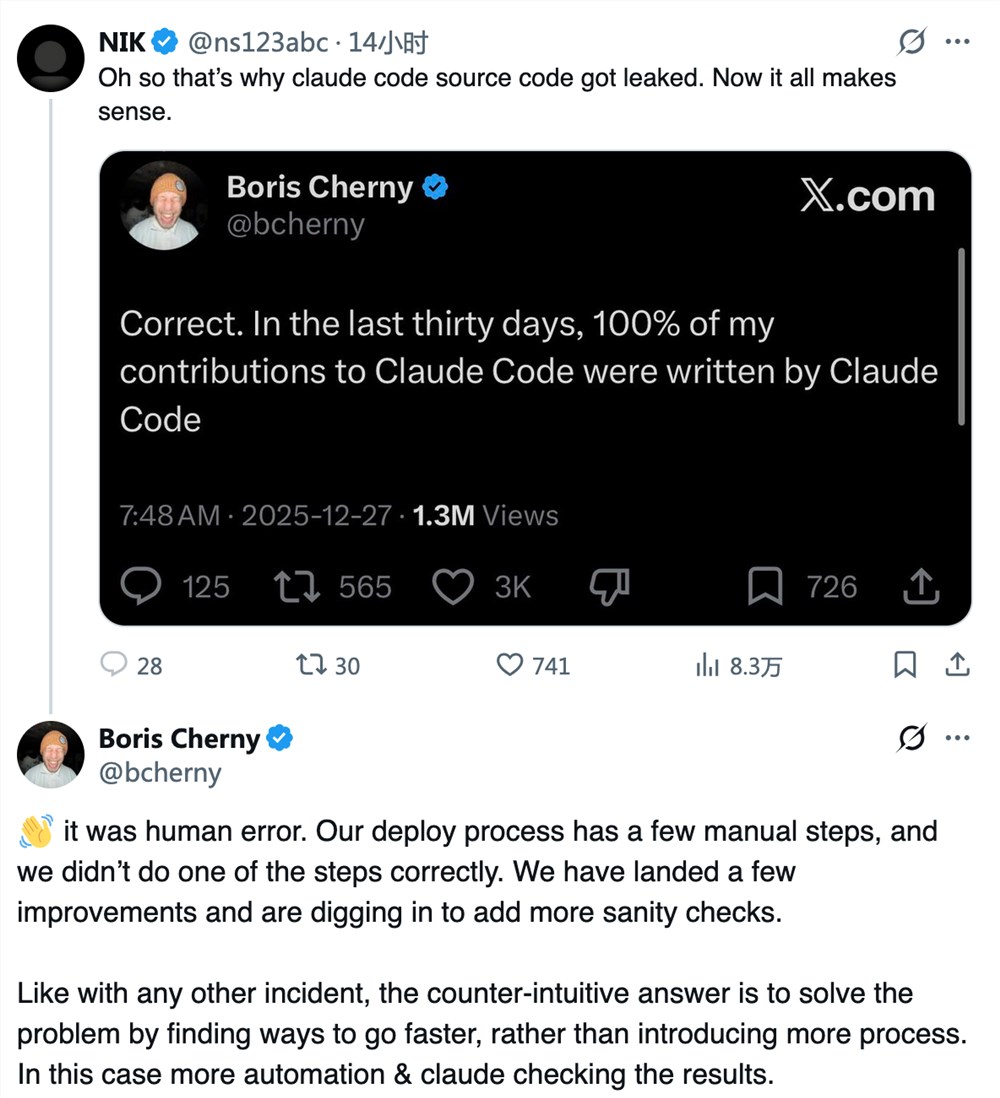

The tech world was buzzing when Kevin Naughton Jr. claimed he had been fired from Anthropic for accidentally leaking sensitive source code. The claim turned out to be an elaborate marketing ploy: Naughton never worked at Anthropic at all.

Truth Stranger Than Fiction

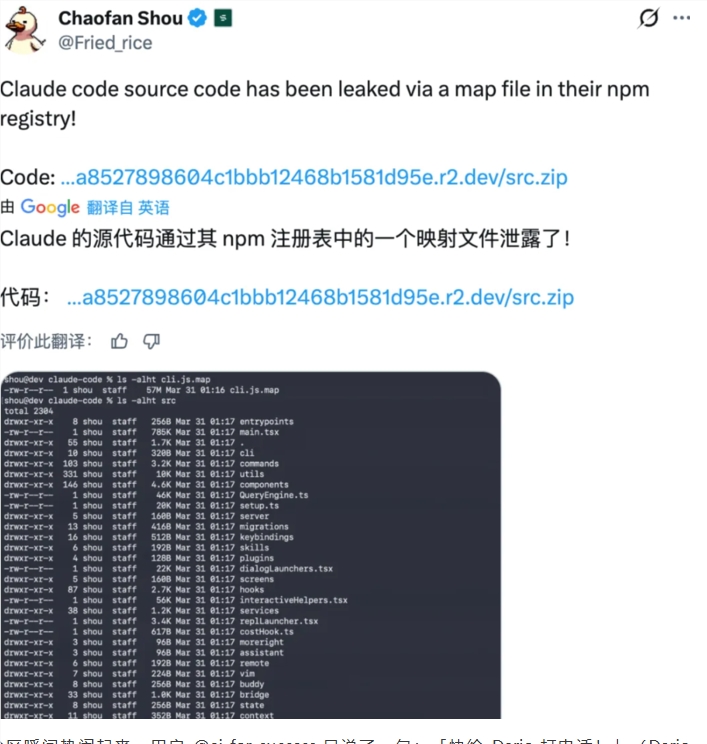

Here's where things get interesting: while Naughton's story was pure fiction, Anthropic really did leak code. The company accidentally exposed about 500,000 lines of Claude Code's source code when it included source map (.map) files in a release published to npm. Source maps exist so that debuggers can map bundled JavaScript back to the original files, and they can embed that original source in full.

Security experts note this created the perfect conditions for Naughton's stunt. "He basically surfed on a real wave of concern," said one industry analyst. "The leak was genuine, so his story gained traction before anyone could verify his employment status."
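For readers who publish their own npm packages, the failure mode described above is easy to check for before a release. The sketch below is a hypothetical pre-publish check, not a description of Anthropic's tooling or release process: the function name `find_source_maps` and the directory layout are assumptions made for illustration. It walks a package directory, lists every `.map` file, and flags those whose `sourcesContent` field embeds original source text.

```python
import json
import sys
from pathlib import Path


def find_source_maps(package_dir):
    """Return (relative_path, embeds_sources) for each .map file under package_dir.

    Hypothetical helper for illustration. embeds_sources is True when the map's
    "sourcesContent" array holds at least one non-empty string, meaning the
    original source text ships inside the map file itself.
    """
    root = Path(package_dir)
    findings = []
    for path in sorted(root.rglob("*.map")):
        if not path.is_file():
            continue
        try:
            data = json.loads(path.read_text(encoding="utf-8"))
        except (OSError, ValueError):
            # Unreadable or non-JSON file: still report it, but not as embedding source.
            data = None
        contents = data.get("sourcesContent") if isinstance(data, dict) else None
        embeds = isinstance(contents, list) and any(
            isinstance(item, str) and item for item in contents
        )
        findings.append((path.relative_to(root).as_posix(), embeds))
    return findings


if __name__ == "__main__":
    # Usage: python check_maps.py path/to/package
    target = sys.argv[1] if len(sys.argv) > 1 else "."
    results = find_source_maps(target)
    for rel_path, embeds in results:
        label = "embeds original source" if embeds else "no embedded source"
        print(f"{rel_path}: {label}")
    # A non-zero exit code lets a release script stop when any map is present.
    sys.exit(1 if results else 0)
```

A check like this only reports what is on disk. What actually ships is decided by the package's own configuration, such as the `files` field in `package.json` or an `.npmignore` file.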

What the Leak Actually Revealed

The exposed code offered developers a rare peek behind Anthropic's curtain:

- Automated Proxy Commands: Detailed how agents handle complex local instructions

- Prompt Engineering Secrets: Showed the company's system prompt matrix architecture

- Hidden Testing Modes: Included references to "Undercover" and "Bypass Permissions" modes being developed

Marketing Gone Viral

Naughton turned out to be quite the entrepreneur: he used his viral moment to promote his startup, Ferryman, even dropping discount codes in comment sections. The approach was effective, but it drew sharp criticism from the tech community.

"It's one thing to market your product," tweeted a prominent developer. "It's another to fabricate a whole identity crisis around someone else's mistake."

Bigger Than One Stunt

This incident raises important questions about:

- Tech companies' vulnerability to social engineering

- How easily real issues can be co-opted for personal gain

- The blurred lines between clever marketing and deception

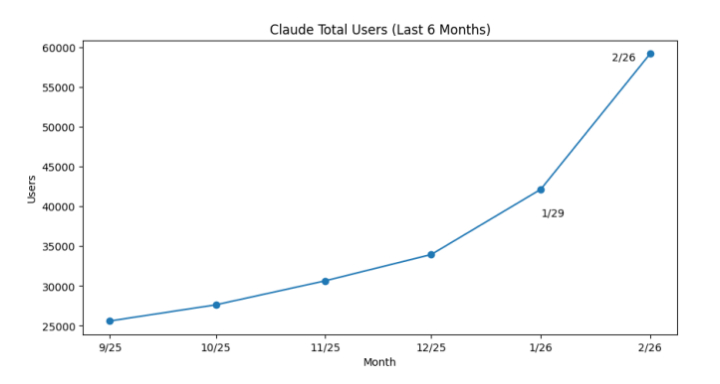

- Security practices at fast-growing AI firms

Key Points:

- Anthropic did experience a real source code leak, through source map (.map) files included in an npm release

- The "fired engineer" story was fabricated as marketing for startup Ferryman

- Leaked code revealed advanced Claude features under development

- Incident highlights security and PR challenges facing AI companies