Claude Code Leak Exposes AI Industry's Automation Gaps

How a Simple File Caused Major AI Headaches

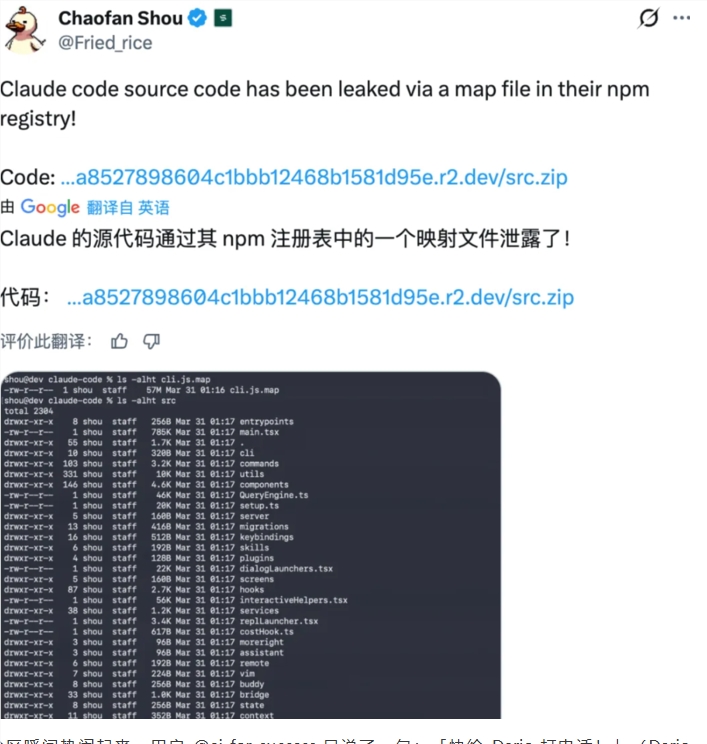

In the high-stakes world of artificial intelligence, the biggest problems sometimes come from the smallest oversights. Anthropic, the company behind the Claude AI models, recently learned this lesson the hard way when a source map (.map) file slipped into a production release of Claude Code, exposing the tool's source code to the public.

The Domino Effect of One Mistake

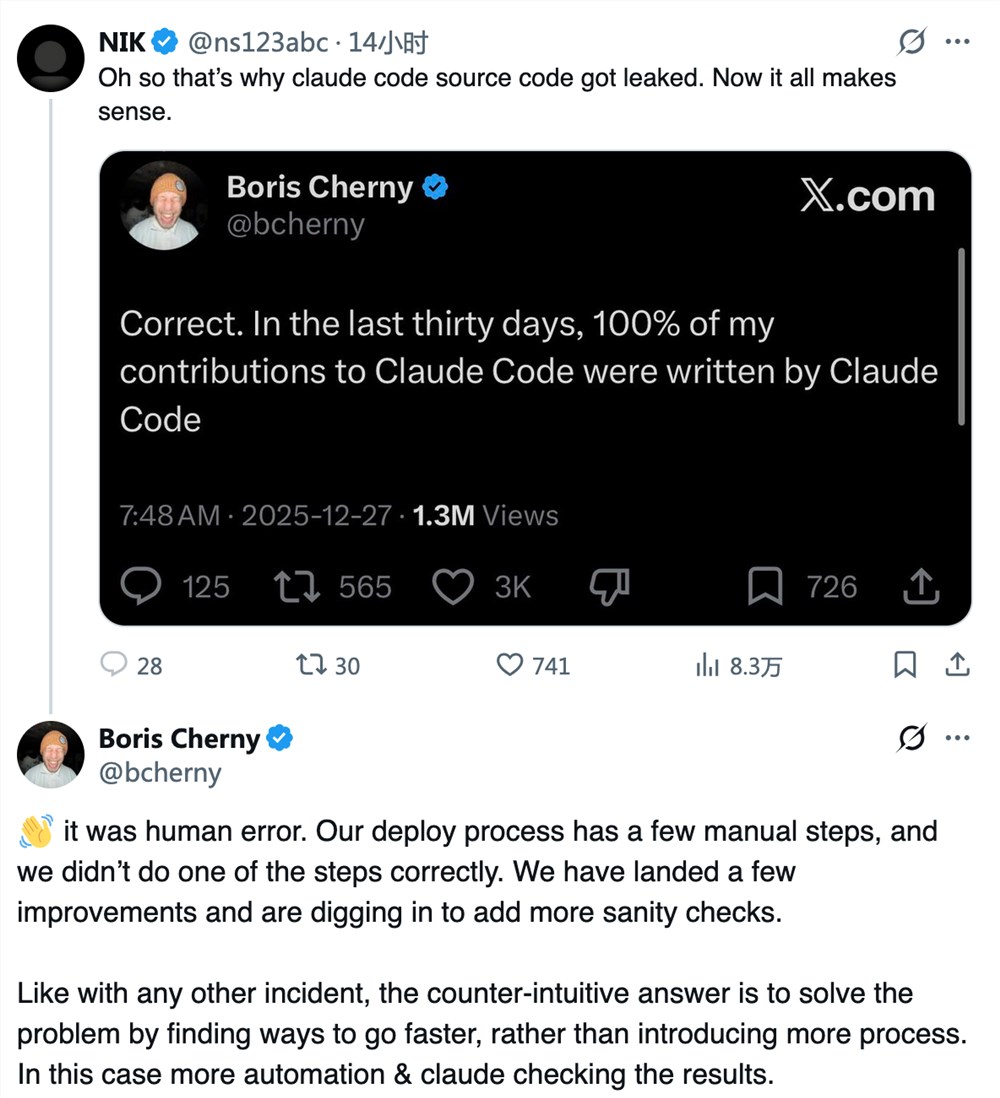

Core developer Boris Cherny described what went wrong in surprisingly frank terms: "We packaged our product like we always do, but this time we forgot one crucial step - scrubbing the MAP file clean." That single oversight gave developers worldwide an unexpected look under the hood of Claude Code.
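Why would one stray file reveal so much? Assuming the leaked file was a standard JavaScript source map (the Source Map Revision 3 JSON format that bundlers emit for debugging), it can carry the original, pre-bundling source text in its optional `sourcesContent` array, paired index-by-index with the file paths listed in `sources`. The minimal sketch below pulls that embedded text back out. The example map and the file names in it (`bundle.js`, `src/main.ts`, `src/feature_flags.ts`) are hypothetical and are not taken from the leaked file.

```python
import json


def embedded_sources(map_text: str) -> dict[str, str]:
    """Return {original_path: source_text} for every source whose full text
    is embedded in a Source Map v3 document via `sourcesContent`."""
    data = json.loads(map_text)
    sources = data.get("sources", [])
    contents = data.get("sourcesContent") or []
    recovered = {}
    # `sourcesContent` is parallel to `sources`; a null entry means the text
    # for that source was not embedded.
    for path, text in zip(sources, contents):
        if text is not None:
            recovered[path] = text
    return recovered


# Hypothetical map for a bundled file; real maps are far larger.
example_map = json.dumps({
    "version": 3,
    "file": "bundle.js",
    "sources": ["src/main.ts", "src/feature_flags.ts"],
    "sourcesContent": ["export const main = () => run();\n", None],
    "mappings": "AAAA",
})

for path, text in embedded_sources(example_map).items():
    print(f"{path}: {len(text)} characters of original source")
```

Nothing here requires special tooling: any `.map` file that ships inside a package can be read this way by anyone who downloads it.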

The consequences were immediate. Within hours, GitHub hosted over 8,100 repositories containing portions of the leaked code. While some developers treated it as an educational opportunity, Anthropic had to move quickly to contain the damage.

Damage Control Mode

The company's response combined legal action with technological soul-searching:

- Legal Takedowns: Filing a wave of DMCA notices with GitHub to remove repositories hosting the leaked code

- Process Overhaul: Identifying manual steps in deployment as critical failure points

- Automation Push: Planning to use Claude itself to verify future deployments
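Anthropic has not published what its automated checks will look like. As a hypothetical illustration of the kind of step that could replace a manual "scrub the map file" task, whether Claude runs it or a plain script does, the sketch below scans a directory that is about to be published and reports any `.map` files, plus any JavaScript file containing a `sourceMappingURL=` comment that points to a map. The function names and the set of file extensions are assumptions, not a description of Anthropic's pipeline.

```python
from pathlib import Path

# Hypothetical release gate: these extensions are assumptions about what a
# JavaScript package might ship, not a description of any real pipeline.
JS_SUFFIXES = {".js", ".mjs", ".cjs"}
MAP_REFERENCE = "sourceMappingURL="


def find_source_map_leaks(package_dir: str) -> list[str]:
    """Return sorted, POSIX-style relative paths of files that could expose
    original source: .map files and JS files that reference a source map."""
    root = Path(package_dir)
    flagged = []
    for path in root.rglob("*"):
        if not path.is_file():
            continue
        rel = path.relative_to(root).as_posix()
        if path.suffix == ".map":
            flagged.append(rel)  # the source map itself
        elif path.suffix in JS_SUFFIXES:
            text = path.read_text(encoding="utf-8", errors="ignore")
            if MAP_REFERENCE in text:
                flagged.append(rel)  # a bundle pointing at a map (file or inline data: URL)
    return sorted(flagged)


def assert_no_source_maps(package_dir: str) -> None:
    """Raise if anything in package_dir looks like a source map artifact."""
    flagged = find_source_map_leaks(package_dir)
    if flagged:
        raise RuntimeError(
            "refusing to publish, source map artifacts found: " + ", ".join(flagged)
        )
```

A release job could call `assert_no_source_maps` on the staged package directory and stop the publish when it raises. The check is deliberately conservative: a JavaScript file that merely mentions `sourceMappingURL=` in a string is flagged too, which is usually an acceptable price for a gate that runs without a human remembering to run it.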

"Irony isn't lost on us," Cherny admitted. "We build tools to prevent these exact mistakes, yet here we are."

Bigger Than One Company's Problem

This incident isn't isolated. From OpenAI to smaller startups, rapid AI development often outpaces security measures. The leak raises uncomfortable questions:

- Are current deployment practices adequate for complex AI systems?

- How much should we rely on humans versus automation?

- Can any company truly secure its AI assets in today's environment?

The developer community remains divided. Some see it as a rare learning opportunity; others warn it sets dangerous precedents for intellectual property in AI.

Key Points:

- Root Cause: A source map (.map) file shipped unfiltered in a standard release

- Response: Legal takedowns combined with process automation plans

- Industry Impact: Highlights tension between rapid innovation and security

- Future Steps: Anthropic betting on more automation to prevent human error