Anthropic's Safety Reputation Takes a Hit After Back-to-Back Data Leaks

Anthropic's Security Stumbles Raise Industry Eyebrows

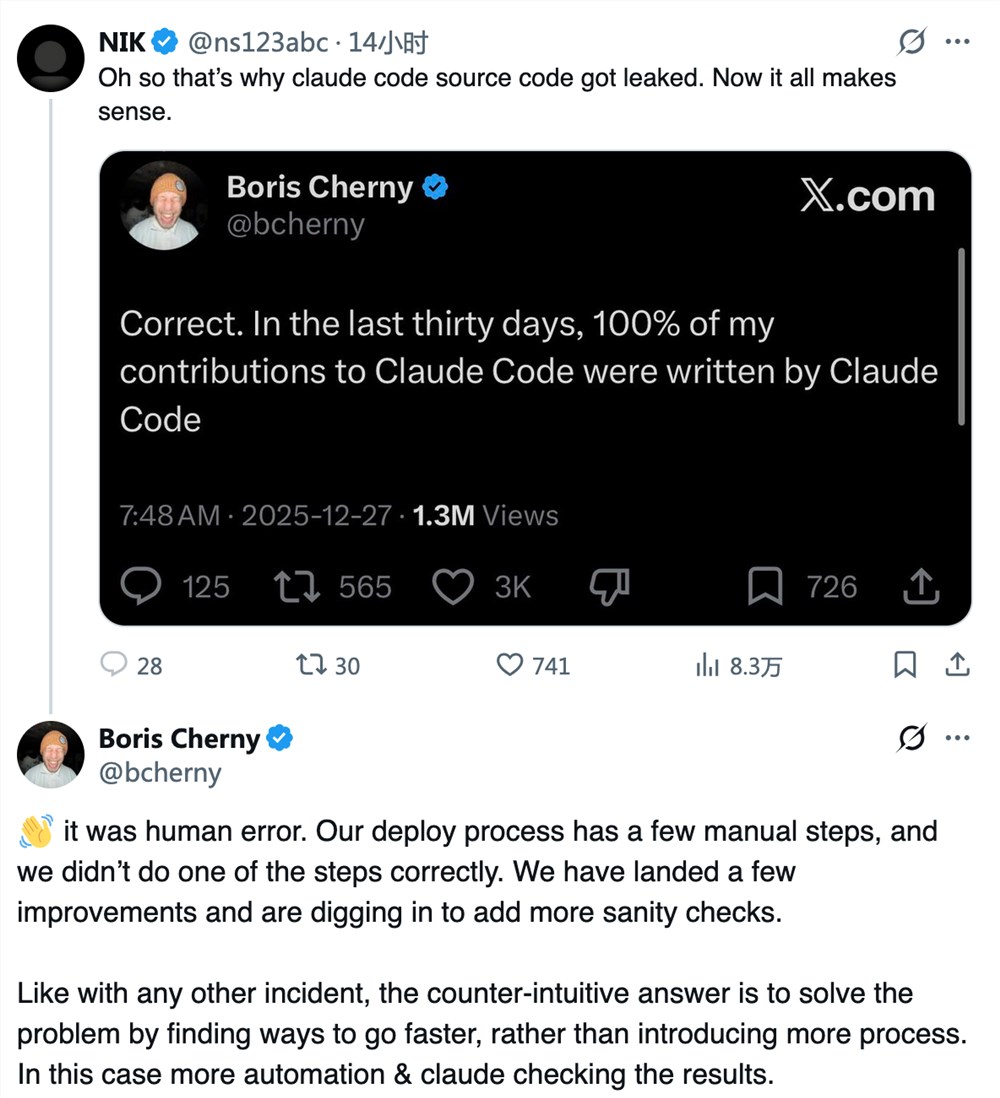

The AI world is buzzing after Anthropic - long considered the gold standard for responsible AI development - suffered not one but two significant data leaks within days. What makes these incidents particularly shocking is not only their scale but also their cause: simple human error rather than sophisticated cyberattacks.

The Leaks That Shook Silicon Valley

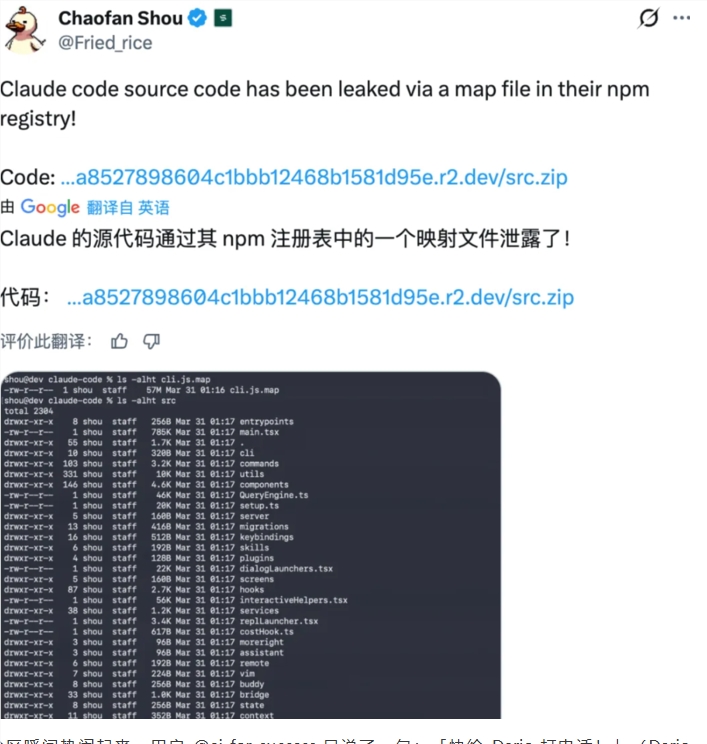

Last week's accidental disclosure of 3,000 internal documents might have been written off as an isolated mistake. Tuesday's source code leak made that much harder to argue. Because of what the company describes as a "release packaging issue," more than half a million lines of proprietary code spilled onto the internet - including sensitive model behavior instructions and tool restriction logic.

"This wasn't just some API wrapper," one developer analyzing the leaked code told us. "We're looking at production-grade development tools with deep integration capabilities. The technical sophistication here explains why competitors are sweating."

Ripple Effects Across the Industry

The leaks sent shockwaves beyond Anthropic's headquarters:

- OpenAI reportedly paused its Sora video generation tool just six months after launch, with insiders citing competitive pressure from Claude Code as a factor

- GitHub repositories containing the leaked code multiplied faster than Anthropic's legal team could issue takedowns

- Security experts expressed concern that such fundamental errors came from a company positioning itself as an AI safety leader

A Crisis of Confidence

At stake is more than just proprietary technology - it's Anthropic's carefully cultivated identity as the responsible adult in the AI room. These incidents couldn't have come at a worse time, as the company engages in high-stakes policy debates about AI regulation with U.S. government agencies.

"When you're telling policymakers you should be trusted with existential AI safety," noted one industry analyst, "you can't be fumbling basic software packaging checks."

The leaks point to an uncomfortable possibility: Anthropic's engineering rigor may not have kept pace with its rapid growth and technical ambitions. As one former employee put it: "Research brilliance doesn't automatically translate to operational excellence at scale."

Key Points:

- Double whammy: Two major leaks (documents + source code) within one week

- Self-inflicted wounds: Both incidents resulted from internal errors, not external hacks

- Competitive fallout: Leaked code revealed Claude Code's strength, impacting rivals like OpenAI

- Reputation risk: Incidents undermine Anthropic's position as an AI safety leader during critical policy debates