Anthropic's Code Leak Exposes AI Secrets and Surprise Features

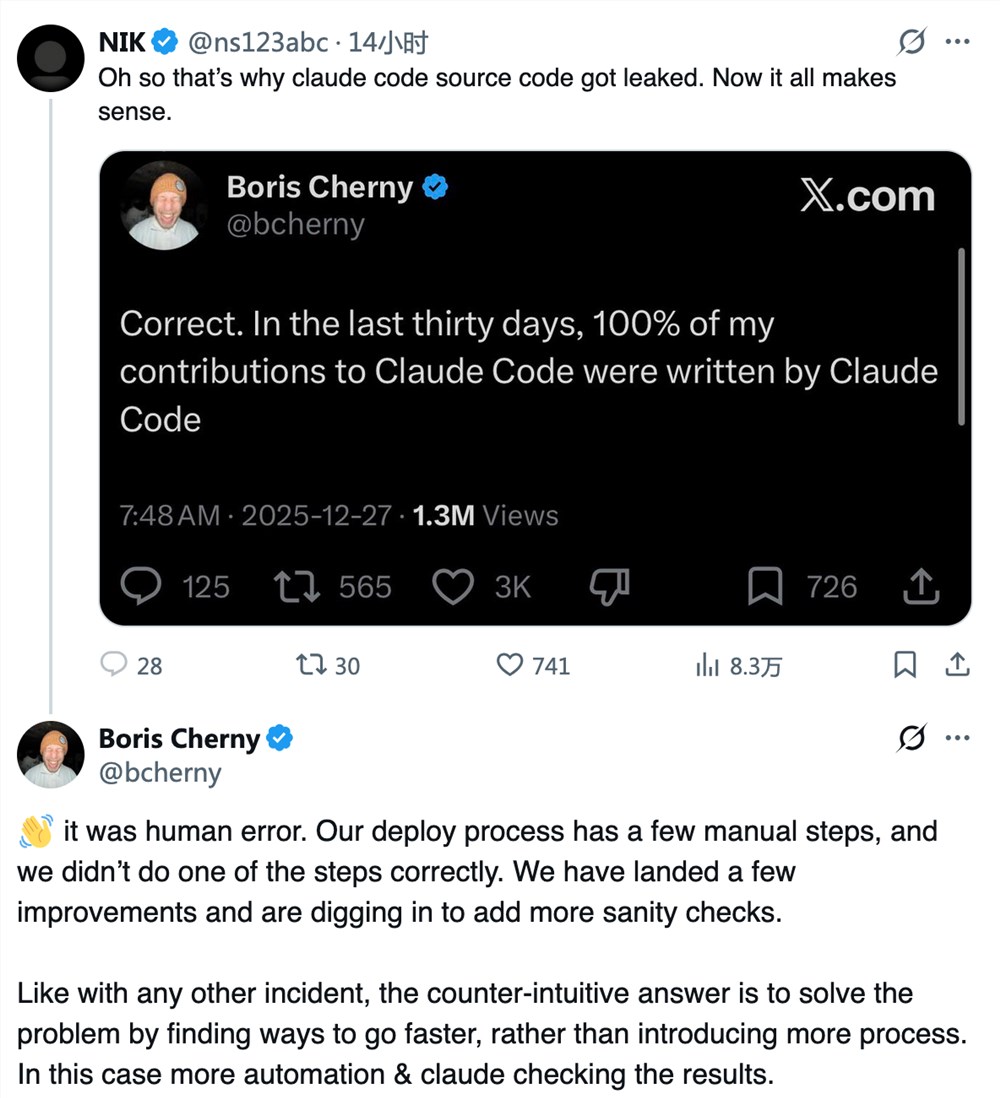

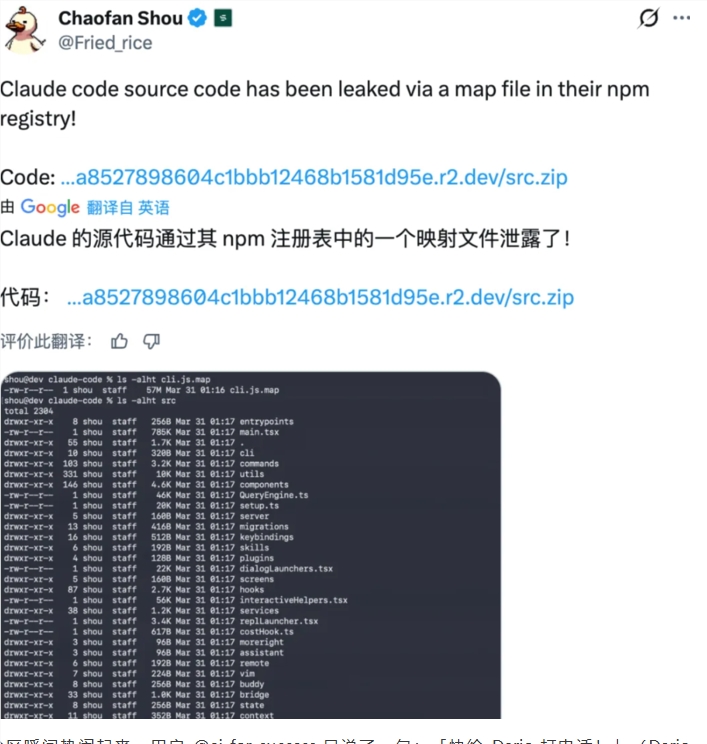

In what experts are calling a "basic but catastrophic" mistake, artificial intelligence firm Anthropic has accidentally exposed more than half a million lines of proprietary code. The leak occurred when the company failed to exclude source map (.map) files from a routine npm package release. Source maps link bundled JavaScript back to the original source files, so publishing one can expose the code it was built from.
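To illustrate why a stray .map file matters, here is a minimal TypeScript sketch based on the public Source Map v3 format. In that format, the `sources` array lists original file paths, and the optional `sourcesContent` array can carry each file's full original text. The helper names `embeddedSources` and `findSourceMaps`, the sample map, and the file list are all hypothetical. This is a generic illustration of the format and does not claim to reproduce the structure of the Claude Code map file or Anthropic's build process.

```typescript
// Subset of the Source Map v3 format relevant to source exposure.
interface SourceMapV3 {
  version: number;
  file?: string;
  sources: string[]; // original file paths
  sourcesContent?: (string | null)[]; // optional full text, index-aligned with `sources`
  mappings: string;
  names?: string[];
}

interface EmbeddedSource {
  path: string;
  lineCount: number;
}

// Hypothetical helper: list every original source whose full text
// is embedded directly inside the map.
function embeddedSources(map: SourceMapV3): EmbeddedSource[] {
  const contents = map.sourcesContent ?? [];
  const result: EmbeddedSource[] = [];
  map.sources.forEach((path, i) => {
    const text = contents[i];
    if (typeof text === "string") {
      result.push({ path, lineCount: text.split("\n").length });
    }
  });
  return result;
}

// Hypothetical pre-publish guard: flag any .map file in the list of
// files about to be published.
function findSourceMaps(publishedFiles: string[]): string[] {
  return publishedFiles.filter((f) => f.endsWith(".map"));
}

// Illustrative data only, not taken from the leaked package.
const exampleMap: SourceMapV3 = {
  version: 3,
  file: "cli.js",
  sources: ["src/main.ts", "src/util.ts"],
  sourcesContent: ["export const a = 1;\nexport const b = 2;", null],
  mappings: "",
};

console.log(embeddedSources(exampleMap)); // one entry: src/main.ts, 2 lines
console.log(findSourceMaps(["package.json", "cli.js", "cli.js.map"])); // [ 'cli.js.map' ]
```

The second helper mirrors the kind of pre-publish check that could catch this class of mistake. With npm itself, the usual controls are the `files` allowlist in package.json or a `*.map` entry in `.npmignore`, and `npm pack --dry-run` lists what would be published before anything is uploaded.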

The Scale of the Leak

The exposed material includes approximately 2,000 files containing over 500,000 lines of TypeScript code behind Anthropic's command-line tool Claude Code. Although the company quickly pulled the affected package, copies had already spread across developer communities on GitHub and other platforms.

"This isn't just embarrassing—it's potentially damaging," says cybersecurity analyst Mark Chen. "When core AI algorithms get exposed this way, it gives competitors an unfair advantage and could compromise user security."

Unexpected Revelations in the Code

While the leak represents a significant security failure, it also revealed some surprising features Anthropic had been developing in secret:

- BUDDY Project: A system that generates unique pixel-art "cyber pets" for developers. These digital companions adapt their personalities (including sarcasm levels) based on user behavior.

- KAIROS Function: An experimental "always-on" mode where the AI appears to "dream" at night—processing daily interactions to improve its understanding of users over time.

"The creativity here is impressive," notes AI researcher Dr. Elena Rodriguez. "But it makes you wonder—if they can build such advanced features, how did they miss such basic security protocols?"

Industry-Wide Implications

The incident has sparked renewed debate about safety standards in AI development. Anthropic, which positions itself as a leader in responsible AI, now faces tough questions about its internal processes.

Key concerns include:

- The vulnerability of AI systems to human error

- The challenge of balancing rapid innovation with security

- Potential risks when AI agents gain system-level access

As one anonymous developer put it: "This shows that even the smartest AI companies can make dumb mistakes. Maybe we need machines checking our human errors now too."

Key Points:

- Security Breach: 500k+ lines of proprietary Claude Code source exposed through a .map file oversight

- Hidden Features: Leak revealed unreleased projects, including digital pets and a 'dreaming' AI mode

- Industry Impact: Incident raises concerns about safety practices in fast-moving AI sector

- Response: Code already circulating despite Anthropic's attempts to contain leak