Claude Code Leak: How a Simple Mistake Exposed AI's Dirty Secret

When AI Security Falls to Human Error

In a twist that reads like tech industry satire, Anthropic's Claude Code, an advanced AI coding assistant, had its source code exposed through one of the oldest mistakes in software development: someone forgot to check what went into the production package.

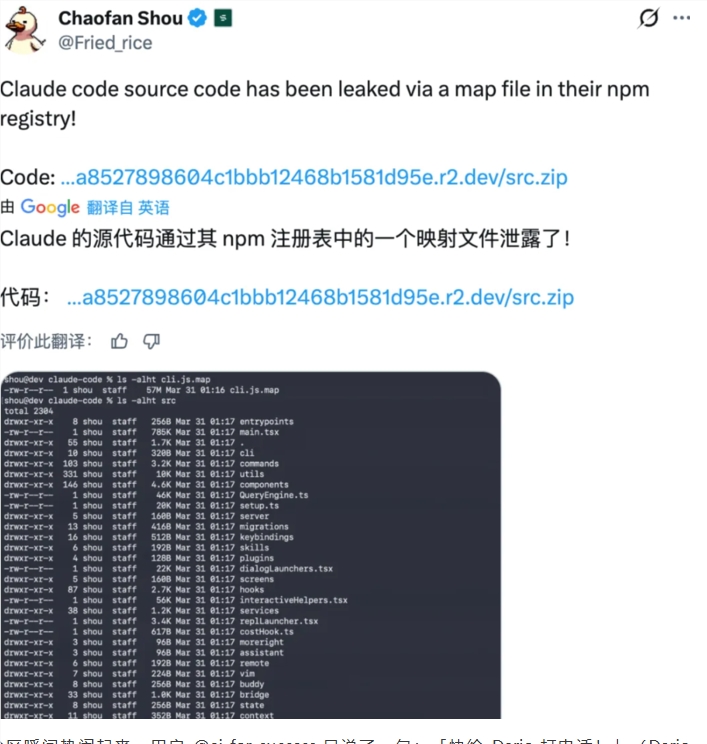

The Leak That Shouldn't Have Happened

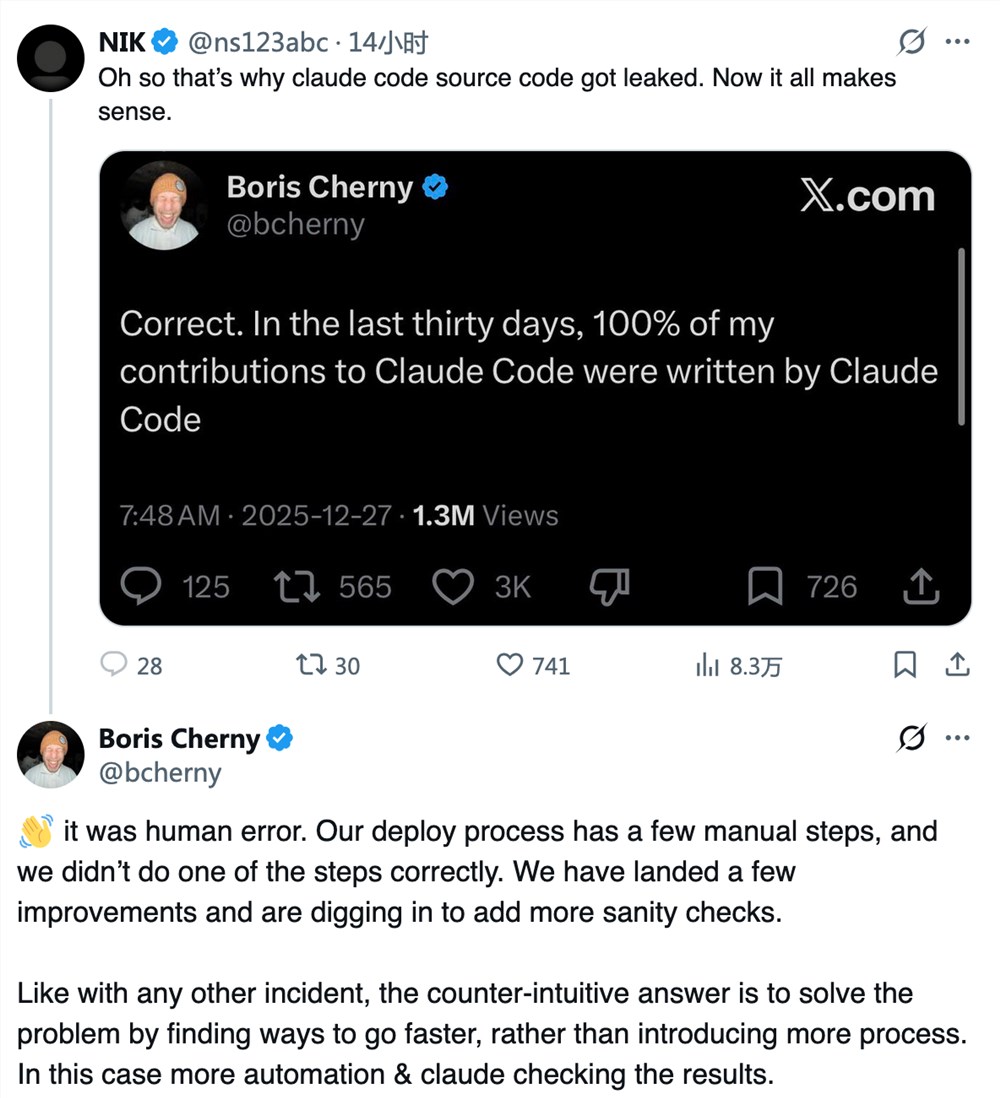

Boris Cherny, a core developer at Anthropic, confirmed on April 1st (though this was no joke) that the leak resulted from an unobfuscated MAP file (a source map) being included in the deployed package. Source maps link shipped, minified code back to the original source files, so this one essentially provided a treasure map to Claude Code's internal architecture, allowing any curious developer to reconstruct its internals.
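To see why a stray source map matters, here is a minimal sketch of how original files can be read back out of one. It assumes the map follows the standard Source Map v3 JSON layout and embeds its sources in the optional `sourcesContent` field; the article does not say whether the leaked file did. The `recover_sources` helper and the example map are hypothetical and hand-written, not taken from any real package.

```python
import json


def recover_sources(map_text: str) -> dict[str, str]:
    """Return {original_path: original_source} for entries that embed their source."""
    source_map = json.loads(map_text)
    sources = source_map.get("sources", [])
    # "sourcesContent" is optional in Source Map v3; entries may also be null.
    contents = source_map.get("sourcesContent") or []
    recovered = {}
    for path, content in zip(sources, contents):
        if content is not None:
            recovered[path] = content
    return recovered


# Hypothetical example map, written by hand for illustration only.
example_map = json.dumps({
    "version": 3,
    "file": "cli.js",
    "sources": ["src/main.ts", "src/util.ts"],
    "sourcesContent": ["export const main = () => 1;\n", None],
    "names": [],
    "mappings": "",
})

for path, source in recover_sources(example_map).items():
    print(path, "->", len(source), "characters of original source")
```

When `sourcesContent` is absent, a map still reveals original file paths and identifier names, which is often enough to guide reverse engineering of the minified bundle.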

"It was like shipping a car with the blueprints taped to the windshield," one security expert quipped when we reached out for comment.

Damage Control Mode

The aftermath saw Anthropic scrambling:

- GitHub purge: Over 8,100 repositories containing leaked code received DMCA takedown notices

- Process overhaul: Manual deployment steps are being replaced with automated checks (ironically, using Claude Code itself)

- Cultural shift: Moving from blame culture to systemic solutions

"We're treating this as what it is - not an individual's failure but a system's weakness," Cherny explained. "The solution isn't more checklists; it's removing human uncertainty through better automation."
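As an illustration of the kind of automated check described above, the following sketch scans a build directory for source map files before anything is published. It is not Anthropic's actual tooling, which the article does not describe; the `find_forbidden_files` function, the `FORBIDDEN_SUFFIXES` list, and the directory layout are all assumptions made for the example.

```python
import tempfile
from pathlib import Path

# Assumed policy for this sketch: no source maps may ship in the package.
FORBIDDEN_SUFFIXES = (".map",)


def find_forbidden_files(package_dir: str) -> list[str]:
    """Return sorted relative paths of files that must not be published."""
    root = Path(package_dir)
    return sorted(
        p.relative_to(root).as_posix()
        for p in root.rglob("*")
        if p.is_file() and p.name.endswith(FORBIDDEN_SUFFIXES)
    )


# Demo on a throwaway directory that mimics a build output.
with tempfile.TemporaryDirectory() as build_dir:
    dist = Path(build_dir) / "dist"
    dist.mkdir()
    (dist / "cli.js").write_text("console.log('hi');\n")
    (dist / "cli.js.map").write_text("{}")

    offenders = find_forbidden_files(build_dir)
    if offenders:
        print("Refusing to publish; forbidden files found:", offenders)
    else:
        print("Package contents look clean.")
```

In a release pipeline, a check like this would typically exit with a non-zero status when the list is non-empty, so the publish step cannot run until the offending files are removed.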

The Bigger Picture: AI's Deployment Blind Spot

The incident exposes an uncomfortable truth in AI development: while companies race to build increasingly sophisticated models, fundamental engineering practices sometimes lag behind. Nor is it the first time: OpenAI faced similar challenges in its early days.

For developers, the leak became an impromptu masterclass in AI architecture. "It's like getting the recipe for Coca-Cola," said one programmer who asked to remain anonymous. "Except in this case, Coke accidentally mailed it to everyone."

But beyond the short-term excitement lies a serious warning. As companies push toward AGI (Artificial General Intelligence), basic security hygiene can't become collateral damage in the rush to innovate.

Key Points:

- Root cause: Unobfuscated MAP file included in production deployment

- Response: Mass DMCA takedowns and deployment process automation

- Irony: AI coding assistant compromised by basic deployment mistake

- Industry trend: Highlights recurring tension between rapid iteration and security in AI development