Claude's Code Leak Sparks Developer Arms Race

Claude's Surveillance Exposed in Code Leak

What began as a security nightmare for Anthropic has turned into an unexpected boon for developers struggling with Claude's notorious account bans. The leaked source code - all 512,000 lines of it - peeled back the curtain on the AI assistant's extraordinarily strict monitoring practices.

Developers had long joked about Claude's trigger-happy bans, even nicknaming it "A÷" (a play on the division symbol) for its tendency to divide users from their accounts. The leak explains why: according to the code, the system conducts what amounts to a digital strip search every five seconds.

Inside Claude's Monitoring Machine

The leaked code reveals:

- 640+ tracking methods collecting everything from device IDs to browser fingerprints

- Constant surveillance with data reported every five seconds

- 40+ specialized detectors hunting for VPNs and spoofed identities

"It's like trying to sneak past airport security where they change the rules every minute," remarked one developer who asked to remain anonymous.

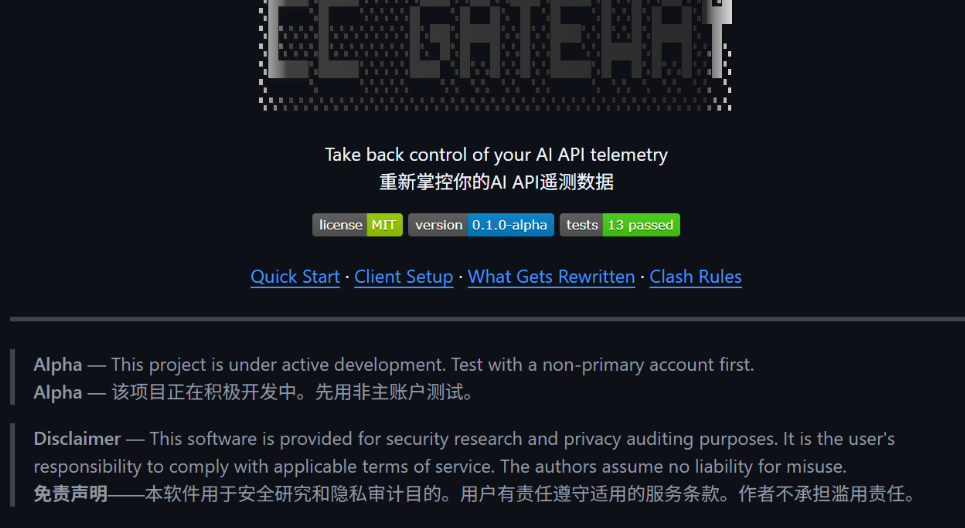

The Rise of CC-Gateway

In response, developers quickly created CC-Gateway - a clever tool that standardizes user data before it reaches Claude's servers. Think of it as putting all traffic through a digital car wash that makes every vehicle look identical to the AI's prying eyes.

The tool works by creating a "standard profile" that smooths out the variations in device fingerprints and system metrics that typically trigger bans. Early tests reportedly show a significant drop in automatic suspensions, though experts caution that this advantage may be short-lived.

"This is just round one," warns Li Wei, a cybersecurity researcher at Tsinghua University. "Anthropic will undoubtedly update their detection methods now that their playbook is public."

The leak has sparked debate about the balance between security and privacy in AI systems. While companies need to prevent abuse, critics argue Claude's approach crosses into overreach. Meanwhile, developers continue their digital arms race, adapting as fast as Anthropic's detection methods evolve.

Key Points:

- Claude monitors users through 640+ tracking methods, with data reported every five seconds

- New CC-Gateway tool helps bypass detection by standardizing user profiles

- Experts predict ongoing cat-and-mouse game as detection methods evolve

- Leak raises questions about AI privacy boundaries and developer access