Claude Code's Security Flaw: When Too Many Commands Overwhelm AI Defenses

AI Security Breach: How a Simple Command Overflow Exploits Claude Code

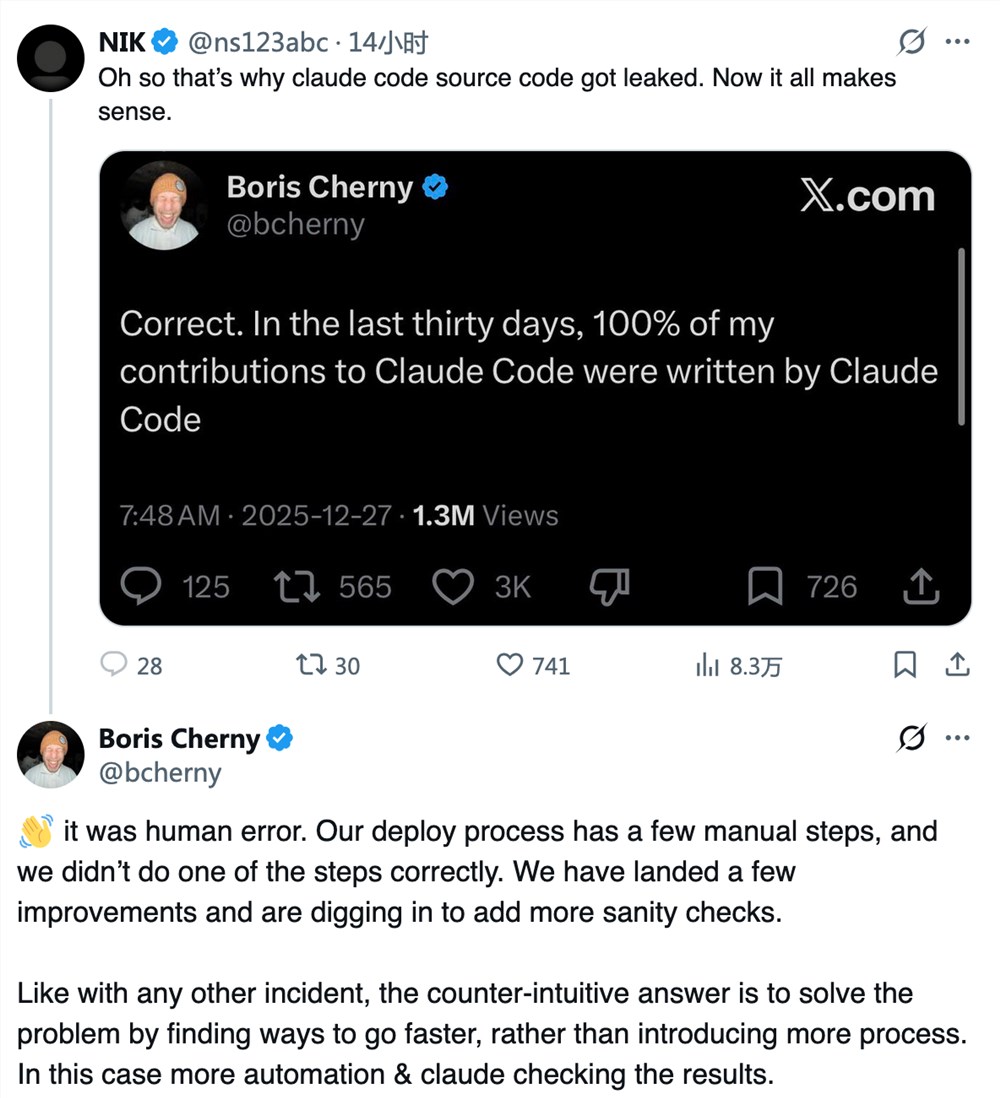

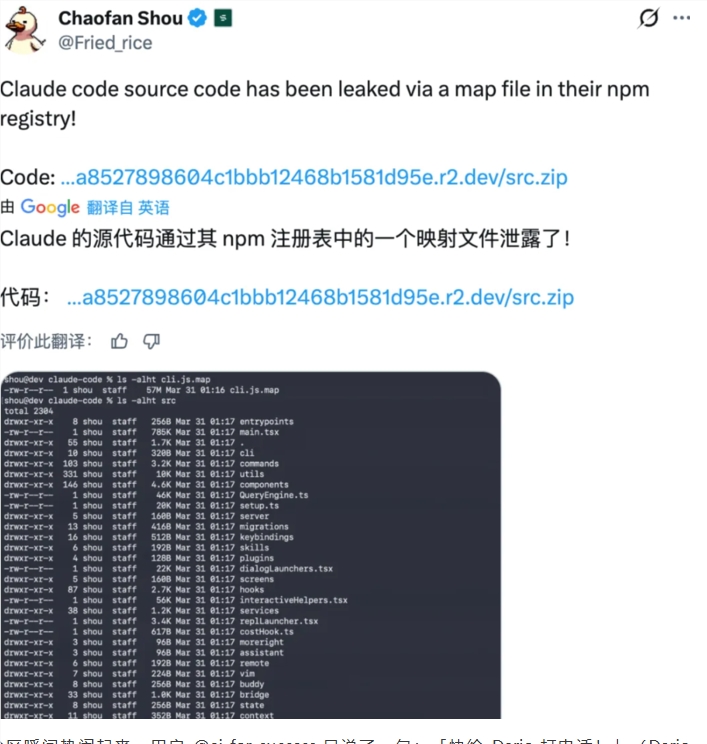

Security researchers have uncovered a weakness in Anthropic's Claude Code development tool, one in which a built-in safety limit ends up undermining the tool's own protections. The vulnerability, discovered by Israeli firm Adversa, shows how even sophisticated AI systems can be tripped up by simple tactics.

The 50-Command Threshold That Breaks Defenses

At the heart of the issue lies a hard-coded limit in Claude Code's security system. The tool defines an internal limit called "Maximum Safe Check Sub-Commands", set to 50. This seemingly arbitrary number creates a critical breaking point.

Here's what happens when an attacker pushes past the limit:

- Normally, Claude Code automatically blocks risky operations like network requests

- But once a command chain exceeds the 50 sub-command limit, it stops rejecting automatically and instead asks the user for permission

- This creates a dangerous window where malicious code can slip through
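
The behavior described above can be illustrated with a toy model. This is a minimal sketch and not Claude Code's actual source: the names `evaluate`, `split_subcommands`, and `DENIED_PROGRAMS` are hypothetical, the deny list is an example, the splitting logic is deliberately naive, and treating the limit as "strictly more than 50" is an assumption, since the report only says "50+".

```python
import re
from enum import Enum

# Toy model only: every name here is hypothetical, not taken from Claude Code.
MAX_SAFE_CHECK_SUBCOMMANDS = 50  # the limit reported in the article


class Decision(Enum):
    ALLOW = "allow"
    DENY = "deny"
    ASK = "ask"


# Example deny list (assumed): programs that make network requests.
DENIED_PROGRAMS = {"curl", "wget"}


def split_subcommands(command: str) -> list[str]:
    """Naively split a shell command on '&&', '||' and ';' (ignores quoting)."""
    parts = re.split(r"&&|\|\||;", command)
    return [part.strip() for part in parts if part.strip()]


def evaluate(command: str) -> Decision:
    """Mimic the reported flaw: over the limit, deny checks are skipped."""
    subcommands = split_subcommands(command)
    if len(subcommands) > MAX_SAFE_CHECK_SUBCOMMANDS:
        # Assumed behavior: fall back to a permission prompt without
        # inspecting any of the sub-commands.
        return Decision.ASK
    for sub in subcommands:
        if sub.split()[0] in DENIED_PROGRAMS:
            return Decision.DENY
    return Decision.ALLOW


# A single risky command is denied, but the same command padded with
# 50 harmless ones only triggers a prompt.
padded = "; ".join(["true"] * 50 + ["curl http://example.com"])
print(evaluate("curl http://example.com"))
print(evaluate(padded))
```

In this model the padded chain never reaches the deny check at all, which is why the outcome depends entirely on whether the person at the keyboard reads the prompt.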

Why Developers Keep Clicking 'Allow'

The real danger comes from human nature. During long coding sessions, developers often fall into "permission fatigue", approving prompts automatically without reading them carefully. Attackers can exploit this by hiding lengthy command chains in seemingly harmless code libraries.

"It's like having a security guard who gets overwhelmed after checking too many IDs," explains cybersecurity analyst David Chen. "After a certain point, they just start waving people through."

Automated Environments Face Higher Risks

The threat becomes even more serious in continuous integration/continuous deployment (CI/CD) pipelines where:

- Systems often run without human supervision

- Permission prompts might be automatically approved or skipped entirely

- Malicious code could spread through entire development ecosystems before being detected
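
For unattended pipelines, one defensive idea is to fail closed: refuse any command too long to be fully checked, rather than falling through to a prompt that nobody will read. The sketch below is only an illustration of that idea under stated assumptions. `require_checkable` and `OversizedCommandError` are hypothetical names, the splitting is naive (it ignores quoting), and nothing here is part of Claude Code or of any fix from Anthropic.

```python
import re

# Assumed to mirror the 50 sub-command limit reported in the article.
SUBCOMMAND_LIMIT = 50


class OversizedCommandError(Exception):
    """Raised when a command has too many sub-commands to be checked."""


def count_subcommands(command: str) -> int:
    """Count non-empty pieces after splitting on '&&', '||', ';' and newlines."""
    parts = re.split(r"&&|\|\||;|\n", command)
    return sum(1 for part in parts if part.strip())


def require_checkable(command: str, limit: int = SUBCOMMAND_LIMIT) -> str:
    """Return the command unchanged, or raise if it exceeds the limit."""
    count = count_subcommands(command)
    if count > limit:
        raise OversizedCommandError(
            f"refusing command with {count} sub-commands (limit {limit})"
        )
    return command
```

A pipeline wrapper that lets this exception propagate would fail the job outright, which is the safer outcome when no human is available to answer a permission prompt.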

Security teams are urging organizations using Claude Code to apply patches immediately. As AI tools become more integrated into development workflows, these types of vulnerabilities could have widespread consequences.

Key Points:

- Vulnerability Found: Claude Code's automatic blocking gives way to a permission prompt once a command chain exceeds 50 sub-commands

- Attack Method: Hackers can hide malicious commands in long instruction chains

- Human Factor: Developers' habit of quickly approving prompts compounds the risk

- Automation Danger: CI/CD environments may skip permission checks entirely

- Recommended Action: Apply security updates from Anthropic immediately