Anthropic's Conway: The AI Assistant That Never Sleeps

Anthropic's Ambitious Play: Conway Redefines AI Assistance

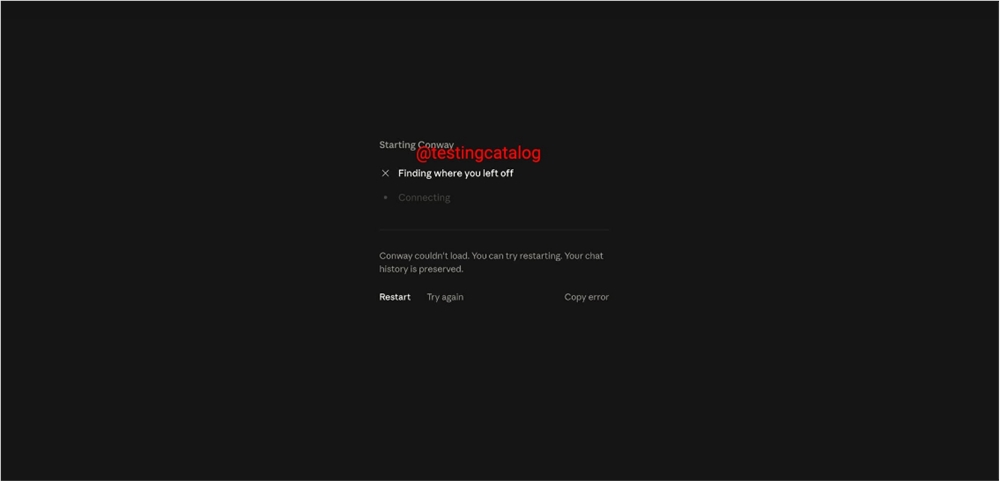

In a move that could reshape how we interact with artificial intelligence, Anthropic is quietly developing Conway - a persistent agent environment designed to keep Claude working around the clock. Unlike traditional chatbots, which wait passively for user prompts, Conway creates what the company describes as "an always-on intelligent environment" where Claude can operate continuously.

Breaking Free From the Chatbox

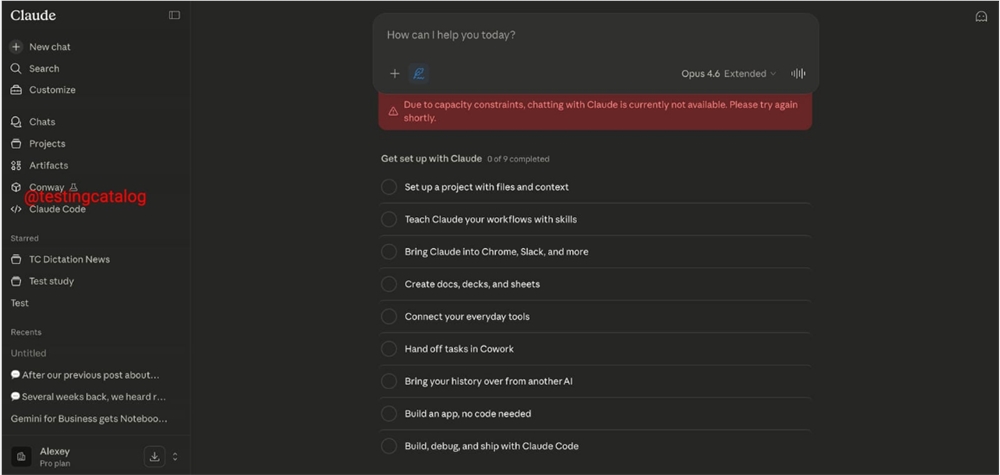

Conway's most striking departure from current AI interfaces is its use of independent UI instances. Instead of being confined to chat windows, Claude will operate in what Anthropic calls "agent workspaces" - self-contained environments where it can manage multiple tasks simultaneously. These workspaces allow Claude to operate a browser directly and to integrate with external connectors, potentially turning it into a powerful automation hub.

"This isn't just about better conversations," explains one industry analyst familiar with the project. "Conway represents Anthropic's vision for Claude to become a true digital colleague - one that remembers context between sessions and picks up where it left off."

Always On Call: Webhook Integration

Conway introduces webhook support, which lets external services trigger Claude automatically by sending it a notification when an event occurs. Picture this: your project management tool could ping Conway when deadlines approach, or your smart home system might alert it when unusual activity is detected. The potential applications range from business automation to personal productivity.
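
To make the idea concrete, here is a minimal sketch of how a webhook receiver typically works. It does not show Conway's actual interface, which has not been published. The shared secret, the event names, and the `handle_webhook` function are all hypothetical, chosen only to mirror the examples above. The general pattern is common to most webhook systems: verify that the request really came from the expected sender, then turn the event into a task for the agent.

```python
import hashlib
import hmac
import json

# Hypothetical shared secret; a real service would issue its own.
SECRET = b"example-secret"


def sign(body: bytes, secret: bytes = SECRET) -> str:
    """Return the hex HMAC-SHA256 of the raw request body."""
    return hmac.new(secret, body, hashlib.sha256).hexdigest()


def handle_webhook(body: bytes, signature: str) -> dict:
    """Verify the sender, then map the event to a task for the agent."""
    # Constant-time comparison avoids leaking signature bytes via timing.
    if not hmac.compare_digest(sign(body), signature):
        return {"status": 401, "task": None}

    event = json.loads(body)

    # Hypothetical event types, mirroring the examples in the text.
    prompts = {
        "deadline.approaching": "Summarize open work for project {project}.",
        "home.unusual_activity": "Review the alert from sensor {sensor}.",
    }
    template = prompts.get(event.get("type"))
    if template is None:
        # Unknown event: acknowledge it, but create no task.
        return {"status": 202, "task": None}

    return {"status": 200, "task": template.format(**event.get("data", {}))}


if __name__ == "__main__":
    body = json.dumps(
        {"type": "deadline.approaching", "data": {"project": "Apollo"}}
    ).encode()
    print(handle_webhook(body, sign(body)))
```

In this sketch the sender signs the request body with a shared secret, and the receiver rejects anything whose signature does not match. An always-on assistant would need some check of this kind so that arbitrary parties cannot trigger it.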

An Ecosystem in the Making

Perhaps most exciting for developers is Conway's upcoming extension system. Anthropic plans to release the CNW ZIP standard, allowing third parties to build custom tools, interface elements, and context processors. Early documentation suggests these extensions could range from specialized data processors to entirely new interface tabs - effectively creating an app store for Claude capabilities.

"The extension framework could be Conway's killer feature," notes tech journalist Maya Chen. "If executed well, it might give Claude an edge over competitors by letting users tailor exactly how their AI assistant works."

The Bigger Picture

Conway signals Anthropic's ambition to move beyond conversational AI into persistent assistance. By combining browser automation, event-triggered responses, and deeper integration with Claude Code (the company's agentic coding tool), Conway aims to handle complex, multi-step tasks without constant user supervision.

Industry observers are already drawing comparisons to OpenAI's rumored OpenClaw project, suggesting we're entering an era of "always-on" AI assistants. As one VC investor put it: "The assistant that sleeps when you do might soon seem as quaint as dial-up internet."

Key Points:

- Persistent operation: Conway keeps Claude active between sessions

- Workspace model: Independent UI instances replace traditional chat interfaces

- Webhook triggers: External events can activate Claude's capabilities automatically

- Extension ecosystem: CNW ZIP standard will let developers build custom tools

- Code integration: Deeper integration with Claude Code supports complex, multi-step tasks