AI Startup Secures $13M to Fight Rising Deepfake Threats

The Arms Race Against Digital Deception

In a significant move against the rising tide of synthetic media threats, Resemble AI has closed a $13 million funding round. The round brings the Toronto- and San Francisco-based company's total funding to $25 million as it expands its arsenal against increasingly sophisticated deepfakes.

Cutting-Edge Detection Technology

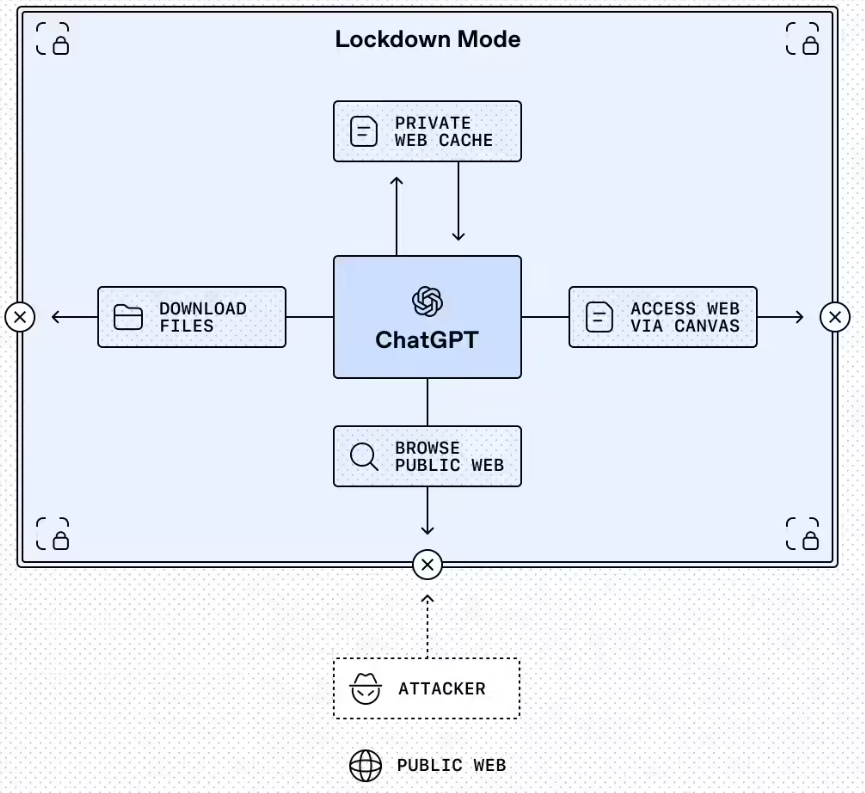

The startup's secret weapons? Detect-3B Omni, its multilingual detection model with a claimed 98% accuracy across more than 40 languages, and Intelligence, its contextual analysis platform. Together, these tools scan audio, video, images, and text in real time, hunting for telltale signs of digital manipulation.

"We're not just flagging fakes," the company's materials explain. "We're helping users understand why content was created and whether it can be trusted."

The Staggering Cost of Digital Deception

The funding comes as deepfake-related fraud reaches alarming levels. Resemble AI estimates that businesses lost $1.56 billion to synthetic media scams this year alone. Projections suggest that, without effective countermeasures, U.S. losses could balloon to $40 billion by 2027.

The threats span industries: financial institutions face forged voice approvals for wire transfers; corporations battle executive impersonation scams; even government officials contend with fabricated statements.

From Cloning to Cybersecurity

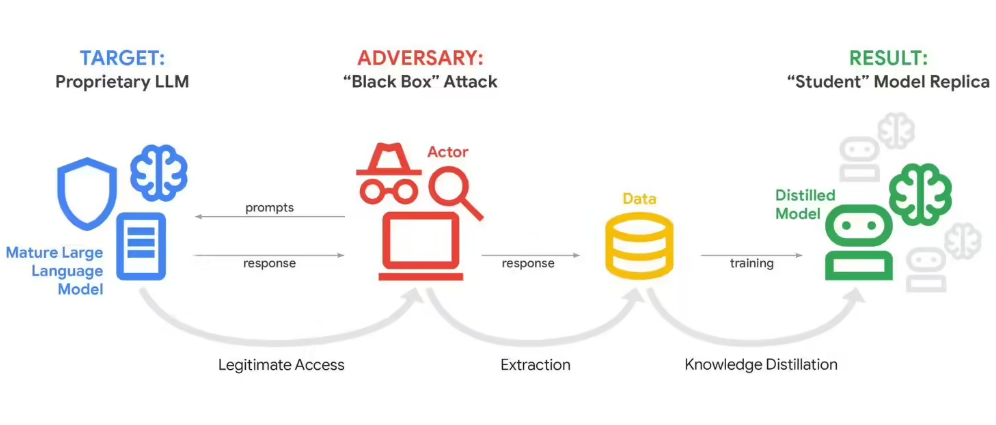

Founded in 2018 as a voice cloning service, Resemble AI pivoted its expertise toward security as synthetic media threats escalated. Its evolution mirrors the broader tech industry's scramble to develop defenses against the very generative AI capabilities it helped create.

The latest investment round includes backing from Google's AI Futures Fund and Okta Ventures, among others, signaling strong industry confidence in the growing importance of detection technology.

Key Points:

- Funding milestone: $13M raised ($25M total) fuels global expansion

- Detection edge: Detect-3B Omni offers multilingual deepfake spotting, with a claimed 98% accuracy across 40+ languages

- Fraud forecast: U.S. losses from synthetic media scams could reach $40B by 2027