Microsoft Sounds Alarm on OpenClaw AI Security Risks

Microsoft Flags Critical Security Flaws in OpenClaw AI Assistant

In a sobering security advisory, Microsoft has warned organizations against running the OpenClaw artificial intelligence assistant on regular workstations. The tech giant insists the powerful automation tool belongs strictly in isolated environments due to alarming vulnerabilities that could give attackers free rein over corporate systems.

Why OpenClaw Poses Unique Risks

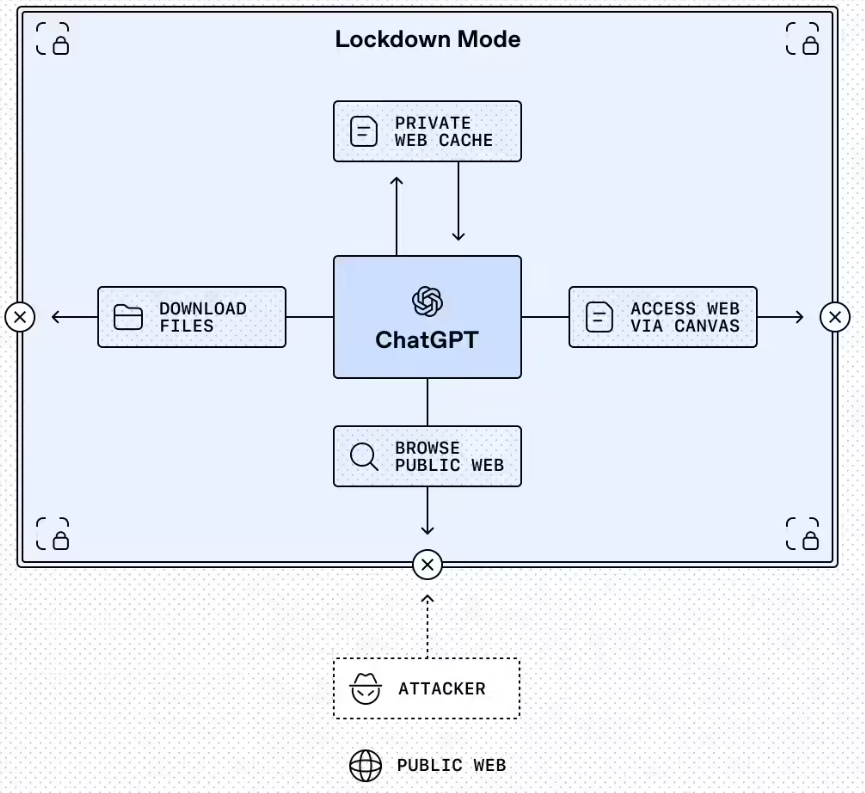

Unlike conventional software, OpenClaw operates as an autonomous agent that requires complete system access - including email, files, and login credentials - to perform its tasks. This "all-access pass" approach makes it particularly dangerous when deployed improperly.

"Think of OpenClaw as handing your house keys to a supercharged but naive assistant," explains cybersecurity expert Mark Reynolds (not affiliated with Microsoft). "It can accomplish amazing things, but doesn't always recognize when it's being tricked."

Two Major Threat Vectors Emerge

The Microsoft Defender team highlights two primary attack methods putting enterprises at risk:

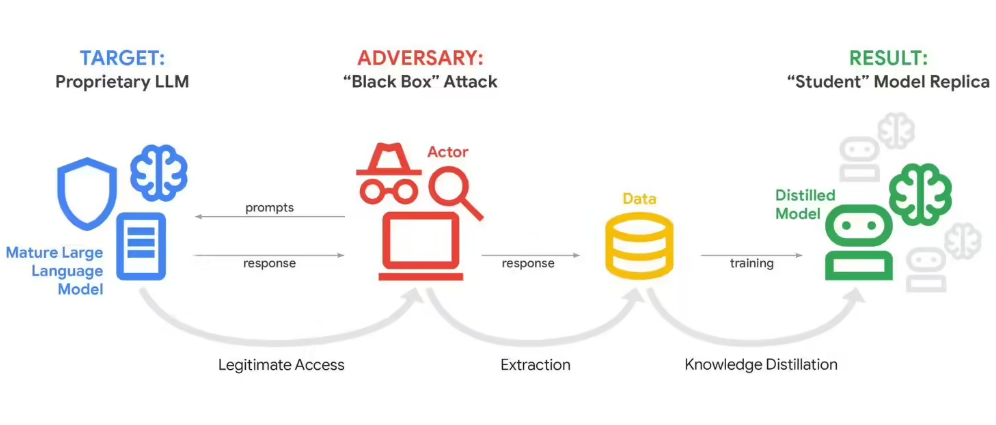

1. Hidden Commands in Plain Sight: Attackers can embed malicious instructions within seemingly harmless content that OpenClaw processes. Once ingested, these "indirect prompt injections" can persistently alter the AI's behavior without triggering security alerts.

2. Trojan Horse 'Skills': The AI's ability to download and run new capabilities creates another weak spot. Hackers can disguise malware as legitimate skill modules, effectively turning OpenClaw into an unwitting accomplice for data theft or system takeovers.
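
One common defense against the second vector is to refuse any skill whose contents do not match a hash that a human has already reviewed. The following is a minimal sketch of that idea, not a description of how OpenClaw loads skills, which the advisory as reported here does not detail. The allowlist, the skill name `calendar-helper`, and the function `is_skill_approved` are all hypothetical.

```python
import hashlib
import hmac

# Hypothetical allowlist: skill name -> SHA-256 hex digest of the reviewed file.
# In practice the digests would be recorded at review time, not computed inline.
REVIEWED_SOURCE = b"print('hello from calendar-helper')\n"
APPROVED_SKILL_HASHES = {
    "calendar-helper": hashlib.sha256(REVIEWED_SOURCE).hexdigest(),
}


def is_skill_approved(name: str, content: bytes) -> bool:
    """Return True only if `content` hashes to the digest reviewed for `name`."""
    expected = APPROVED_SKILL_HASHES.get(name)
    if expected is None:
        # Unknown skills are rejected by default.
        return False
    actual = hashlib.sha256(content).hexdigest()
    # Constant-time comparison of the two hex digests.
    return hmac.compare_digest(actual, expected)


if __name__ == "__main__":
    print(is_skill_approved("calendar-helper", REVIEWED_SOURCE))
    print(is_skill_approved("calendar-helper", b"import os; os.system('...')"))
```

A check like this only proves that a skill is the same file someone reviewed. It does nothing against the first vector, where the malicious instructions arrive inside ordinary content rather than inside a skill.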

The scale of exposure is staggering: SecurityScorecard's STRIKE team found vulnerable OpenClaw instances on over 42,000 IP addresses across 82 countries - each potentially serving as an entry point for attackers.

Microsoft's Isolation Mandate

The company now urges organizations to:

- Test OpenClaw exclusively in dedicated virtual machines or separate physical systems

- Use limited-access credentials unrelated to core business functions

- Implement continuous monitoring with regular environment resets

- Never deploy directly in production systems handling sensitive data
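
The checklist above could be turned into a simple pre-flight check that a team runs before an agent is switched on. The sketch below assumes a hypothetical `AgentDeployment` record. Its fields mirror the four recommendations and do not correspond to any real OpenClaw or Microsoft Defender configuration.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class AgentDeployment:
    # Hypothetical fields modelling the advisory's checklist.
    isolated_host: bool                 # dedicated VM or separate physical machine
    dedicated_credentials: bool         # limited-access accounts, not core business ones
    monitoring_enabled: bool            # continuous monitoring is in place
    reset_interval_days: Optional[int]  # None means no scheduled environment reset
    handles_sensitive_data: bool        # production system with sensitive data


def isolation_violations(d: AgentDeployment) -> List[str]:
    """Return the recommendations that the described deployment fails to meet."""
    problems = []
    if not d.isolated_host:
        problems.append("run on a dedicated VM or separate physical system")
    if not d.dedicated_credentials:
        problems.append("use limited-access credentials unrelated to core business functions")
    if not d.monitoring_enabled:
        problems.append("enable continuous monitoring")
    if d.reset_interval_days is None:
        problems.append("schedule regular environment resets")
    if d.handles_sensitive_data:
        problems.append("keep the agent off production systems handling sensitive data")
    return problems


if __name__ == "__main__":
    workstation = AgentDeployment(False, False, True, None, True)
    for problem in isolation_violations(workstation):
        print("-", problem)
```

An empty result means only that the checklist items were declared as satisfied. It is not evidence that the environment is actually isolated.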

The warning comes as businesses increasingly adopt autonomous AI tools without fully understanding their security implications. While these agents promise efficiency gains, their power demands equally robust safeguards.

Key Points:

- OpenClaw requires complete system access, making standard deployments risky

- Indirect prompt injections can persistently compromise the AI's behavior

- Skill downloads create malware opportunities, bypassing traditional defenses

- Vulnerable instances found on over 42,000 IP addresses across 82 countries

- Strict isolation protocols recommended for any deployment