Google Gemini Hit by Massive AI Model Hack Attempt

Google's AI Under Siege: How Hackers Targeted Gemini

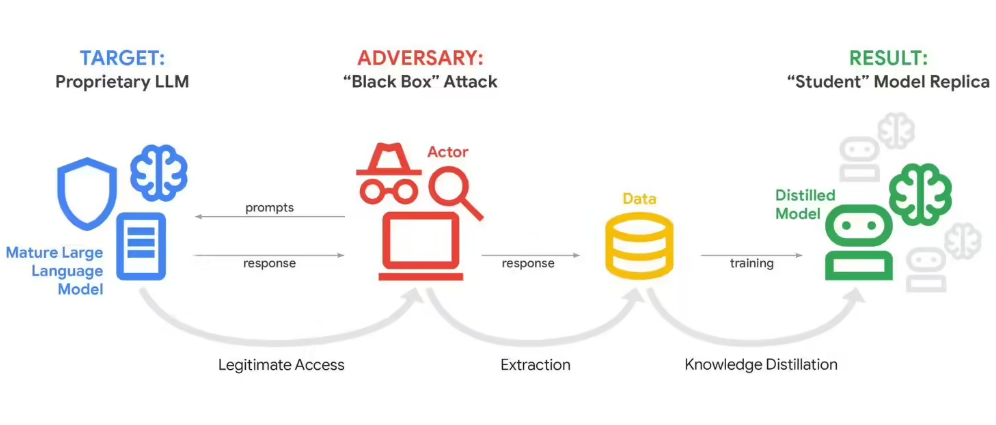

In a startling disclosure, Google said its flagship Gemini AI chatbot recently weathered a large-scale extraction campaign. Attackers sent the system more than 100,000 carefully crafted prompts in what security specialists call a "model distillation attack": an attempt to copy the AI's behavior by collecting its answers to a relentless stream of questions and training a rival model on them.

The Anatomy of an AI Heist

The attacks, detected February 12th, weren't random probing but highly coordinated efforts to map Gemini's decision-making pathways. "Imagine someone whispering thousands of questions to your thoughts," explains John Hottelquist, Google's threat intelligence chief. "Each query helps them sketch the contours of your mind."
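To make the idea concrete, here is a toy sketch of distillation in Python. It is not a reconstruction of the attack on Gemini: the `teacher` function, its hidden parameters, and the helper names are all hypothetical, and a straight-line "model" stands in for a system that is vastly more complex. It only shows the principle that enough question-and-answer pairs let an outsider fit a copy without ever seeing the original's internals.

```python
import random


def teacher(x: float) -> float:
    """Hypothetical stand-in for a proprietary model.

    The attacker can call it but cannot read the values inside.
    """
    hidden_weight, hidden_bias = 2.5, -1.0  # assumed "secret" parameters
    return hidden_weight * x + hidden_bias


def collect_pairs(n_queries: int, seed: int = 0) -> list[tuple[float, float]]:
    """Send many queries and record each (question, answer) pair."""
    rng = random.Random(seed)
    queries = [rng.uniform(-10, 10) for _ in range(n_queries)]
    return [(x, teacher(x)) for x in queries]


def fit_student(pairs: list[tuple[float, float]]) -> tuple[float, float]:
    """Fit a 'student' line to the recorded answers with least squares."""
    n = len(pairs)
    mean_x = sum(x for x, _ in pairs) / n
    mean_y = sum(y for _, y in pairs) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in pairs)
    var = sum((x - mean_x) ** 2 for x, _ in pairs)
    weight = cov / var
    bias = mean_y - weight * mean_x
    return weight, bias


pairs = collect_pairs(1000)
student_weight, student_bias = fit_student(pairs)
print(f"student weight={student_weight:.3f}, bias={student_bias:.3f}")
```

In this toy case the student recovers the hidden parameters almost exactly. A real language model cannot be copied this cleanly, but the same logic explains why attackers need prompts in such large numbers.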

Commercial rivals appear to be behind most of the attempts, though Google declined to name suspects. The tech giant did confirm that the attackers were spread across multiple global regions and focused on extracting Gemini's prized "reasoning" algorithms - the secret sauce determining how it processes information.

Why This Matters Beyond Google

Hottelquist paints an ominous picture: "We're the canary in this coal mine." As companies pour billions into proprietary AI systems containing sensitive data and trade secrets, such extraction attacks threaten entire industries. Custom business AIs trained on years of internal knowledge could see their competitive advantages slowly siphoned away.

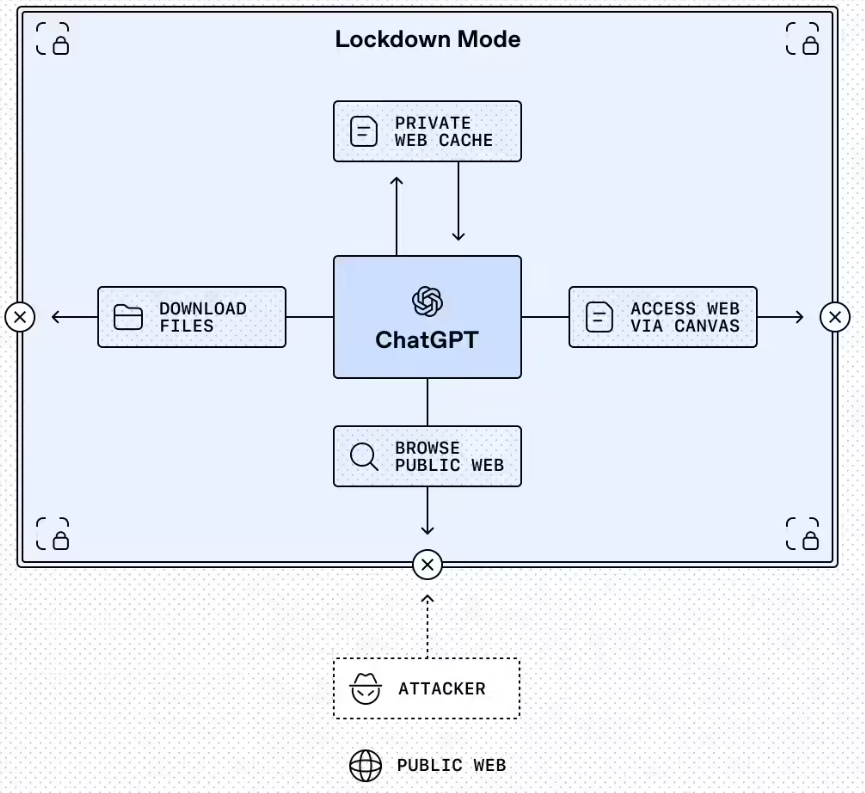

The dilemma? Most commercial AI services must remain somewhat open to function properly. While detection systems exist, completely sealing these digital minds proves nearly impossible without crippling their usefulness.
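Google has not described how its detection works, so the following Python sketch is purely an assumption about one simple approach: counting each client's queries in a sliding time window and flagging unusually heavy users. The class name `QueryVolumeMonitor`, its parameters, and the thresholds are hypothetical.

```python
from collections import defaultdict, deque


class QueryVolumeMonitor:
    """Hypothetical detector: flag clients that exceed a query budget."""

    def __init__(self, max_queries: int, window_seconds: float) -> None:
        self.max_queries = max_queries
        self.window_seconds = window_seconds
        # One queue of recent query timestamps per client.
        self._history: dict[str, deque[float]] = defaultdict(deque)

    def record(self, client_id: str, timestamp: float) -> bool:
        """Record one query; return True if the client is over budget."""
        window = self._history[client_id]
        window.append(timestamp)
        # Drop timestamps that have fallen out of the sliding window.
        while window and timestamp - window[0] > self.window_seconds:
            window.popleft()
        return len(window) > self.max_queries


# Toy thresholds: more than 3 queries within 60 seconds raises a flag.
monitor = QueryVolumeMonitor(max_queries=3, window_seconds=60)
flags = [monitor.record("client-a", t) for t in (0, 10, 20, 30)]
later_flag = monitor.record("client-a", 200)
print(flags, later_flag)
```

The sketch also shows the dilemma in miniature. Set the budget too low and legitimate heavy users get flagged; set it too high, or let attackers spread their queries across many accounts and regions, and the extraction goes unnoticed.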

What Comes Next?

This incident spotlights emerging vulnerabilities as artificial intelligence becomes embedded in business operations. Security teams now race to develop better protections against model theft while balancing accessibility needs.

The stakes couldn't be higher - whoever masters these defenses may determine whether corporate AI remains secure or becomes an open book for determined hackers.

Key Points:

- Unprecedented Scale: More than 100,000 prompts sent in the attack

- Commercial Motives: Competitors likely sought to copy Gemini's capabilities

- Global Threat: Attackers operating across multiple regions

- IP Theft Concerns: Core algorithms worth billions at risk

- Broader Implications: Custom business AIs may be next targets