Xiaomi Exec Warns Against AI Token Price Wars After Anthropic's OpenClaw Block

AI Industry Reckons With Unsustainable Pricing Models

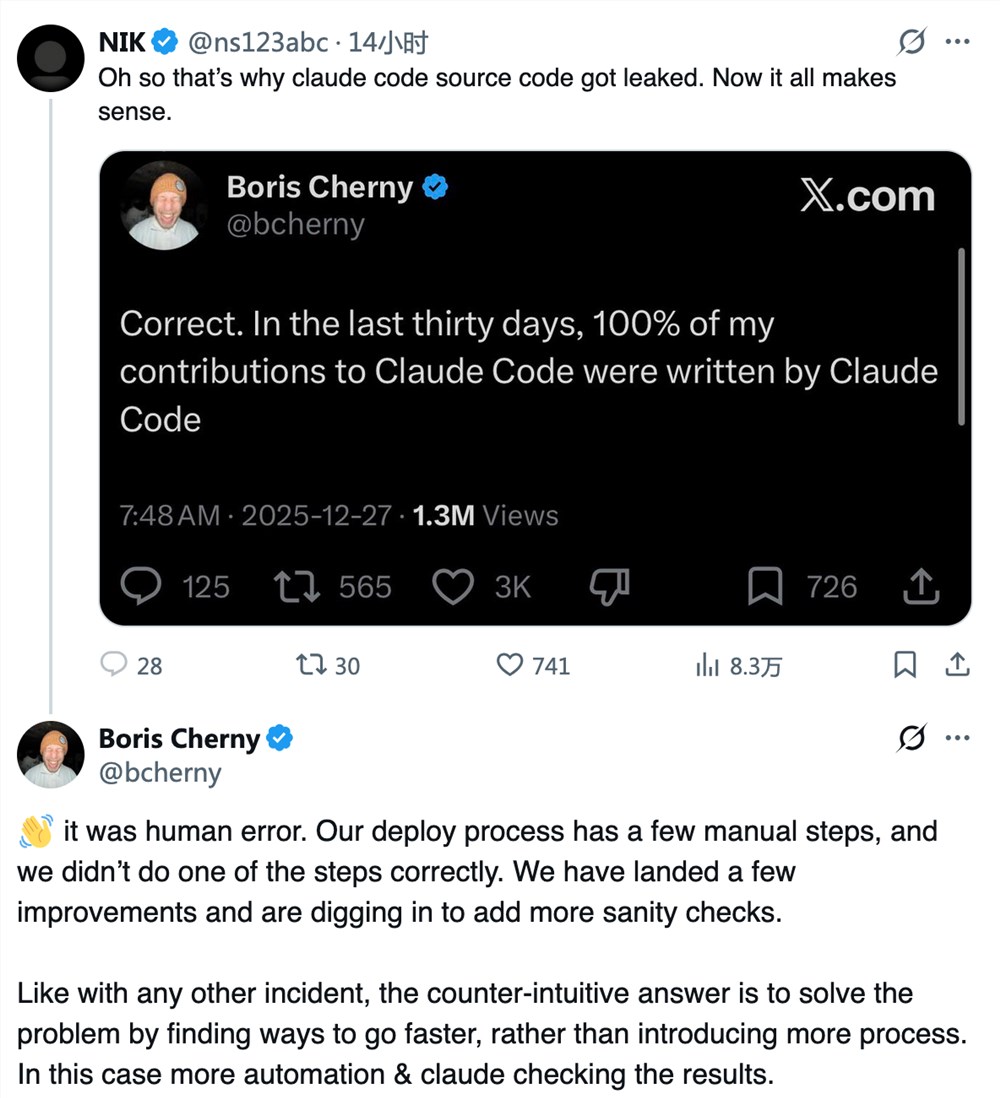

Anthropic's recent decision to block third-party frameworks like OpenClaw from accessing its Claude subscription service has sent shockwaves through the AI community. The move came after some users reportedly paid just $200 while consuming computing resources worth $5,000 - an unsustainable imbalance that forced Anthropic's hand.

The Hidden Costs of Cheap Tokens

Luo Fuli, who leads Xiaomi's MiMo large model team, sees this as a cautionary tale for the industry. "Third-party frameworks often have inefficient context management," she explains. "This can lead to token consumption rates ten times higher than native frameworks."

The financial math becomes alarming when heavy users exploit these inefficiencies. By the reported figures, Anthropic was providing about $5,000 worth of computing power for a $200 subscription, a 25-to-1 gap that no company could sustain long-term.
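
To make the arithmetic concrete, here is a minimal Python sketch using only the figures quoted above. The function names are illustrative, and the last calculation rests on a loud assumption: that the entire $5,000 was inflated by the tenfold overhead Luo describes. The article does not confirm this.

```python
# Figures as reported in the article; none are independently verified.
SUBSCRIPTION_PRICE_USD = 200.0   # what the heavy user reportedly paid
COMPUTE_CONSUMED_USD = 5_000.0   # compute the same user reportedly consumed
FRAMEWORK_OVERHEAD = 10.0        # "ten times higher" token consumption, per Luo


def subsidy_ratio(compute_cost: float, price_paid: float) -> float:
    """Dollars of compute delivered per dollar of subscription revenue."""
    return compute_cost / price_paid


def provider_margin(compute_cost: float, price_paid: float) -> float:
    """Revenue minus compute cost; negative means the provider loses money."""
    return price_paid - compute_cost


def native_equivalent_cost(compute_cost: float, overhead: float) -> float:
    """ASSUMPTION: the whole cost was inflated by `overhead`, so an efficient
    framework would have used compute_cost / overhead for the same work."""
    return compute_cost / overhead


ratio = subsidy_ratio(COMPUTE_CONSUMED_USD, SUBSCRIPTION_PRICE_USD)
margin = provider_margin(COMPUTE_CONSUMED_USD, SUBSCRIPTION_PRICE_USD)
native_cost = native_equivalent_cost(COMPUTE_CONSUMED_USD, FRAMEWORK_OVERHEAD)

print(f"Compute per revenue dollar: {ratio:.0f}x")
print(f"Provider margin on this user: ${margin:,.0f}")
print(f"Hypothetical cost with an efficient framework: ${native_cost:,.0f}")
```

Under that assumption, the same workload would still cost more than the $200 subscription, which suggests that framework efficiency alone would not have closed the gap for the heaviest users.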

Beyond the Price War Mentality

Luo warns competitors against chasing market share through token price cuts alone. "Slashing prices without proper subscription strategies is a trap," she states bluntly. "The future lies in balancing efficient frameworks with high-quality models."

Xiaomi has already taken this approach with MiMo's new pay-as-you-go token plan, which supports third-party access. The pricing model aims to create sustainable economics where both platform providers and developers can thrive.

Industry at a Crossroads

The Anthropic incident highlights growing pains as AI adoption accelerates. With computing demand skyrocketing, companies must develop business models that:

- Fairly compensate platform providers

- Incentivize framework efficiency improvements

- Maintain accessibility for developers

"Short-term cost pressures will push third-party developers to optimize their technology," Luo predicts. "This painful adjustment will ultimately benefit the entire ecosystem's health."

Key Points:

- Resource imbalance: Anthropic blocked OpenClaw after some users reportedly consumed $5,000 in resources while paying $200

- Pricing warning: Xiaomi's Luo cautions against destructive token price wars

- Sustainable path: MiMo's pay-as-you-go model offers an alternative approach

- Industry evolution: Pressure may drive needed efficiency improvements in third-party frameworks