Anthropic's Conway: Claude Gets Its Own Workspace and App Store

Anthropic Takes Claude to the Next Level with Conway

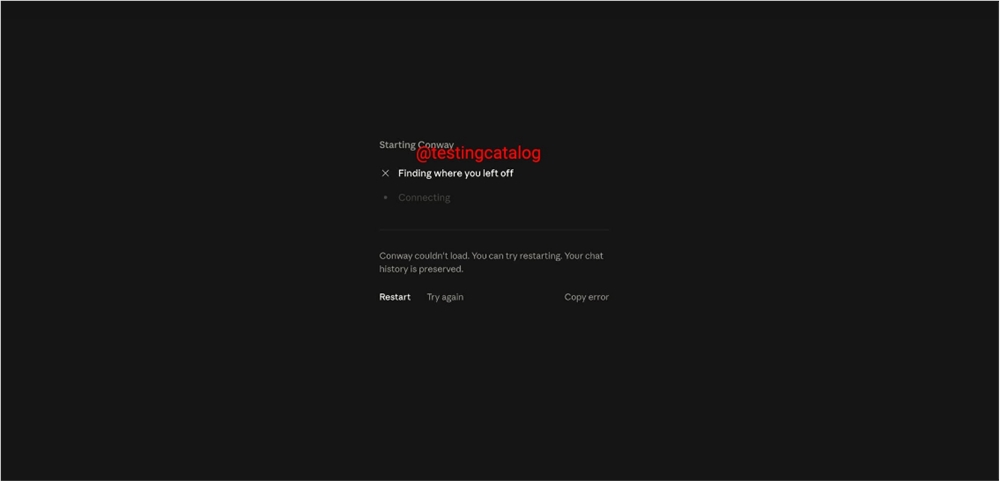

Artificial intelligence research company Anthropic is quietly working on a project that could significantly change how its Claude AI system is used. Codenamed Conway, this persistent agent environment aims to evolve Claude from a conversational partner into something more akin to a digital coworker.

Breaking Free from the Chat Window

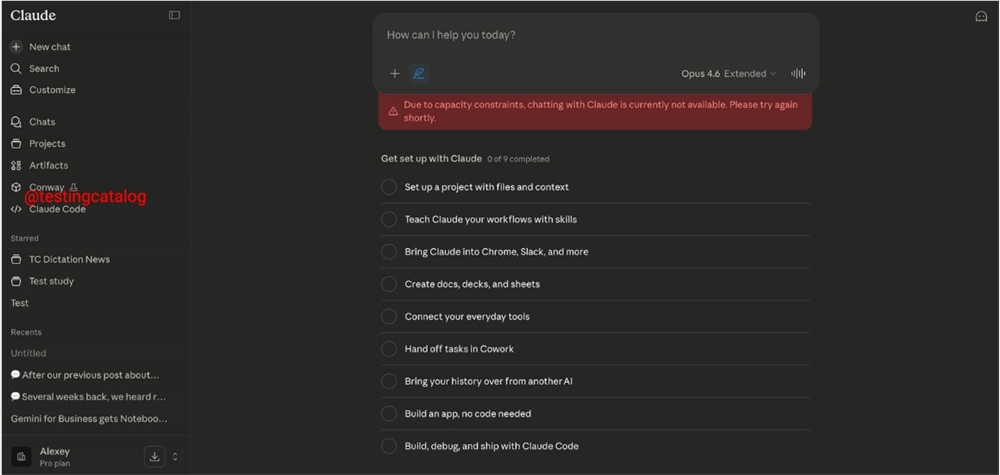

The most striking change? Conway won't be confined to chat interfaces. Instead, it will function as an independent workspace where Claude can operate more autonomously. Imagine an AI that doesn't just respond when pinged but maintains its own persistent environment, complete with browser access and connections to external services.

"This moves us beyond the question-and-answer paradigm," explains one industry observer who asked to remain anonymous. "Conway suggests Anthropic sees Claude becoming more of an active participant than just a responsive tool."

Always On, Always Ready

Key features include:

- Webhook integration allowing external services to trigger Claude's actions

- Native Claude Code functionality for deeper programming tasks (possibly linked to Epitax)

- A forthcoming extension system using the CNW ZIP standard

The extension system stands out in particular: it promises to let developers create custom tools and interface elements, effectively building an ecosystem around Claude. Think app store meets AI assistant.
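To make the webhook idea concrete, here is a minimal Python sketch of how an external service's event might be verified and routed to an agent action. Nothing about Conway's actual interface has been published, so everything here is an assumption made for illustration: the shared secret, the `calendar.invite` event type, and the `sign` and `handle_webhook` helpers are all hypothetical. The signature check uses HMAC-SHA256, a common pattern for webhooks in general, not a confirmed Conway detail.

```python
import hashlib
import hmac
import json

# Hypothetical shared secret; nothing here reflects a real Conway API.
SECRET = b"demo-secret"


def sign(body: bytes, secret: bytes = SECRET) -> str:
    """Return the hex HMAC-SHA256 signature a sender would attach to a payload."""
    return hmac.new(secret, body, hashlib.sha256).hexdigest()


def handle_webhook(body: bytes, signature: str, handlers: dict) -> str:
    """Verify the signature, then route the event to a registered handler."""
    # Constant-time comparison avoids leaking signature details via timing.
    if not hmac.compare_digest(sign(body), signature):
        return "rejected: bad signature"
    event = json.loads(body)
    handler = handlers.get(event.get("type"))
    if handler is None:
        return "ignored: no handler"
    return handler(event)


# Hypothetical event type and handler, for illustration only.
HANDLERS = {
    "calendar.invite": lambda e: f"agent task queued: prepare for {e['title']}",
}

if __name__ == "__main__":
    payload = json.dumps({"type": "calendar.invite", "title": "Q3 review"}).encode()
    print(handle_webhook(payload, sign(payload), HANDLERS))
```

The point of the sketch is the shape of the flow: an outside service sends an event, the receiver checks that it is authentic, and a matching handler kicks off work without anyone typing a prompt.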

Why This Matters

The move positions Anthropic squarely in competition with projects like OpenClaw while pushing the entire field toward "always-on" AI agents. For users, it means Claude could soon handle multi-step workflows without constant prompting: scheduling meetings while researching background information, then drafting follow-up emails, all in one continuous process.

"We're seeing the beginnings of true digital assistants," notes tech analyst Miranda Cho. "Not just tools that answer when called, but partners that maintain context and initiative."

Key Points:

- Workspace, not chatbot: Conway gives Claude its own persistent environment beyond chat windows

- Developer ecosystem: The upcoming extension standard (CNW ZIP) will allow third-party add-ons

- Automation boost: Webhook support enables event-triggered actions from other services

- Code integration: Native Claude Code functionality hints at deeper programming capabilities