Tencent's Breakthrough Video Tech Speeds Up Generation by 11.8 Times

Tencent's Video Generation Leap: Faster, Smarter, Open-Source

For anyone working with AI-generated video, wait times and computing costs have been constant headaches. Tencent's Hunyuan research team has just handed the community a powerful remedy - their new DisCa technology cuts generation time by a factor of up to 11.8 while maintaining output quality.

How DisCa Changes the Game

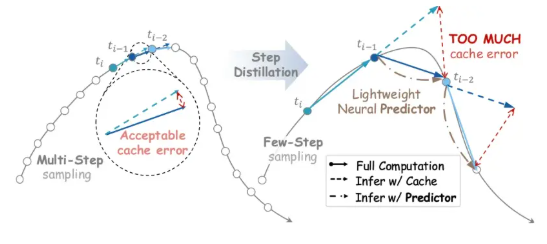

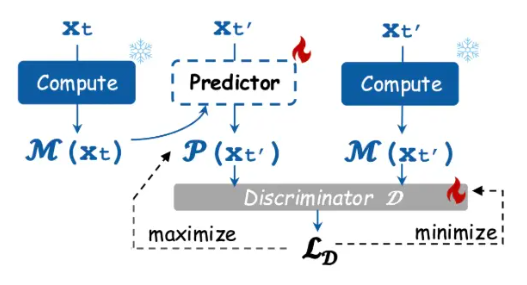

The secret lies in what the team calls "learnable feature caching." Feature caching saves the intermediate results a model computes at one generation step so they can be reused at later steps instead of being recomputed. DisCa goes a step further: rather than reusing those cached results unchanged, a lightweight neural network predicts how they will evolve from one step to the next. Traditional caching methods stumble when applied to models that have already been streamlined for speed, but DisCa's learned predictor keeps the output on track.

"We trained it through adversarial learning," explains the team, "so it doesn't just guess - it learns the actual evolution patterns of video features." This breakthrough means creators can get their AI-generated videos up to 11.8 times faster without the usual trade-offs in quality.
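To make the caching idea concrete, here is a minimal toy sketch in Python. It is not Tencent's implementation: the names `toy_features`, `fit_alpha`, and `run_with_cache` are hypothetical, the "predictor" is a single coefficient fitted by least squares rather than a neural network trained adversarially, and the "features" are two-element lists. It only shows the general pattern of running the expensive model on some steps and letting a cheap, fitted predictor fill in the rest from cached values.

```python
from typing import Callable, List, Tuple

Vector = List[float]


def toy_features(step: int) -> Vector:
    """Stand-in for an expensive model pass (hypothetical, evolves linearly)."""
    return [0.5 * step, 2.0 - 0.25 * step]


def fit_alpha(trajectory: List[Vector]) -> float:
    """Fit alpha in f[t] ~ f[t-1] + alpha * (f[t-1] - f[t-2]) by least squares.

    This one-parameter fit replaces the learned neural predictor described
    in the article; it is an assumption made to keep the sketch small.
    """
    num = 0.0
    den = 0.0
    for t in range(2, len(trajectory)):
        for prev2, prev1, cur in zip(trajectory[t - 2], trajectory[t - 1], trajectory[t]):
            delta = prev1 - prev2
            num += delta * (cur - prev1)
            den += delta * delta
    return num / den if den else 0.0


def run_with_cache(
    expensive: Callable[[int], Vector],
    num_steps: int,
    refresh_every: int,
    alpha: float,
) -> Tuple[List[Vector], int]:
    """Return the features for every step and the number of expensive calls."""
    history: List[Vector] = []
    calls = 0
    for step in range(num_steps):
        if step < 2 or step % refresh_every == 0:
            # Full computation: needed to seed the cache and to refresh it.
            feat = expensive(step)
            calls += 1
        else:
            # Cheap path: predict this step from the two most recent entries.
            prev2, prev1 = history[-2], history[-1]
            feat = [b + alpha * (b - a) for a, b in zip(prev2, prev1)]
        history.append(feat)
    return history, calls


# "Train" the predictor on a short recorded trajectory, then reuse it.
alpha = fit_alpha([toy_features(s) for s in range(6)])
features, expensive_calls = run_with_cache(toy_features, 12, 4, alpha)
print(f"alpha={alpha:.3f}, expensive calls={expensive_calls} of 12 steps")
```

In this toy setup the expensive function runs on only a few of the twelve steps. The speedup in a real system depends on how cheap the predictor is relative to the full model and on how often the cache has to be refreshed to keep quality intact.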

Improving Upon MIT's Work

The team didn't stop there. They took MIT's promising MeanFlow technique - great for images but problematic for videos - and made it work better for moving pictures. Their solution was surprisingly straightforward: dial back the ambition.

"One-step generation sounds amazing," says the research paper, "but it was causing training problems." By setting more realistic step ranges during training, they created R-MeanFlow - an approach that aligns with findings from both MIT and Google teams.
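The article does not say exactly how the step ranges are restricted, so the following Python sketch shows only one possible reading. MeanFlow-style training samples pairs of times (r, t) with r <= t and learns the average velocity over that interval; one-step generation corresponds to the full interval from 0 to 1. The hypothetical `sample_interval` helper below caps the interval length with an assumed `max_span` parameter, so training never asks the model to cover the whole trajectory in a single jump. The parameter name and the uniform sampling scheme are illustrative assumptions, not details taken from the paper.

```python
import random
from typing import Tuple


def sample_interval(rng: random.Random, max_span: float = 1.0) -> Tuple[float, float]:
    """Sample (r, t) with 0 <= r <= t <= 1 and t - r <= max_span.

    max_span=1.0 leaves the range unrestricted, so the full one-step
    interval is allowed; smaller values restrict training to shorter jumps.
    """
    t = rng.random()
    # The interval cannot be longer than max_span or extend below time 0.
    span = rng.random() * min(max_span, t)
    return t - span, t


rng = random.Random(0)
unrestricted = [sample_interval(rng, max_span=1.0) for _ in range(1000)]
restricted = [sample_interval(rng, max_span=0.25) for _ in range(1000)]

longest_unrestricted = max(t - r for r, t in unrestricted)
longest_restricted = max(t - r for r, t in restricted)
print(f"longest interval, unrestricted: {longest_unrestricted:.3f}")
print(f"longest interval, restricted:   {longest_restricted:.3f}")
```

A model trained only on shorter intervals needs a few sampling steps instead of one, which matches the article's description of trading the one-step goal for more stable training.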

Available Now for Everyone

In keeping with the spirit of open research, Tencent has made both the code and model weights publicly available. The technology is already proving its worth in HunyuanVideo-1.5, currently among the best open-source video generation models available.

Key Points:

- DisCa technology accelerates AI video generation by up to 11.8x

- Uses learnable feature caching with neural network prediction

- Improves upon MIT's MeanFlow for better video results

- Full code and model weights are publicly available as open source

- Already implemented in HunyuanVideo-1.5

For video creators and developers alike, this represents more than just a speed boost - it's a fundamental shift in what's possible with AI-generated content.