Google's AI Breakthrough Teaches Machines to See Like Humans

The Blind Spot in AI Vision

Ask an AI system what's in a photograph, and you'll get a detailed description. But pose a more precise question - "Where exactly is the panda's left hind leg?" - and the answers become vague. This limitation isn't just a quirk of individual models, but a fundamental challenge across the entire field of visual AI.

The Counterintuitive Discovery

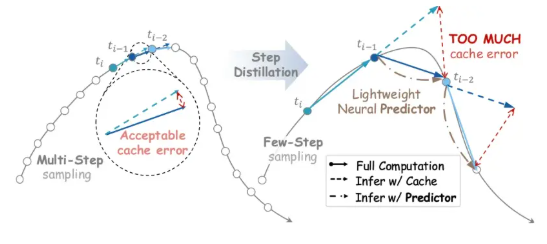

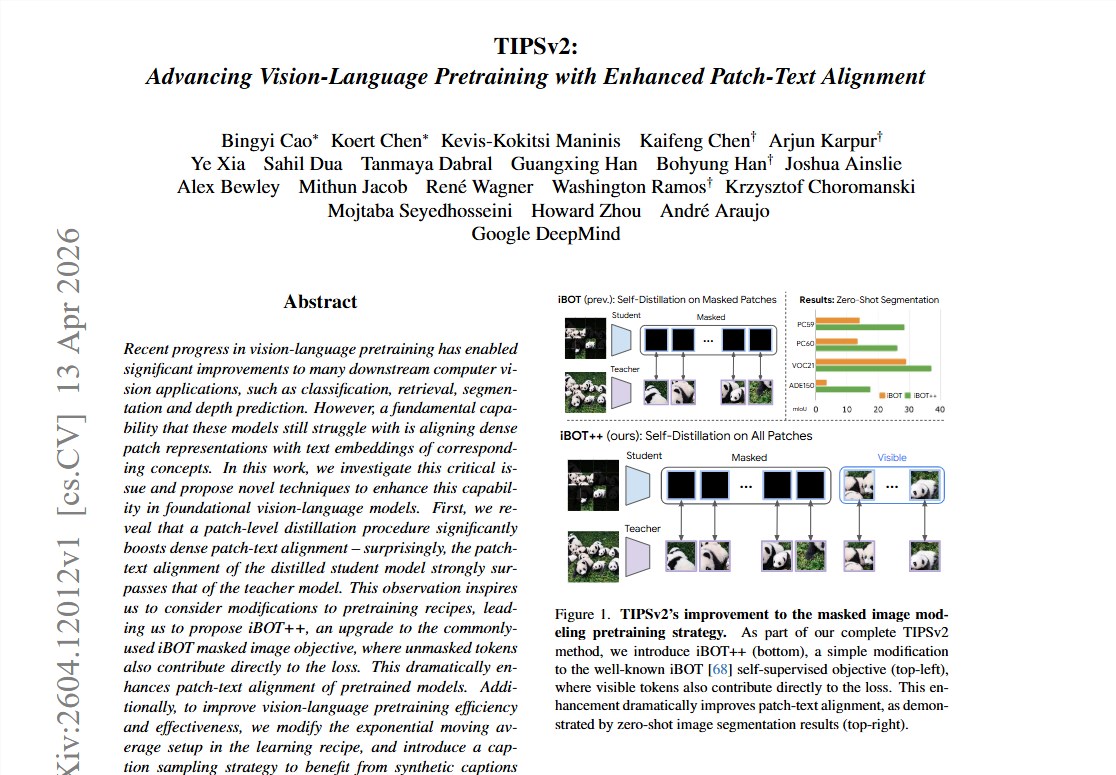

Google DeepMind researchers made a surprising observation: in fine segmentation tasks, smaller 'student' models frequently outshine their larger 'teacher' counterparts. The secret? The distillation process removes masking mechanisms, forcing the model to examine every detail - creating what the team calls "full-area supervision."

Three Key Innovations

1. iBOT++: From Puzzle Pieces to Complete Pictures

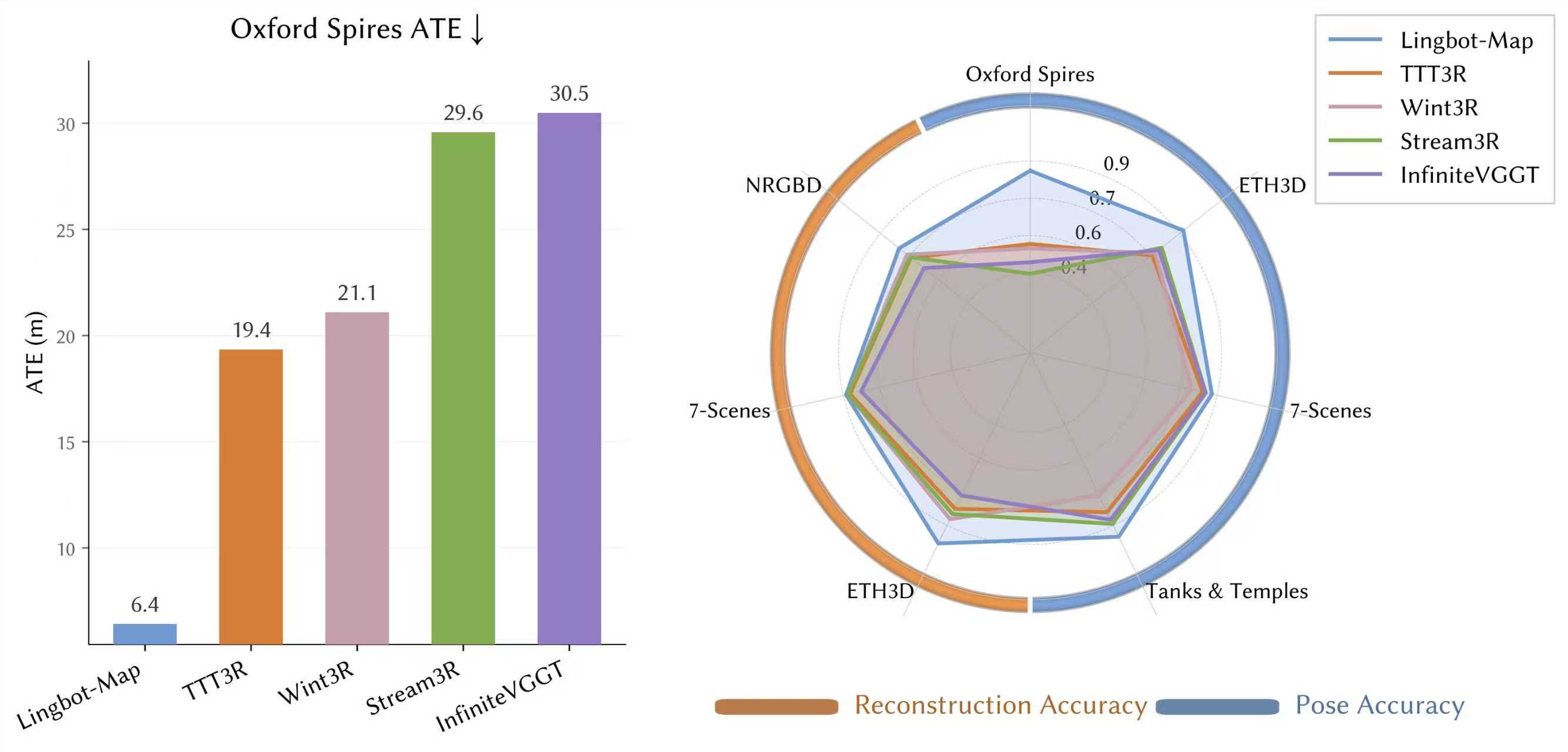

Traditional training only calculates loss for masked image regions, leaving visible areas neglected. iBOT++ demands precise supervision for all visible areas - transforming the process from a puzzle game to careful reading. This single change boosted zero-shot segmentation performance by 14.1 percentage points.

2. Head-only EMA: Doing More With Less

Previous methods required maintaining two nearly identical large models simultaneously, consuming enormous resources. TIPSv2's breakthrough? The image-text contrastive loss alone can stabilize the backbone network, so only the final projection head needs duplication. The result: 42% fewer training parameters with negligible performance loss.

3. Multi-granularity Text Pairing: Keeping AI on Its Toes

By randomly mixing short web descriptions, medium detailed explanations, and Gemini-generated long descriptions during training, the system alternates between easy and challenging tasks. This approach prevents the model from getting lazy while ensuring no details get overlooked.

Real-World Impact

TIPSv2's performance speaks for itself. In evaluations across nine tasks and 20 datasets, it set new benchmarks in zero-shot semantic segmentation while outperforming comparison models with 56% more parameters in image-text retrieval and classification.

With fully open-sourced code and model weights, TIPSv2 offers immediate value for medical imaging, autonomous driving, and industrial inspection applications where precise visual understanding is critical.

Key Points:

- Solves AI's "global understanding vs local precision" dilemma

- Achieves 14.1% better segmentation with full-area supervision

- Reduces training parameters by 42% through optimized architecture

- Outperforms larger models in multiple benchmark tests

- Open-source availability accelerates practical applications