Ant Group's Lingbo Tech Open-Sources Breakthrough 3D Mapping Tool

A Leap Forward in 3D Mapping Technology

Ant Group's Lingbo Technology has taken the wraps off its groundbreaking LingBot-Map, open-sourcing a system that could democratize real-time 3D reconstruction. What sets this apart? It delivers professional-grade results using nothing more than the RGB camera found in most smartphones and consumer devices.

How It Works: Breaking the Processing Bottleneck

The magic lies in LingBot-Map's streaming architecture. Traditional systems have to wait until all the data has been captured before starting the heavy computational work. Imagine trying to navigate a room while blindfolded, only getting the layout after you've finished walking through it. LingBot-Map removes that limitation, processing spatial data continuously as the camera moves.
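To make the contrast concrete, here is a minimal Python sketch of batch versus streaming processing. It is an illustration only, not LingBot-Map's code: the `Frame` type, the function names, and the toy "pose update" (summing per-frame motion) are all assumptions made for this example.

```python
from typing import Iterable, Iterator, List, Tuple

# Hypothetical stand-in for a camera frame: the (dx, dy) motion it implies.
Frame = Tuple[float, float]
Pose = Tuple[float, float]


def offline_trajectory(frames: Iterable[Frame]) -> List[Pose]:
    """Batch style: buffer the whole capture, then compute every pose."""
    buffered = list(frames)  # no pose is available until capture has finished
    poses: List[Pose] = []
    x, y = 0.0, 0.0
    for dx, dy in buffered:
        x, y = x + dx, y + dy
        poses.append((x, y))
    return poses


def streaming_trajectory(frames: Iterable[Frame]) -> Iterator[Pose]:
    """Streaming style: yield an updated pose as soon as each frame arrives."""
    x, y = 0.0, 0.0
    for dx, dy in frames:
        x, y = x + dx, y + dy  # toy update; a real system would run its model here
        yield (x, y)
```

Both functions produce the same trajectory for the same input. The difference is when results appear: the streaming version hands back a pose after the first frame and never holds the full capture in memory, which is what lets a robot or AR device act while the camera is still moving.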

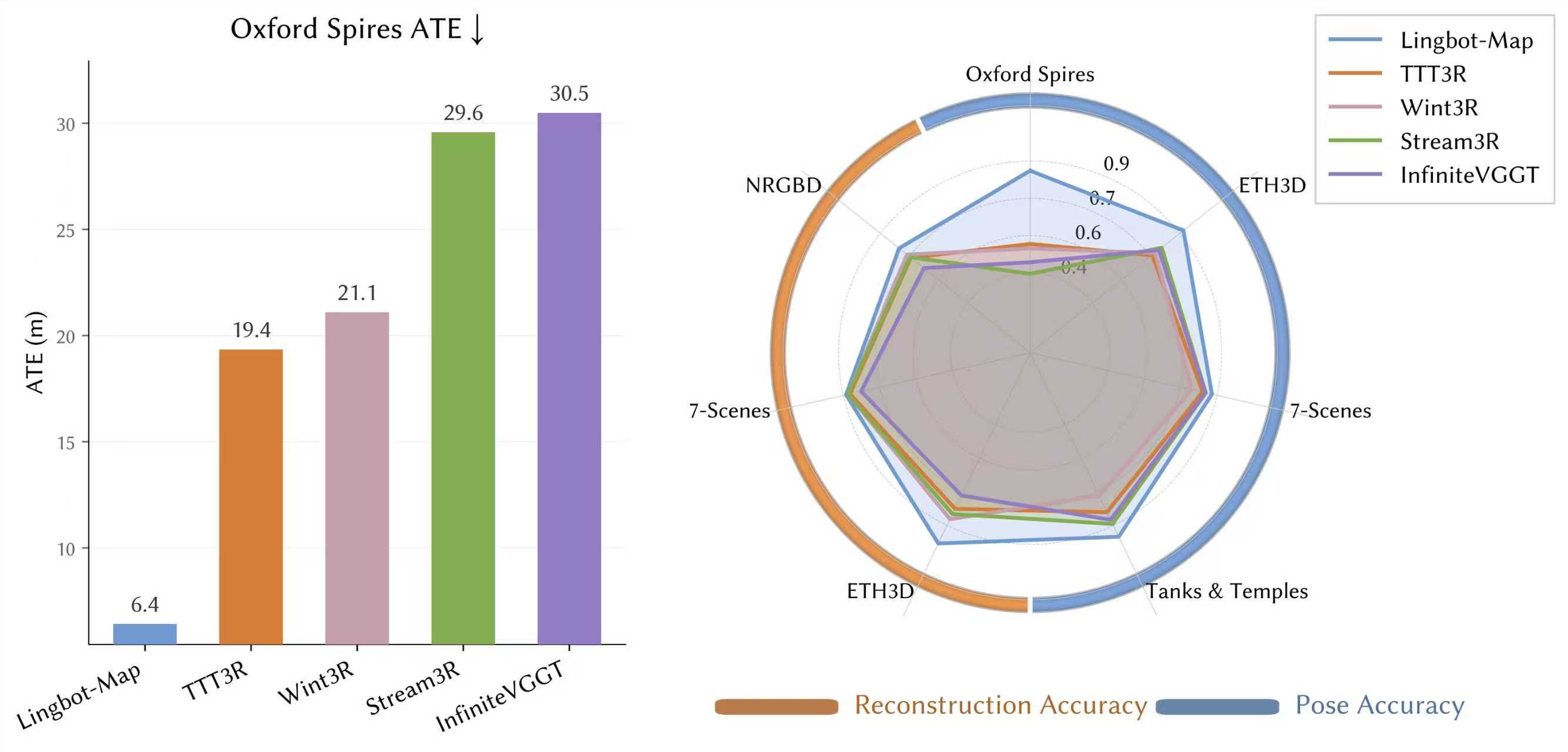

Early benchmarks tell an impressive story. On Oxford's challenging Spires dataset, the system achieved trajectory errors just one-third those of previous streaming methods. Surprisingly, it even outperformed some offline algorithms, which have the advantage of processing the full sequence after capture. The team has demonstrated the system maintaining consistent accuracy across video sequences containing tens of thousands of frames.
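The article does not say which metric sits behind "trajectory errors." One widely used measure is absolute trajectory error (ATE), reported as the root-mean-square distance between estimated and ground-truth camera positions. The sketch below assumes that metric and assumes the two trajectories are already aligned and time-synchronized; the function name and the sample coordinates are made up for illustration and are not benchmark data.

```python
import math
from typing import Sequence, Tuple

Point3 = Tuple[float, float, float]


def ate_rmse(estimated: Sequence[Point3], ground_truth: Sequence[Point3]) -> float:
    """Root-mean-square position error between two pre-aligned trajectories."""
    if not estimated or len(estimated) != len(ground_truth):
        raise ValueError("trajectories must be non-empty and of equal length")
    squared_errors = [
        sum((e - g) ** 2 for e, g in zip(est, gt))  # squared Euclidean distance
        for est, gt in zip(estimated, ground_truth)
    ]
    return math.sqrt(sum(squared_errors) / len(squared_errors))


# Toy values only: a straight-line path and two estimates with constant drift.
truth = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (2.0, 0.0, 0.0)]
baseline = [(x, y + 0.3, z) for x, y, z in truth]
improved = [(x, y + 0.1, z) for x, y, z in truth]

ratio = ate_rmse(improved, truth) / ate_rmse(baseline, truth)
```

With these toy numbers, `ratio` comes out at about one-third, which is the kind of relationship the Spires result describes: the same path, with the estimate straying only a third as far from the truth.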

Practical Applications: From Robots to Everyday Tech

This isn't just academic research. The technology could soon power:

- More responsive robot navigation systems

- Enhanced AR experiences without specialized hardware

- Affordable autonomous vehicle perception solutions

"What excites us most is lowering the barrier to high-quality spatial understanding," explains a Lingbo spokesperson. "When any device with a camera can map its environment in real-time, it opens doors we haven't even imagined yet."

The Bigger Picture

LingBot-Map represents another milestone for Ant Lingbo, following their work on depth estimation and large language action models. By tackling real-time spatial understanding, they're building a more complete foundation for embodied AI systems. The decision to open-source the technology suggests a strategic move to accelerate adoption and ecosystem development.

Key Points:

- Runs 3D mapping at ~20 FPS using a standard RGB camera

- Cuts trajectory error to one-third that of previous streaming methods on Oxford's Spires dataset

- Maintains consistent accuracy across video sequences of tens of thousands of frames

- Open-source availability could spur rapid industry adoption