Shenzhen Cracks Down on AI Platforms Spreading Vulgar Content

Shenzhen Intensifies Online Content Regulation

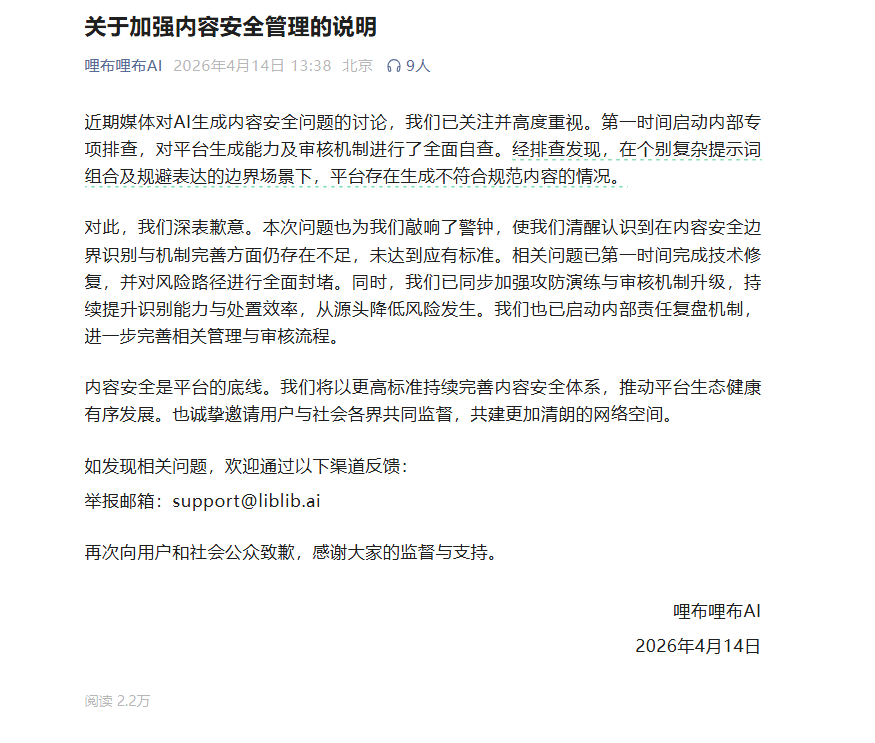

The Shenzhen Internet Information Office has taken action against several AI platforms accused of distributing vulgar content and false information. The move is part of the city's broader "Clear and Bright" campaign, which aims to clean up China's rapidly growing short-video sector.

Targeted Enforcement Action

Authorities named Tianyan and Tiqu AI among the platforms under scrutiny for allegedly hosting inappropriate material. The crackdown so far has involved:

- Removal of dozens of accounts from major platforms including Huawei App Market and WeChat Video Accounts

- Special focus on content potentially harmful to minors

- Restrictions on live streaming accounts promoting excessive tipping or negative emotions

"We're seeing concerning trends where advanced technologies are being misused to spread harmful content," explained a cyber affairs department spokesperson. "This campaign sends a clear message that such practices won't be tolerated."

Protecting Young Internet Users

The operation places particular emphasis on safeguarding teenagers. Officials report that numerous accounts containing age-inappropriate material have been permanently shut down. Educators have welcomed the move, noting that younger users are especially vulnerable to misleading or explicit content.

"When platforms prioritize engagement over ethics, children often pay the price," said Li Wei, a Shenzhen-based high school teacher. "These measures help create necessary guardrails."

Ongoing Digital Governance Efforts

The current campaign represents just one phase in Shenzhen's comprehensive approach to internet regulation:

- Continuous monitoring of emerging digital platforms

- Regular policy updates addressing new technological developments

- Collaboration with tech companies to improve content moderation systems

- Public education initiatives promoting digital literacy

The city plans to maintain rigorous oversight as short-video consumption continues to grow rapidly nationwide.

Key Points:

- Platform Accountability: Major tech companies face increased responsibility for hosted content

- AI Regulation: Authorities scrutinizing potential misuse of artificial intelligence tools

- Youth Protection: Special safeguards implemented for underage users

- Industry Response: Platforms adjusting algorithms and moderation practices proactively