LibuLibu AI addresses content safety concerns with system upgrades

LibuLibu AI Takes Action After Content Generation Flaws

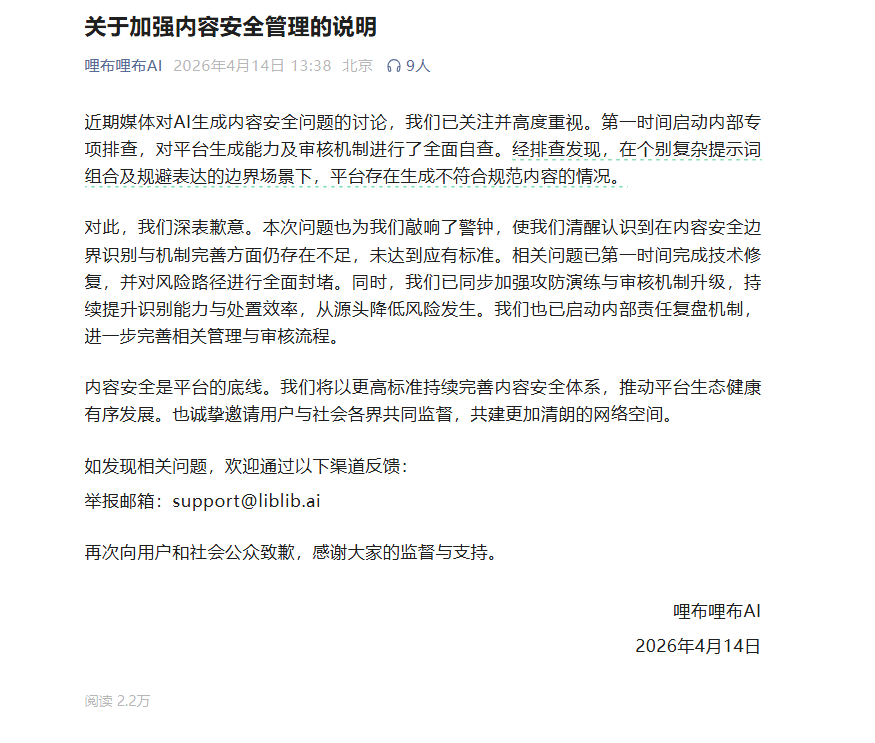

Facing mounting scrutiny over AI-generated content safety, LibuLibu AI has stepped forward with both an apology and concrete solutions. The company acknowledged that its platform occasionally produced substandard outputs when users employed sophisticated prompt combinations or worked around existing content filters.

The Fixes: More Than Just Patches

The AI firm didn't stop at technical repairs. Engineers overhauled the system's vulnerable points and implemented stronger safeguards against potential misuse. "We've turned every stone to eliminate known risks," the statement says, though the company remains cautious about future challenges.

A multi-layered approach now includes:

- Enhanced penetration testing and upgraded review processes to catch problematic content faster

- Management process reviews addressing content security at its roots

- New accountability measures for internal teams

Why This Matters Now

This incident highlights the tightrope walk facing AI platforms. As LibuLibu's technical lead explained, "The more creative users get with prompts, the harder it becomes to maintain quality control without stifling innovation." The company now encourages users to report concerns directly to support@liblib.ai, betting on crowdsourced vigilance.

Industry observers see this as part of a larger trend. "We're entering an era where AI companies can't just focus on what their systems can do," notes tech analyst Miranda Cho. "They'll be judged equally on what they prevent their systems from doing."

Looking Ahead

LibuLibu's statement positions the incident as a turning point, promising "higher standards" for ecosystem health. But with AI-generated content becoming ubiquitous, the real test will be whether these measures satisfy both regulators and a skeptical public.

Key Points:

- LibuLibu AI admits some generated content failed quality checks

- Complete technical overhaul implemented with new safeguards

- Review processes upgraded with faster illegal content detection

- Company invites public oversight through dedicated reporting channel

- Incident reflects growing compliance pressures in AI industry