Perplexity's BrowseSafe Shields AI Browsers from Hidden Web Threats

Perplexity Fortifies AI Browsers Against Web-Based Attacks

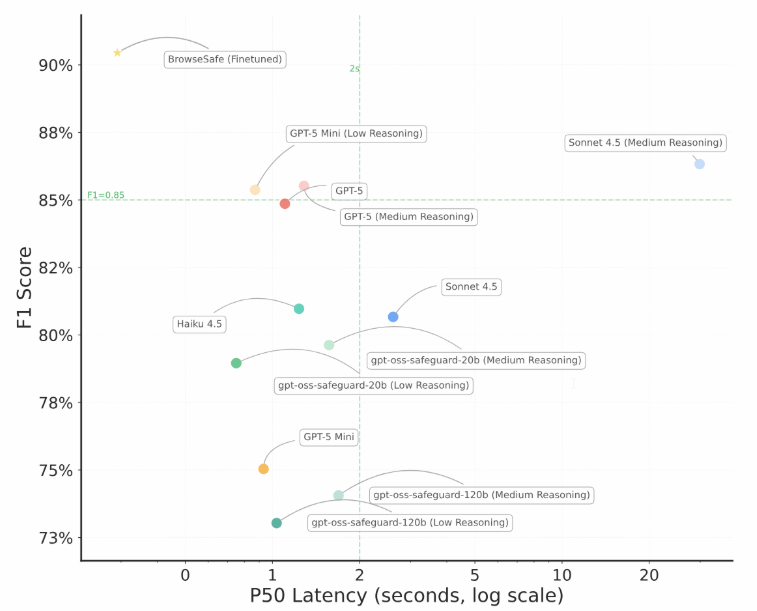

In a move to secure the growing ecosystem of AI-powered browsers, Perplexity has launched BrowseSafe - a defense system specifically designed to protect automated agents from hidden web threats. The technology boasts an impressive 91% success rate in catching prompt injection attacks, significantly outperforming existing solutions like PromptGuard-2 (35%) and even advanced models like GPT-5 (85%).

Why AI Browsers Need Special Protection

The rise of AI browser agents has opened new frontiers in productivity - and new vulnerabilities. Earlier this year, Perplexity's own Comet browser demonstrated how AI agents could authenticate and interact with sensitive services like banking portals and corporate systems. This powerful access comes with risks: attackers can now plant malicious code within ordinary-looking web pages, tricking agents into revealing confidential data or performing unauthorized actions.

"We're seeing attack methods evolve faster than traditional defenses can keep up," explains a Perplexity security researcher. "Standard benchmarks don't account for the sophisticated ways hackers hide dangerous instructions in today's complex web environments."

Building a Smarter Safety Net

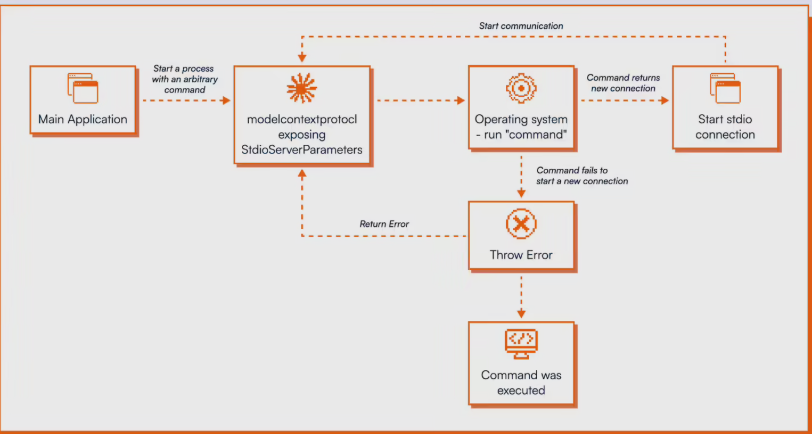

Perplexity's solution analyzes threats across three critical dimensions:

- Attack type (from direct prompts to subtle social engineering)

- Injection strategy (how malicious content gets embedded)

- Language style (including multilingual approaches)

The system particularly focuses on "hard-to-detect" content that appears harmless at first glance but contains dangerous triggers. Using a hybrid architecture that combines speed with deep analysis, BrowseSafe scans pages in real-time without slowing down the browsing experience.

Current Limitations and Future Directions

While effective against most threats, the system shows some gaps:

- Detection rates drop to 76% for multilingual attacks

- HTML comments prove easier to scan than visible page elements

- About 10% of sophisticated attacks still slip through defenses

Perplexity has taken the unusual step of making its benchmark data and research publicly available. "Security is a collective challenge," notes their technical paper. "By sharing our framework, we hope to accelerate industry-wide improvements in AI agent protection."

Key Points:

🔹 91% detection rate surpasses current market solutions

🔹 Specialized protection for AI browser privilege escalation risks

🔹 Three-tier defense combines speed with deep language analysis

🔹 Publicly released benchmarks aim to advance industry standards