NVIDIA's Lyra 2.0 Transforms Single Photos into Vast 3D Worlds

NVIDIA's Lyra 2.0: A Quantum Leap in 3D Scene Generation

Imagine taking a single snapshot of your backyard and turning it into a fully explorable 3D world that stretches roughly 90 meters, nearly the length of a football field. That's the promise of NVIDIA's newly released Lyra 2.0, which officially launched on April 16, 2026. The system marks a significant advance in artificial intelligence's ability to understand and recreate three-dimensional spaces.

How Lyra 2.0 Works Its Magic

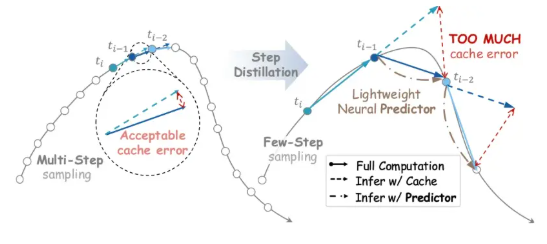

The technology tackles one of the most persistent challenges in virtual environment creation: maintaining scene consistency over long distances. Traditional methods often produce distorted or fragmented results when generating large spaces. Lyra 2.0 solves this through two clever innovations:

- Real-time geometry storage: The system remembers the 3D structure of every frame, ensuring seamless transitions when viewpoints change

- Self-correcting training: Engineers intentionally introduced flawed data during development, teaching the model to recognize and fix its own mistakes
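To make the two ideas above concrete, here is a minimal Python sketch. It is an illustration only, not NVIDIA's implementation: the `Camera`, `GeometryCache`, and `corrupt` names are hypothetical, the camera is a simple pinhole model with no rotation, and Gaussian noise stands in for whatever flawed data the engineers actually used. The cache lifts pixels with known depth into world-space points and reprojects them into a new viewpoint; `corrupt` perturbs stored points so a training loop could pair noisy inputs with clean targets.

```python
import random
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Tuple

Point = Tuple[float, float, float]
Pixel = Tuple[float, float]


@dataclass
class Camera:
    """Pinhole camera looking along +z; rotation is omitted for brevity."""
    position: Point
    focal: float = 100.0          # focal length in pixels (assumed value)
    center: Pixel = (64.0, 64.0)  # principal point (assumed value)

    def unproject(self, pixel: Pixel, depth: float) -> Point:
        """Lift a pixel with known depth to a world-space point."""
        u, v = pixel
        x = (u - self.center[0]) * depth / self.focal + self.position[0]
        y = (v - self.center[1]) * depth / self.focal + self.position[1]
        return (x, y, depth + self.position[2])

    def project(self, point: Point) -> Optional[Pixel]:
        """Project a world-space point; None if it lies behind the camera."""
        z = point[2] - self.position[2]
        if z <= 0:
            return None
        u = self.focal * (point[0] - self.position[0]) / z + self.center[0]
        v = self.focal * (point[1] - self.position[1]) / z + self.center[1]
        return (u, v)


@dataclass
class GeometryCache:
    """Hypothetical per-frame memory of world-space points."""
    frames: Dict[int, List[Point]] = field(default_factory=dict)

    def store(self, frame_id: int, camera: Camera,
              samples: List[Tuple[Pixel, float]]) -> None:
        """Remember the 3D points seen in one frame."""
        self.frames[frame_id] = [camera.unproject(p, d) for p, d in samples]

    def reproject(self, camera: Camera) -> List[Pixel]:
        """Pixels where all remembered points land in a new view."""
        hits = []
        for points in self.frames.values():
            for point in points:
                pixel = camera.project(point)
                if pixel is not None:
                    hits.append(pixel)
        return hits


def corrupt(points: List[Point], sigma: float,
            rng: random.Random) -> List[Point]:
    """Add Gaussian noise to stored points, imitating accumulated drift.

    A training loop could feed the corrupted points as input while keeping
    the clean points as the target, so the model learns to undo the error.
    """
    return [tuple(c + rng.gauss(0.0, sigma) for c in p) for p in points]


cache = GeometryCache()
cache.store(0, Camera(position=(0.0, 0.0, 0.0)), [((74.0, 64.0), 10.0)])
print(cache.reproject(Camera(position=(0.0, 0.0, 5.0))))  # [(84.0, 64.0)]
```

Because the remembered point stays fixed in world space, moving the camera 5 units forward shifts it from 10 to 20 pixels right of the image center, which is the kind of consistency a geometry memory is meant to preserve.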

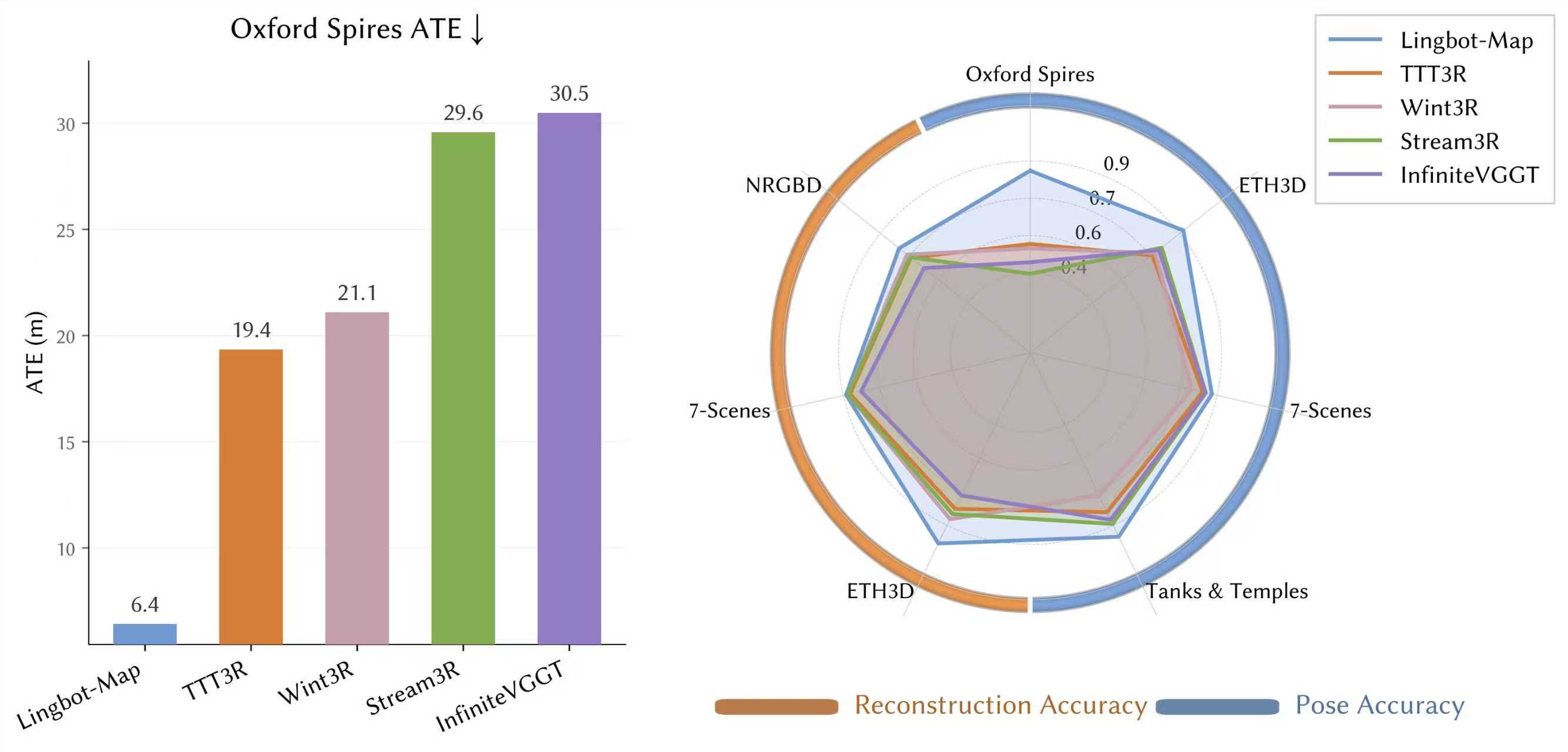

In benchmark tests, Lyra 2.0 outperforms six competing systems (including GEN3C and Yume-1.5) in both image quality and camera control. The fast version operates at 13 times the speed of conventional methods, a significant gain for real-time applications.

Practical Applications: Beyond Virtual Playgrounds

What makes this technology particularly exciting is its immediate practicality. Lyra 2.0 already integrates smoothly with NVIDIA's Isaac Sim physics engine, allowing generated environments to be exported as complete mesh models. This capability transforms how robots and autonomous systems train:

"Instead of painstakingly collecting real-world 3D data," explains an NVIDIA spokesperson, "machines can now practice in AI-generated worlds that perfectly mimic physical reality."
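The article does not say which mesh format Lyra 2.0 exports or how Isaac Sim ingests it. As a rough illustration of what "exporting a mesh model" involves, the sketch below serializes a triangle mesh to Wavefront OBJ text, a widely supported plain-text format. The choice of OBJ and the `mesh_to_obj` helper are assumptions made for this example, not part of either product.

```python
from typing import List, Sequence, Tuple

Vertex = Tuple[float, float, float]
Face = Tuple[int, int, int]  # zero-based vertex indices


def mesh_to_obj(vertices: Sequence[Vertex], faces: Sequence[Face]) -> str:
    """Serialize a triangle mesh as Wavefront OBJ text."""
    lines: List[str] = []
    for x, y, z in vertices:
        lines.append(f"v {x:.6f} {y:.6f} {z:.6f}")
    for face in faces:
        if any(i < 0 or i >= len(vertices) for i in face):
            raise ValueError(f"face {face} references a missing vertex")
        # OBJ face indices are one-based, so shift each index by one.
        a, b, c = (i + 1 for i in face)
        lines.append(f"f {a} {b} {c}")
    return "\n".join(lines) + "\n"


# A single triangle in the z = 0 plane.
triangle = mesh_to_obj(
    [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0)],
    [(0, 1, 2)],
)
print(triangle)
```

A real pipeline would also carry materials, textures, and collision properties, which this sketch leaves out.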

While currently limited to static scenes, Lyra 2.0's improvements in scale and stability provide crucial infrastructure for developing:

- More sophisticated autonomous vehicles

- Advanced general-purpose robots, often framed as a step toward artificial general intelligence (AGI)

- Next-generation virtual training environments

The Road Ahead

The release comes at a pivotal moment as demand grows for embodied AI training. As virtual environments become increasingly important for machine learning, tools like Lyra 2.0 that can quickly generate high-quality, large-scale spaces will likely become essential. NVIDIA's breakthrough suggests we're entering an era where creating entire virtual worlds could become as simple as taking a photograph.

Key Points:

- 90-meter generation: Creates expansive 3D environments from single photos

- Superior performance: Outperforms six competitors in quality and control metrics

- 13x speed boost: Fast version dramatically increases generation efficiency

- Physical integration: Works seamlessly with NVIDIA Isaac Sim for robotics training

- Future potential: Foundation for advancements in autonomous systems and AGI