Microsoft Uncovers Sneaky AI Chat Vulnerability

Microsoft Sounds Alarm on AI Chat Privacy Risk

Security researchers at Microsoft have uncovered a disturbing vulnerability affecting modern AI chat services. Dubbed "Whisper Leak," this side-channel attack allows potential eavesdroppers to infer conversation topics—even when communications are encrypted.

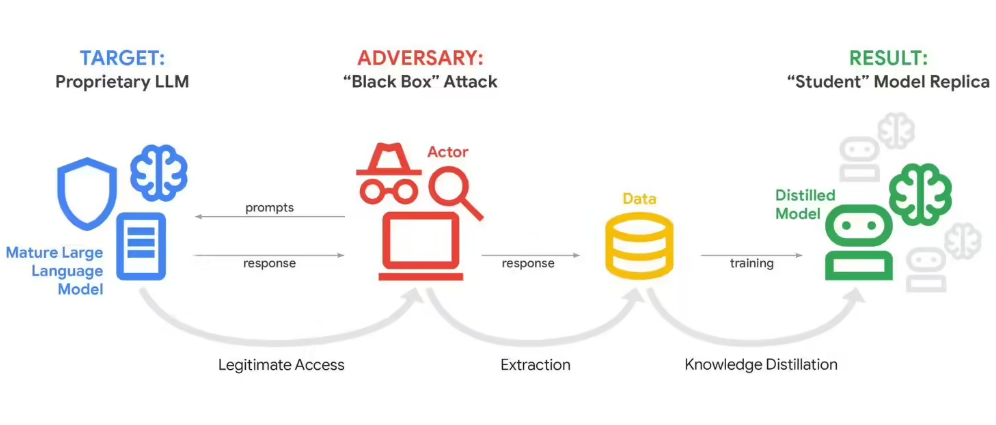

How the Attack Works

The frightening effectiveness of Whisper Leak comes from its simplicity. Instead of breaking TLS encryption, attackers analyze metadata, specifically the size and timing of the encrypted packets. It is the digital equivalent of studying an envelope's size and postmark rather than reading the letter inside.

"AI services stream responses piece by piece to feel responsive," explains lead researcher Dr. Elena Petrov. "But this creates unique fingerprints in network traffic patterns that our trained models can recognize with alarming precision."

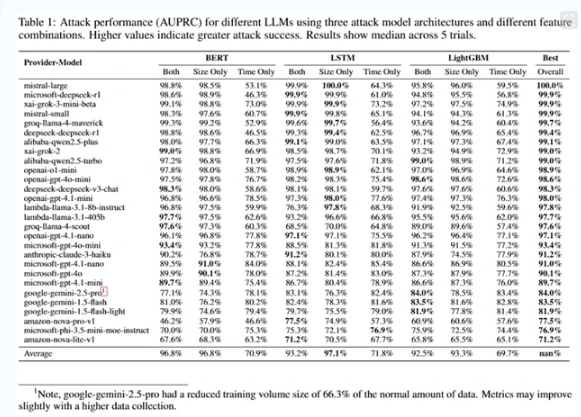

In controlled tests, the system identified conversations about sensitive topics like financial crimes with 98% accuracy, just by examining packet sizes and timing. Different subjects produce distinct sequences of packet sizes and inter-arrival times, and a machine learning classifier can learn to tell them apart.
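To make the idea concrete, here is a minimal Python sketch of the kind of metadata an eavesdropper could summarize from one encrypted response stream. It is an illustration only, not the researchers' actual pipeline: the function name `trace_features`, the chosen features, and the sample trace are all assumptions made for this example.

```python
from statistics import mean, pstdev


def trace_features(packets):
    """Summarize one encrypted stream using only metadata.

    `packets` is a list of (timestamp_seconds, size_bytes) tuples.
    No payload is read: only sizes and arrival times are used.
    The feature set is hypothetical and chosen for illustration.
    """
    sizes = [size for _, size in packets]
    times = [t for t, _ in packets]
    # Gaps between consecutive packets capture the timing pattern.
    gaps = [later - earlier for earlier, later in zip(times, times[1:])]
    return {
        "packet_count": len(sizes),
        "total_bytes": sum(sizes),
        "mean_size": mean(sizes),
        "size_stdev": pstdev(sizes),
        "mean_gap": mean(gaps) if gaps else 0.0,
        "duration": times[-1] - times[0],
    }


# Made-up trace: four packets observed over 160 milliseconds.
example_trace = [(0.00, 120), (0.05, 96), (0.11, 140), (0.16, 88)]
features = trace_features(example_trace)
```

Feature vectors like this, collected across many conversations with known topics, are the sort of input a classifier could be trained on to guess the topic of a new, unseen stream.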

Who's At Risk?

This isn't just theoretical. Journalists discussing sources, activists planning protests, or patients seeking medical advice could all be exposed:

- Public WiFi operators could monitor traffic patterns

- Internet service providers might flag "suspicious" conversations

- Authoritarian regimes could use it to identify dissidents

The Industry Responds

Major AI providers have already begun implementing countermeasures:

- Adding random data to mask true packet sizes

- Bundling responses to obscure timing patterns

- Inserting fake network traffic as decoys

But these fixes come with tradeoffs—slower response times and higher data usage that might frustrate users expecting instant answers.
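The first two countermeasures in the list above can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not any provider's real implementation: the names `pad_chunk`, `unpad_chunk`, and `batch_tokens`, the 2-byte length header, and the `MAX_PAD` and `batch_size` values are all hypothetical.

```python
import secrets

MAX_PAD = 64  # hypothetical upper bound on random padding, in bytes


def pad_chunk(chunk: bytes, max_pad: int = MAX_PAD) -> bytes:
    """Append a random amount of random bytes so the size on the wire
    no longer matches the true payload size.

    A 2-byte length header lets the receiver strip the padding, so
    this sketch only handles chunks shorter than 65536 bytes.
    """
    pad_len = secrets.randbelow(max_pad + 1)
    header = len(chunk).to_bytes(2, "big")
    return header + chunk + secrets.token_bytes(pad_len)


def unpad_chunk(padded: bytes) -> bytes:
    """Recover the original payload using the length header."""
    length = int.from_bytes(padded[:2], "big")
    return padded[2:2 + length]


def batch_tokens(tokens, batch_size=4):
    """Group streamed tokens so several are sent per network write,
    which blurs the per-token timing pattern."""
    return [
        "".join(tokens[i:i + batch_size])
        for i in range(0, len(tokens), batch_size)
    ]


padded = pad_chunk(b"hello")
restored = unpad_chunk(padded)
batches = batch_tokens(list("abcdefghij"), batch_size=4)
```

The tradeoffs show up directly in the sketch: every padded chunk carries extra bytes, and batching means the first token in a group waits for the rest before anything is sent.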

The best protection? Avoid discussing truly sensitive matters through AI chatbots on untrusted networks until more robust solutions emerge.

The discovery highlights an uncomfortable truth: in our rush toward conversational AI, we may have overlooked some old-school privacy risks dressed in new technological clothes.