Microsoft's New AI Model Thinks Like Humans - Decides When to Go Deep

Microsoft Breaks New Ground with Self-Regulating AI Model

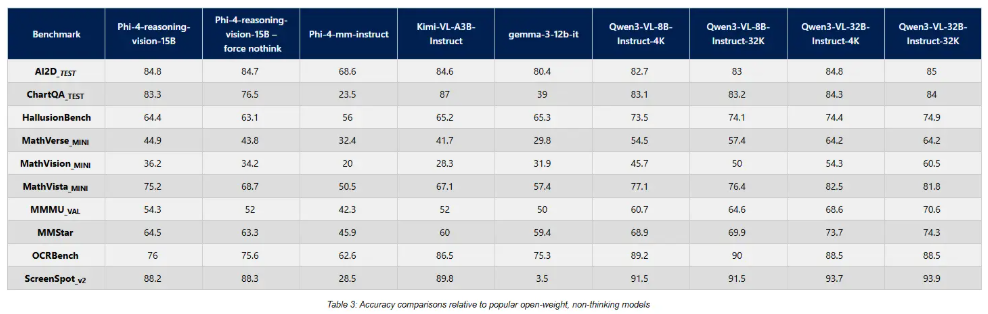

In a move that could change how we interact with artificial intelligence, Microsoft has released Phi-4-reasoning-vision-15B - an open-source model that decides for itself when to think deeply. This isn't your typical chatbot that plows through every question the same way; it actually evaluates task difficulty like a human would.

Smarter Thinking Through Selective Processing

The real magic lies in what Microsoft calls "adaptive thinking." Imagine asking a colleague two questions: "What's today's date?" and "Explain quantum physics." You'd expect an instant answer to the first but patience for the second. Phi-4-reasoning-vision-15B operates similarly, answering simple queries directly while automatically engaging deeper reasoning for complex problems.

Built Lean But Performs Strong

At just 15 billion parameters - modest by today's standards - Phi-4-reasoning-vision-15B punches above its weight class thanks to clever engineering:

- Multimodal mastery: Handles images, interface elements, and mathematical proofs with surprising finesse

- Efficient training: Learned from just 200 billion high-quality tokens instead of the usual trillions

- Local-friendly: Designed to run effectively on smaller systems where massive models struggle
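To put "local-friendly" in perspective, here is a back-of-the-envelope sketch of how much memory the weights of a 15-billion-parameter model would occupy at different storage precisions. The precisions listed are illustrative assumptions, not formats Microsoft has confirmed for this model, and the estimate covers weights only; activations and caches at inference time add to it.

```python
# Rough weight-memory estimate for a 15-billion-parameter model.
# Assumption: memory ~= parameter count x bytes per parameter (weights only).

PARAMS = 15_000_000_000  # parameter count stated in the article

# Bytes per parameter for some common storage precisions.
# These are illustrative choices, not confirmed release formats.
BYTES_PER_PARAM = {
    "fp32": 4.0,
    "fp16/bf16": 2.0,
    "int8": 1.0,
    "4-bit": 0.5,
}


def weight_memory_gb(params: int, bytes_per_param: float) -> float:
    """Return the approximate weight footprint in gigabytes (10^9 bytes)."""
    return params * bytes_per_param / 1e9


if __name__ == "__main__":
    for name, size in BYTES_PER_PARAM.items():
        print(f"{name:>10}: ~{weight_memory_gb(PARAMS, size):.1f} GB")
```

Under these assumptions, 16-bit weights come to roughly 30 GB, while 4-bit quantization would bring that to about 7.5 GB, which is the range where a single consumer GPU or a well-equipped laptop becomes plausible.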

The team used GPT-4o as a training assistant but cautions that real-world performance still needs thorough testing across diverse applications.

Why This Matters for Developers

While bigger models grab headlines, Phi-4-reasoning-vision-15B offers something potentially more valuable: practicality. Available now on Hugging Face and Microsoft Foundry, it gives developers:

- The ability to deploy capable AI without massive computing resources

- The flexibility of multimodal processing in a relatively compact package

- The novelty of self-regulating complexity - no more manual mode switches between quick responses and deep analysis

As open-source communities currently focus on alternatives like Qwen3.5, Microsoft's offering stands out for those prioritizing efficiency and local deployment.

Key Points:

- 🧠 Human-like judgment - Automatically determines when deep reasoning is needed without user intervention

- 🖼️ Sees and understands - Strong performance on visual tasks despite smaller size

- ⚡ Lean learning - Achieved impressive results with a fraction of the typical training data

- 💻 Developer-friendly - Open-source availability makes experimentation easy