Cohere Takes on AI Giants with Open-Source Speech Model

In a bold challenge to industry leaders, AI company Cohere unveiled Transcribe on March 26. The speech recognition model is surprisingly nimble and could change how we interact with devices. With just 2 billion parameters (small by the standards of today's large AI models), Transcribe delivers impressive accuracy while being small enough to run directly on smartphones and industrial hardware.
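To put the 2-billion-parameter figure in perspective, here is a rough back-of-the-envelope sketch of how much storage the model weights alone would need at different numeric precisions. The precisions listed are common choices in on-device AI generally; the article does not say which, if any, Transcribe uses, so treat them as assumptions. The estimate also ignores activations, audio buffers and runtime overhead.

```python
# Rough estimate of weight storage for a 2-billion-parameter model.
# The precisions below are assumptions for illustration, not confirmed
# details of how Transcribe is packaged or deployed.

PARAMS = 2_000_000_000  # parameter count reported in the article

# Bytes needed to store one parameter at each assumed precision.
BYTES_PER_PARAM = {
    "float32": 4.0,
    "float16": 2.0,
    "int8": 1.0,
    "int4": 0.5,
}


def weight_footprint_gb(params: int, bytes_per_param: float) -> float:
    """Return weight storage in gigabytes (1 GB = 10**9 bytes)."""
    return params * bytes_per_param / 1e9


for precision, size in BYTES_PER_PARAM.items():
    print(f"{precision:>8}: {weight_footprint_gb(PARAMS, size):.1f} GB")
```

By this arithmetic, the weights would take about 8 GB at 32-bit precision and about 1 GB at 4-bit precision, which suggests why a model of this size is plausible on phones and embedded hardware when stored at reduced precision.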

Breaking the Cloud Dependency

What makes Transcribe stand out? It tackles one of speech AI's biggest headaches: latency. Many speech recognition services depend on a constant cloud connection, which adds delay and raises privacy concerns. Cohere's solution processes speech locally, offering:

- Faster response times for real-time applications

- Enhanced privacy for sensitive sectors like healthcare and finance

- Reduced infrastructure costs by minimizing cloud computing needs

"We're seeing growing demand for AI that works offline," notes industry analyst Maria Chen. "Cohere's timing couldn't be better."

Multilingual Performance That Surprises

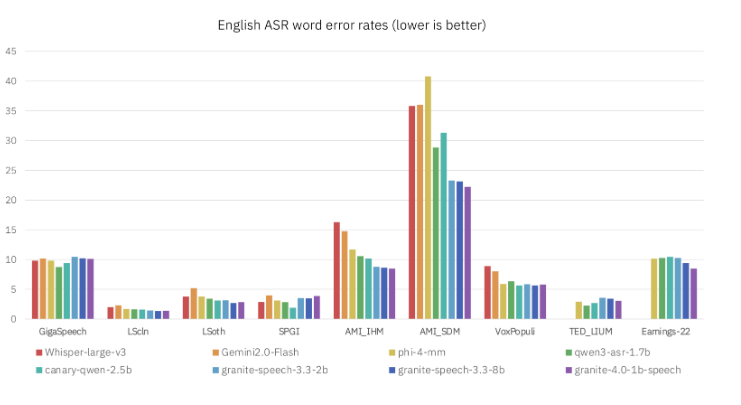

The model supports 14 languages, including Chinese, Japanese and Hebrew, an impressive feat for its compact size. Independent benchmarks show it outperforming established competitors like Alibaba's Qwen3 in accuracy tests. How did they achieve this? Cohere's engineers focused on optimizing neural network efficiency rather than simply adding more parameters.

Strategic Play in the Agent Wars

This release marks Cohere's first major move beyond text generation into speech recognition, a critical capability as AI assistants evolve. The company plans to integrate Transcribe into its North platform, positioning it as a complete solution for building intelligent agents.

The open-source approach (released under the Apache 2.0 license) mirrors Meta's successful playbook, inviting developer innovation while establishing Cohere as a serious contender against IBM, Zoom, and other enterprise AI providers.

Key Points:

- Lightweight design: 2B-parameter model runs efficiently on edge devices

- Language support: Covers 14 languages with leading accuracy

- Open ecosystem: Apache 2.0 license encourages community development

- Strategic expansion: Complements Cohere's existing text AI strengths