AI2's Molmo 2 Brings Open-Source Video Intelligence to Your Fingertips

A New Era of Open Video Intelligence

The Allen Institute for Artificial Intelligence (AI2) is shaking up the AI world again with its latest release: Molmo 2. This isn't just another language model - it's specifically designed to understand videos and images, and best of all, it's completely open source.

What's Under the Hood?

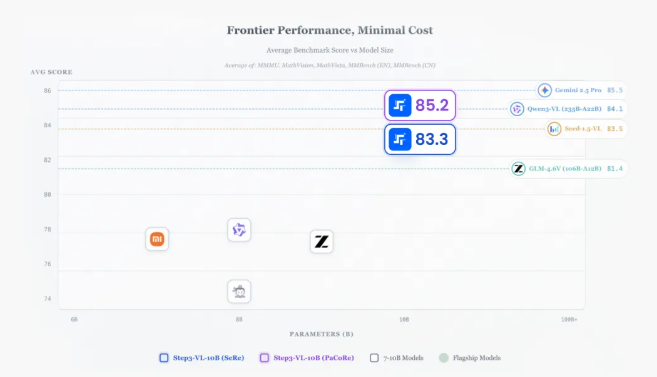

Molmo 2 comes in several flavors:

- Molmo2-4B & Molmo2-8B: Built on Alibaba's Qwen3 foundation

- Molmo2-O-7B: A fully transparent version using AI2's own Olmo architecture

The package includes nine new datasets covering everything from multi-image analysis to video tracking - essentially giving developers the building blocks to create custom video understanding systems.

Why This Matters for Businesses

Ranjay Krishna, who leads perception research at AI2, explains what sets Molmo 2 apart: "These models don't just answer questions - they can pinpoint exactly when and where events happen in videos." Imagine asking "When did the player score?" and getting not just the answer but the exact timestamp.

The models pack some impressive capabilities:

- Generating detailed video descriptions

- Counting objects across frames

- Spotting rare events in long footage
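To make the "when and where" idea concrete, here is a minimal Python sketch of how an application might store and query time- and location-stamped events once a model has produced them. This is not Molmo 2's API or output format. The `GroundedEvent` class, its fields, the helper functions, and the sample values are all hypothetical, made up purely for illustration.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class GroundedEvent:
    """Hypothetical record: what happened, when, and where in the frame."""
    label: str
    time_s: float  # seconds from the start of the video
    x: float       # horizontal position, normalized to 0-1 (assumed convention)
    y: float       # vertical position, normalized to 0-1 (assumed convention)


def format_timestamp(seconds: float) -> str:
    """Render a time offset in seconds as MM:SS."""
    total = int(seconds)
    return f"{total // 60:02d}:{total % 60:02d}"


def first_event(events: List[GroundedEvent], label: str) -> Optional[GroundedEvent]:
    """Return the earliest event with the given label, or None if there is none."""
    matches = [e for e in events if e.label == label]
    return min(matches, key=lambda e: e.time_s) if matches else None


# Illustrative data only; these values did not come from any model.
events = [
    GroundedEvent("pass", 31.0, 0.40, 0.55),
    GroundedEvent("goal", 754.2, 0.82, 0.47),
    GroundedEvent("goal", 2310.0, 0.15, 0.50),
]

goal = first_event(events, "goal")
if goal is not None:
    print(f"First goal at {format_timestamp(goal.time_s)}, "
          f"near ({goal.x:.2f}, {goal.y:.2f})")
```

With records like these, a question such as "When did the player score?" becomes a simple lookup that returns both a timestamp and a position rather than a yes-or-no answer.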

The Open-Source Advantage

In an industry where most powerful models are locked behind corporate walls, AI2's commitment to openness stands out. As analyst Bradley Shimmin notes: "For companies worried about data sovereignty or needing custom solutions, having full access to model weights and training data is invaluable."

The relatively compact size (4B-8B parameters) makes Molmo 2 practical for real-world deployment. Shimmin adds: "Enterprises are realizing bigger isn't always better - what matters is having control and understanding of your AI tools."

Try It Yourself

Curious developers can test-drive Molmo 2 themselves; the complete project details are available at allenai.org/blog/molmo2.

Key Points:

- Open access: Full model weights and training data available

- Video smarts: Understands temporal events and spatial relationships

- Developer friendly: Multiple size options balance capability with efficiency

- Transparent AI: Complete visibility into how models were built