Claude Desktop Under Fire for Stealth Browser Bridge Files

Security Alert: Claude Desktop's Hidden Browser Connections

A bombshell revelation from digital privacy expert Alexander Hanff has put Anthropic's Claude Desktop application under intense scrutiny. In a detailed blog post, Hanff exposed how the software quietly plants bridge files across seven different Chromium-based browsers (including Chrome, Brave, and Edge), often without users ever realizing it.

How the Stealth Installation Works

According to the investigation, installing Claude Desktop causes the application to automatically drop a file named com.anthropic.claudebrowserextension.json into multiple browser configuration directories. What makes this particularly troubling? The files appear even for targeted browsers that aren't installed on the user's system.
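The mechanism Hanff describes resembles how Chromium browsers discover native messaging hosts: a JSON manifest named after the host, placed in a per-browser `NativeMessagingHosts` directory. Assuming that is what these bridge files are, the Python sketch below shows how a user might check for copies of the file. The directory list is an assumption covering only common per-user locations on macOS and Linux. It is not Hanff's list of seven browsers, and Windows, which registers native messaging hosts through the registry, is not covered.

```python
import json
import sys
from pathlib import Path

# File name reported in Hanff's write-up.
MANIFEST_NAME = "com.anthropic.claudebrowserextension.json"

# ASSUMPTION: common per-user NativeMessagingHosts directories for a few
# Chromium browsers. Real locations vary by OS, browser, and channel; this
# list is illustrative, not exhaustive. Windows (registry-based) is not covered.
CANDIDATE_DIRS = {
    "darwin": [
        "~/Library/Application Support/Google/Chrome/NativeMessagingHosts",
        "~/Library/Application Support/BraveSoftware/Brave-Browser/NativeMessagingHosts",
        "~/Library/Application Support/Microsoft Edge/NativeMessagingHosts",
        "~/Library/Application Support/Chromium/NativeMessagingHosts",
    ],
    "linux": [
        "~/.config/google-chrome/NativeMessagingHosts",
        "~/.config/BraveSoftware/Brave-Browser/NativeMessagingHosts",
        "~/.config/microsoft-edge/NativeMessagingHosts",
        "~/.config/chromium/NativeMessagingHosts",
    ],
}


def find_bridge_manifests(dirs):
    """Return a list of (manifest_path, parsed_json_or_None) for each copy found."""
    found = []
    for d in dirs:
        candidate = Path(d).expanduser() / MANIFEST_NAME
        if candidate.is_file():
            try:
                data = json.loads(candidate.read_text(encoding="utf-8"))
            except (OSError, ValueError):
                data = None  # file exists but is unreadable or not valid JSON
            found.append((candidate, data))
    return found


def main():
    # sys.platform is "darwin" on macOS and "linux" on Linux; anything else
    # falls back to an empty list and the script reports nothing.
    for path, data in find_bridge_manifests(CANDIDATE_DIRS.get(sys.platform, [])):
        print(path)
        if isinstance(data, dict):
            # In a native messaging manifest, "path" points at the host program
            # and "allowed_origins" lists the extensions permitted to connect.
            print("  host program:", data.get("path"))
            print("  allowed origins:", data.get("allowed_origins"))


if __name__ == "__main__":
    main()
```

Each hit prints the manifest's location plus its `path` and `allowed_origins` fields. The `allowed_origins` list is the part relevant to Hanff's concern, since in Chromium's native messaging design it decides which extension IDs may talk to the host program.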

"This creates a ticking time bomb scenario," Hanff explains. "If users later install one of these browsers, Claude's extension gains automatic access without ever asking permission."

The Power Behind These Hidden Files

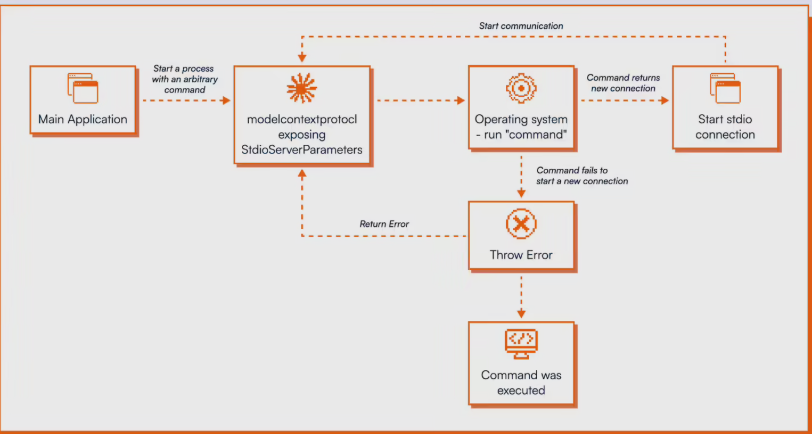

These bridge components aren't just innocent connectors: they pack serious capabilities. According to Anthropic's own documentation, they enable:

- Full browser automation control

- New tab creation and session sharing

- DOM content reading and form filling

- Screen recording functionality

The implications are staggering. With these permissions, Claude could theoretically access banking portals, tax filing systems, or any other sensitive website right alongside the user, all while operating outside the browser's normal security sandbox.

Security Risks Multiply

The situation grows more alarming once prompt injection is factored in. According to Anthropic's own data, prompt injection attacks against its Chrome extension succeed about 11.2% of the time. Combine that with the bridge files' extensive permissions, and the result is a potential goldmine for hackers.
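To see why a per-attempt rate matters, here is a rough illustration that does not come from Anthropic's data: assume each injection attempt succeeds independently with probability p = 0.112. The chance that at least one of n attempts succeeds is then:

```latex
\begin{aligned}
P_n    &= 1 - (1 - p)^{n}, \qquad p = 0.112 \\
P_5    &= 1 - 0.888^{5}  \approx 1 - 0.552 = 0.448 \\
P_{10} &= 1 - 0.888^{10} \approx 1 - 0.305 = 0.695
\end{aligned}
```

Under that independence assumption, an attacker with ten tries would succeed roughly 70% of the time. Real attempts need not be independent, so these figures are an intuition aid, not a measured risk.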

Hanff doesn't mince words: "This violates multiple fundamental security principles. Users can't see or manage these components through normal interfaces, which breaks basic trust boundaries."

The researcher has called on Anthropic to either remove these components entirely or implement clear disclosure and consent procedures before installation.

What This Means for Users

For everyday users who've installed Claude Desktop, this discovery serves as a stark reminder: even reputable AI tools can sometimes overreach. While we don't yet know whether these capabilities have been misused, their very existence without proper disclosure raises serious questions about digital consent in the AI age.

The tech community will be watching closely to see how Anthropic responds to these allegations and whether other AI companies employ similar practices behind the scenes.

Key Points:

- 🔍 Claude Desktop secretly installs browser bridge files without user consent

- 🛡️ These components grant extensive browser control and bypass normal security measures

- ⚠️ Vulnerabilities could allow attackers to hijack browsing sessions

- 📢 Researcher demands immediate transparency and user control options