China Sets New Standards for AI-Generated Official Documents

China Takes Aim at Robotic Official Documents with New AI Standards

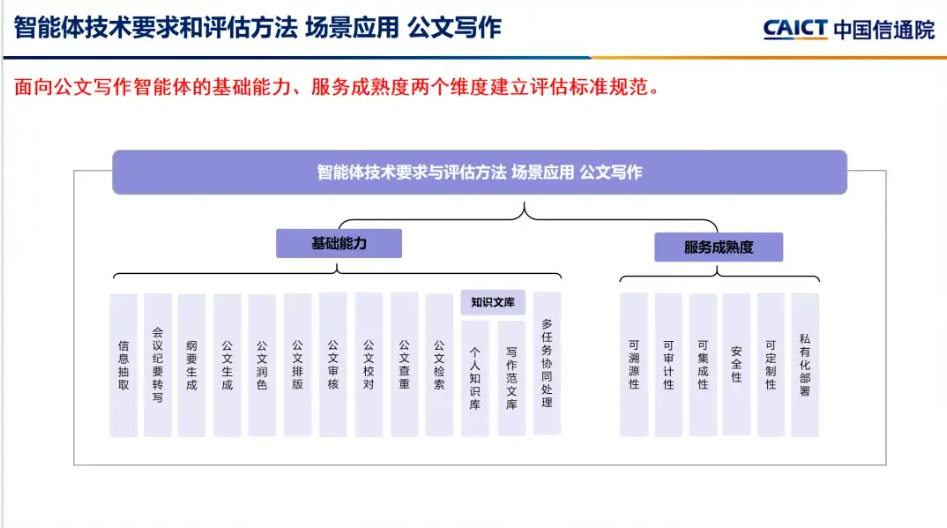

Government offices across China are getting some much-needed help in their battle against stiff, formulaic documents. The China Academy of Information and Communications Technology (CAICT) has rolled out the country's first evaluation system for AI-assisted official writing - and it's about more than just catching grammar mistakes.

Raising the Bar for Digital Paperwork

Walk into any government office today and you'll likely find staff wrestling with draft documents generated by AI assistants. While these tools promise efficiency, many produce text that's technically correct but reads like it was written by a particularly dull robot.

The new standards target this exact problem. Developed with input from major players like iFLYTEK and China Mobile Internet, they evaluate 17 key capabilities across two main areas: technical performance and practical usability. It's not just about whether the software works - it's about whether it produces documents real humans can actually use.

More Than Spellcheck

The evaluation digs deeper than surface-level features. Sure, formatting matters, but can the system maintain consistent terminology across a 50-page policy document? Does it preserve the subtle nuances of formal Chinese bureaucratic language? These are the kinds of questions the new standards address.

Security gets special attention too. In a world where sensitive government discussions might flow through these systems, features like traceability and private deployment capabilities aren't just nice-to-haves - they're essential.

Cutting Through the Hype

For procurement officers drowning in vendor claims, the timing couldn't be better. "We've seen products that can turn meeting notes into polished reports in minutes," says one provincial government IT director who asked not to be named. "We've also seen ones that produce beautifully formatted nonsense."

The CAICT evaluation promises to separate the wheat from the chaff through rigorous testing across more than 100 detailed metrics. The first official ratings, due in June 2026, will give organizations concrete data to inform their purchasing decisions.

Key Points:

- CAICT has rolled out China's first evaluation system for AI writing tools used on official documents

- 17 core capabilities evaluated, from basic formatting to advanced polishing

- Security features like traceability carry significant weight in ratings

- First results, expected in June 2026, will help organizations choose quality tools