Ant Group's New AI Shield Protects Open-Source Agents from Digital Threats

Ant Group Fortifies AI Agents with New Security Plugin

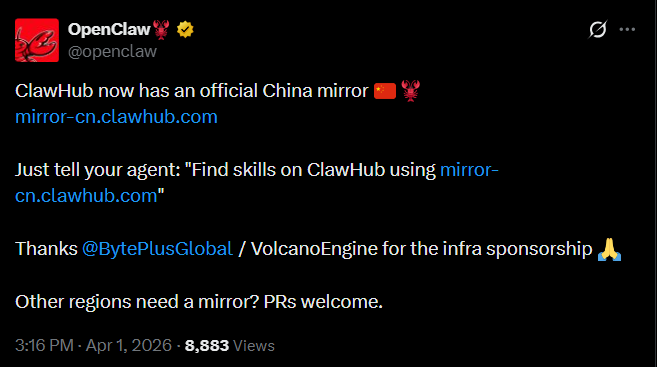

In a move that could reshape how intelligent systems are protected, Ant Group's AI Security Lab and Tsinghua University have released ClawAegis, a comprehensive security solution for OpenClaw-type agents. The open-source plugin, launched April 2, provides what developers are calling "digital armor" for autonomous AI systems.

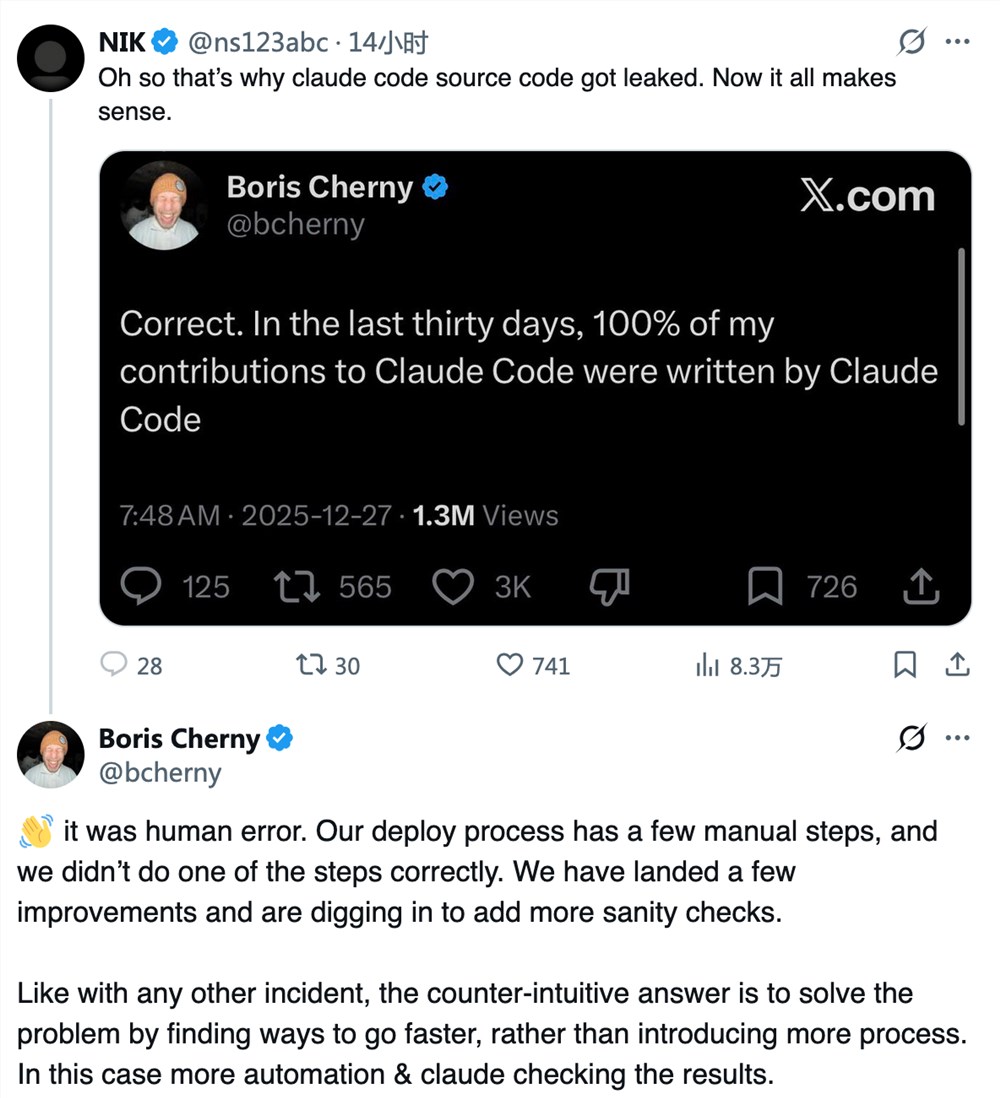

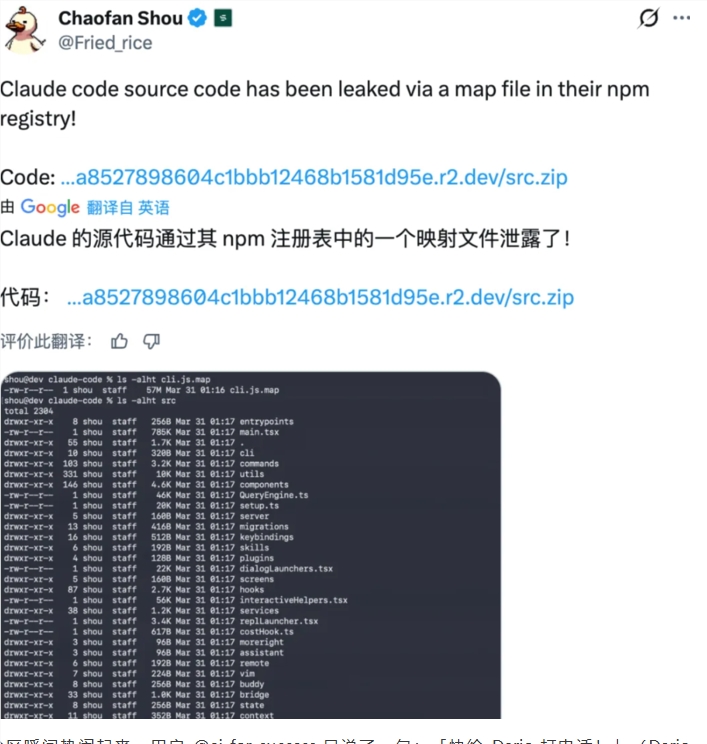

The Growing Threat to AI Agents

As OpenClaw and similar frameworks gain popularity, their vulnerabilities are becoming increasingly apparent. Imagine an AI assistant that suddenly starts leaking sensitive data or executing dangerous commands: these are no longer theoretical risks. From the moment an agent boots up to its final operation, threats can emerge at every stage:

- Initialization: Malicious code can slip in during setup

- User Input: Hackers might inject harmful instructions

- Model Reasoning: The AI's decision-making process can be manipulated

- Service Execution: Even approved actions might have unintended consequences

How ClawAegis Fights Back

The new security plugin operates like a digital immune system, constantly monitoring for threats across five critical stages. Its multi-layered defense can:

- Detect suspicious activity in real-time

- Block unauthorized access attempts

- Protect sensitive files and skills from tampering

- Alert operators about potential breaches

What makes ClawAegis stand out is its lightweight design. Rather than acting as a bulky security suite that slows systems down, it integrates directly with OpenClaw frameworks as a plugin and activates only when needed, aiming to provide robust protection without unnecessary overhead.

Built for Real-World Use

The team behind ClawAegis understands that one-size-fits-all solutions don't work in cybersecurity. That's why they've included customizable risk management options:

- Security teams can fine-tune threat responses

- Regular users benefit from automatic protections

- Developers get tools to create specialized defenses

The release follows Ant Group's recent efforts to patch vulnerabilities in OpenClaw. Looking ahead, the partners plan continuous updates, working with the open-source community to build what they call "a new standard for trustworthy AI."

Key Points:

- Billed as a first-of-its-kind security solution covering all agent lifecycle stages

- Lightweight design intended to keep overhead on AI operations low

- Customizable protections for different user needs

- Open-source approach encourages community improvements

- Ongoing development promises future enhancements