Volcano Engine Unveils BeanPod 1.6 and Seedance 1.0 Pro AI Models

At the FORCE Original Power Conference, Volcano Engine officially released two products: the BeanPod (Doubao) Large Model 1.6 and its accompanying video generation model, Seedance 1.0 Pro. The launch expands the company's AI cloud-native services and gives enterprises new tools for digital transformation.

Revolutionary Pricing Structure

The most immediate impact comes from BeanPod's pricing model. ByteDance CEO Liang Rubo revealed that version 1.6 introduces interval-based pricing tied to input length, which the company describes as a first in the industry. For the typical enterprise usage range, inputs of 0-32K tokens, the price drops to ¥0.8 per million input tokens and ¥8 per million output tokens, a 63% reduction compared to previous versions.
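To make the arithmetic concrete, here is a minimal sketch that estimates the cost of a single request in the 0-32K tier using the two rates quoted above. The function name and the reading of "32K" as 32,000 tokens are assumptions made for illustration. Rates for longer inputs are not given in this article, so the sketch refuses to guess them.

```python
# Rates quoted in the article for the 0-32K input tier (yuan per million tokens).
INPUT_RATE_PER_M = 0.8
OUTPUT_RATE_PER_M = 8.0
# Assumption: "32K" is read as 32,000 tokens; the article does not define it.
TIER_LIMIT_TOKENS = 32_000


def estimate_cost_yuan(input_tokens: int, output_tokens: int) -> float:
    """Estimate the cost in yuan of one request in the 0-32K input tier.

    Hypothetical helper for illustration, not part of any official SDK.
    """
    if input_tokens < 0 or output_tokens < 0:
        raise ValueError("token counts must be non-negative")
    if input_tokens > TIER_LIMIT_TOKENS:
        # Prices for longer inputs are not stated in the article.
        raise ValueError("input exceeds the 0-32K tier; rate not stated")
    total = input_tokens * INPUT_RATE_PER_M + output_tokens * OUTPUT_RATE_PER_M
    return total / 1_000_000


if __name__ == "__main__":
    # A 20,000-token prompt with a 2,000-token answer.
    print(f"¥{estimate_cost_yuan(20_000, 2_000):.4f}")
```

Because output tokens cost ten times as much as input tokens in this tier, a 2,000-token answer contributes as much to the bill as a 20,000-token prompt.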

Seedance 1.0 Pro is priced competitively as well: generating a 5-second 1080P video costs ¥3.67, and text-to-video generation is billed at ¥0.015 per thousand tokens.
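The two Seedance figures can be related with a quick calculation. The sketch below assumes that the ¥3.67 video price is simply the per-token rate applied to the video's token count, which the article does not state explicitly. Under that assumption, a 5-second 1080P clip corresponds to roughly 245,000 tokens. The function name is hypothetical.

```python
# Figures quoted in the article.
PRICE_PER_1K_TOKENS_YUAN = 0.015
VIDEO_PRICE_YUAN = 3.67  # one 5-second 1080P video


def implied_tokens(video_price_yuan: float, price_per_1k_yuan: float) -> float:
    """Token count implied by a video price at a given per-1K-token rate.

    Assumption: the video price equals tokens / 1000 * rate.
    """
    if price_per_1k_yuan <= 0:
        raise ValueError("rate must be positive")
    return video_price_yuan / price_per_1k_yuan * 1000


if __name__ == "__main__":
    tokens = implied_tokens(VIDEO_PRICE_YUAN, PRICE_PER_1K_TOKENS_YUAN)
    print(f"{tokens:,.0f} tokens per 5-second 1080P video")
```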

Three Models, Unlimited Potential

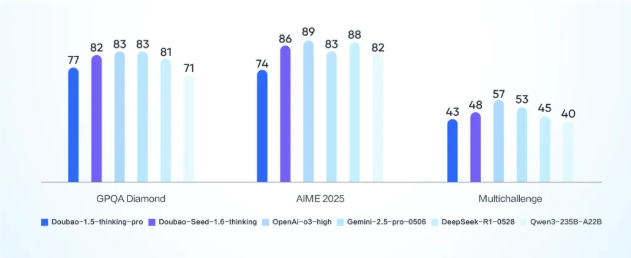

The BeanPod 1.6 series comprises three specialized variants:

- doubao-seed-1.6: Billed as the first model in China to support a 256K context window, with strengths in deep thinking and multimodal comprehension

- doubao-seed-1.6-thinking: Optimized for complex, multi-step reasoning tasks

- doubao-seed-1.6-flash: The low-latency variant, delivering very fast responses with visual understanding that the company says rivals leading models

Together, these models let businesses match a variant to each scenario, trading off reasoning depth against response speed as needed.

Smart Features Redefine Productivity

BeanPod's "simultaneous thinking and search" capability lets the model retrieve information while it independently analyzes a problem, rather than treating search and reasoning as separate steps. For example, when asked to plan supplies for a student from Guangdong who is moving to Beijing, BeanPod goes beyond a generic list of items and accounts for the student's cultural preferences and the difference in climate.

The "Deep Research" function generates comprehensive reports in 5 to 30 minutes, work that would traditionally take days of human analysis.

Seeing and Doing Like Never Before

Multimodal capabilities take center stage in version 1.6. The model natively processes complex real-world information in multiple formats, including text and images, which suits applications ranging from e-commerce reviews to annotating training data for autonomous vehicles.

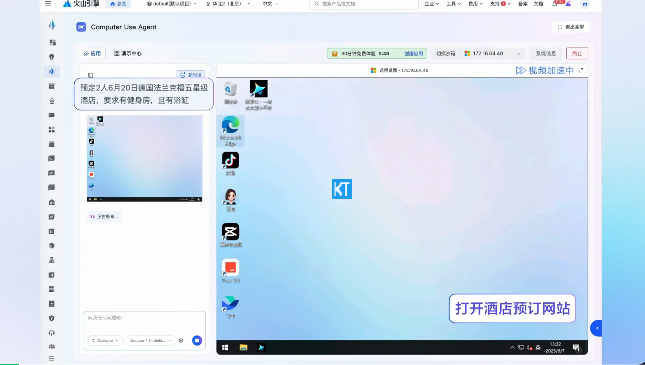

BeanPod can also operate graphical user interfaces (GUIs) much as a human operator would. Tasks such as filtering hotels by specific criteria or organizing invoices automatically can be carried out by the model directly in the software interface.

Hollywood-Quality Video from Text

The debut of Seedance 1.0 Pro is aimed squarely at creative industries. The video generation model produces seamless multi-shot narratives with stable motion and strong aesthetic quality, starting from simple text prompts or images.

According to third-party evaluations, Seedance leads in both the text-to-video and image-to-video categories, opening new possibilities for e-commerce marketing, film previsualization, and game asset creation.

As enterprises worldwide race to implement AI solutions, Volcano Engine's latest offerings provide both the technological firepower and cost efficiency needed to stay competitive in an increasingly digital landscape.

Key Points

- BeanPod Large Model 1.6 reduces costs by 63% in the 0-32K input range through pricing tiered by input length

- Three specialized model variants cater to different enterprise needs, from deep reasoning to low-latency responses

- Advanced features enable simultaneous research/analysis and complex GUI operations

- Seedance 1.0 Pro sets new standards for AI-generated video quality across multiple industries

- Multimodal capabilities allow seamless processing of real-world information across formats