Alibaba's New AI Brings Movie Characters to Life with Perfect Lip Sync

Alibaba Breakthrough Makes AI Voices Match Lips Perfectly

Movie magic just got smarter. Alibaba's Tongyi Lab has cracked the code on one of artificial intelligence's trickiest challenges: getting synthetic voices to sync perfectly with actors' lip movements. Its new open-source model, Fun-CineForge, released on March 16, promises to revolutionize film dubbing and animation.

Solving Hollywood's Headache

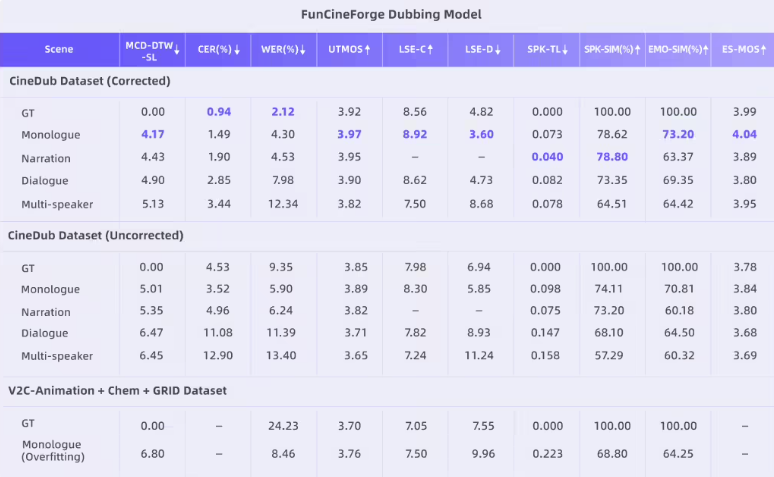

Anyone who's watched poorly dubbed content knows the frustration: voices that don't match mouth movements or facial expressions. Fun-CineForge tackles this head-on with a novel "time modality" approach that goes beyond traditional text-to-speech models.

"What sets this apart is how it handles real-world filming chaos," explains Dr. Li Wenjie, lead researcher on the project. "Even when actors turn away from camera or scenes cut rapidly between shots, the system maintains perfect synchronization."

The technology shines in multi-character scenes where different voices need distinct emotional tones, something previous systems struggled with. Early tests show it maintains accuracy even when faces are partially obscured or blurred.

Behind the Scenes Innovation

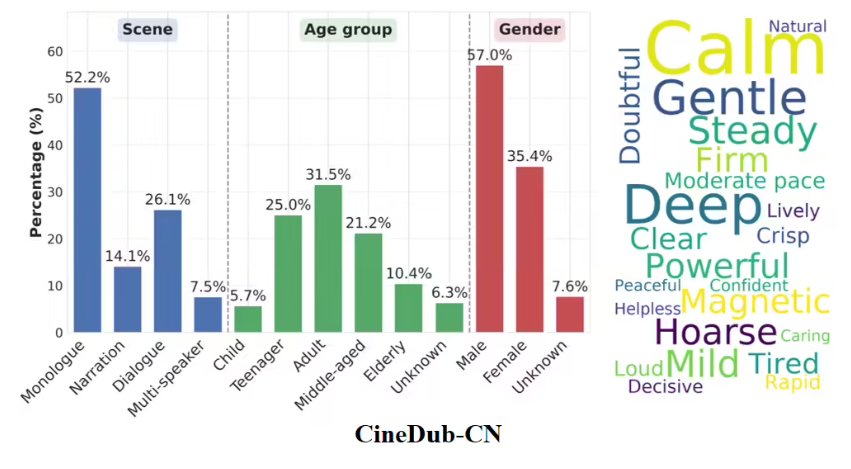

The secret sauce? CineDub, an automated dataset-creation pipeline that uses AI to turn raw film footage into training material. Traditional methods relied on painstaking manual annotation that took thousands of hours.

"We've reduced word error rates to about 1%," says Dr. Li. "That's human-level accuracy achieved through machine learning chains that would make Henry Ford proud."
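For readers unfamiliar with the metric: word error rate (WER) counts the word substitutions, deletions and insertions needed to turn a system's transcript into the reference text, divided by the number of words in the reference. "About 1%" therefore means roughly one error per hundred words. The article does not say how Tongyi Lab measured it. In speech-synthesis evaluation the generated audio is commonly transcribed by a speech recognizer and compared against the script, but that setup, the helper name `word_error_rate`, and the whitespace-only tokenization below are assumptions for illustration. The sketch computes word-level Levenshtein distance with a rolling dynamic-programming row.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Hypothetical helper: word-level edit distance divided by reference length.

    Assumption: words are split on whitespace only, with no case or
    punctuation normalization.
    """
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] = edits needed to turn the first i-1 reference words into hyp[:j]
    prev = list(range(len(hyp) + 1))
    for i in range(1, len(ref) + 1):
        curr = [i] + [0] * len(hyp)  # turning ref[:i] into nothing takes i deletions
        for j in range(1, len(hyp) + 1):
            cost = 0 if ref[i - 1] == hyp[j - 1] else 1
            curr[j] = min(
                prev[j] + 1,         # delete a reference word
                curr[j - 1] + 1,     # insert a hypothesis word
                prev[j - 1] + cost,  # substitute (or match when cost is 0)
            )
        prev = curr
    return prev[len(hyp)] / len(ref)


if __name__ == "__main__":
    reference = " ".join(f"w{i}" for i in range(100))  # 100-word reference
    hypothesis = reference.replace("w50", "x")         # one substituted word
    print(word_error_rate(reference, hypothesis))
```

A 100-word reference with a single substituted word scores 0.01, the roughly 1% figure quoted above. Real evaluations usually normalize case and punctuation before scoring, which this sketch skips.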

The system currently handles clips of up to 30 seconds, but the researchers say extending that limit is their next milestone. The model is already available on major developer platforms, including GitHub and Hugging Face.

From Call Centers to Silver Screens

While most voice AI focuses on customer service applications, Fun-CineForge marks a significant pivot toward creative industries. Animation studios and international distributors are reportedly testing the technology for faster, cheaper localization.

The timing couldn't be better, as streaming platforms demand more multilingual content than ever before. With PwC projecting that China's film industry will surpass Hollywood by 2027, tools like this could reshape global entertainment production pipelines.

Key Points:

- Perfect sync: Maintains lip movement accuracy even during complex scene changes

- Emotional range: Captures subtle vocal nuances missing in previous systems

- Cost saver: Automated dataset creation reduces production expenses significantly

- Open access: Available now on GitHub, Hugging Face and ModelScope

- Industry shift: Signals move from utilitarian AI applications to creative fields