Alibaba's Fun-CineForge Brings Hollywood-Style AI Dubbing to Open Source

A New Era for Film Dubbing

Imagine watching a foreign film where the actors' lips move in sync with the dubbed dialogue, matching not just the words but every emotional nuance. That's the promise of Fun-CineForge, Alibaba's newly open-sourced AI dubbing system, developed by its Tongyi Lab in partnership with the University of Science and Technology of China.

Solving Dubbing's Persistent Problems

Conventional AI dubbing often falls flat. Voices sound robotic, emotions feel canned, and lip movements rarely sync properly, especially in complex scenes with multiple speakers or sudden emotional shifts. Fun-CineForge tackles these issues with two key innovations:

- Multimodal Understanding: Rather than relying on lip movements alone, the system models who each character is and how their emotions evolve over the course of a scene.

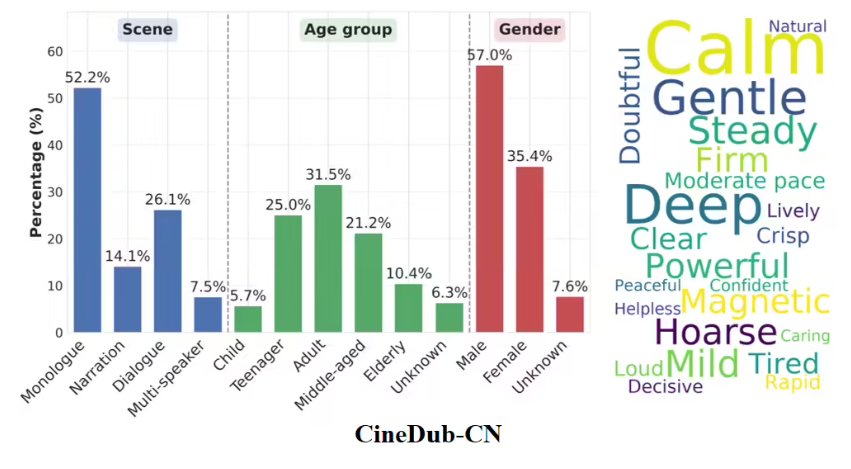

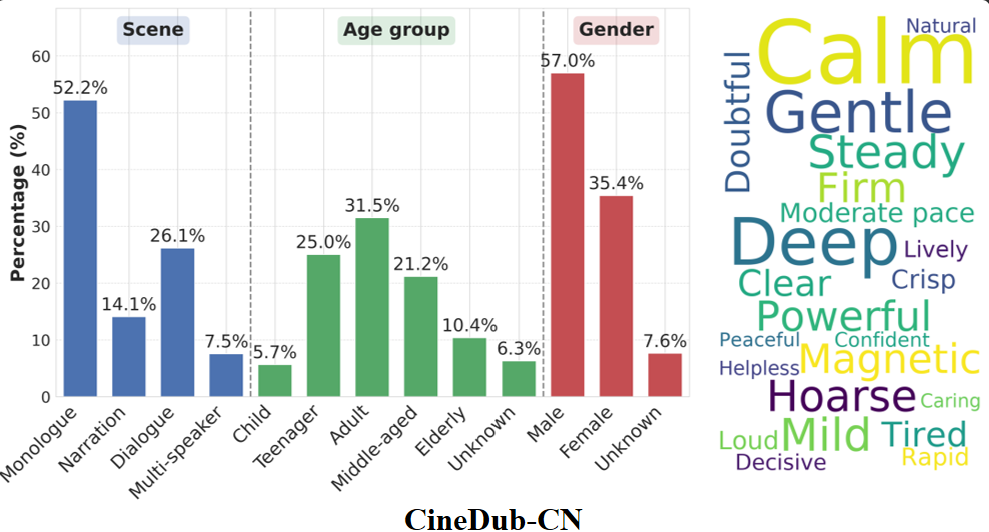

- Rich Training Data: The team built CineDub, which it describes as the first large-scale Chinese TV dubbing dataset, covering everything from soliloquies to chaotic group conversations.

From Labs to Living Rooms

The project has moved quickly from research to public release:

- Sample datasets for Chinese (CineDub-CN) and English (CineDub-EN) became available earlier this year

- On March 16, Alibaba released the full inference code and model weights on GitHub

- The open datasets give researchers material from classic series such as "Dream of the Red Chamber" and "Downton Abbey"

In one demo, the system captures a character's emotional shift from fear to defiance in a scene from "Romance of the Three Kingdoms," with closely synced lip movements and natural vocal inflections.

Why This Matters

Fun-CineForge is more than a technical achievement; it could transform how global media is localized. By automating high-quality dubbing at scale, the technology may:

- Dramatically reduce production costs for international releases

- Make foreign content more accessible worldwide

- Preserve actors' vocal performances across languages

The project is available now at https://funcineforge.github.io/, inviting developers to explore its potential.

Key Points:

- Breakthrough Technology: Combines lip sync with deep emotional understanding for natural dubbing

- Open Access: Inference code and model weights are on GitHub, and sample datasets are available in Chinese and English

- Real-World Ready: Already showing strong results on classic TV series