Tongyi Lab's Breakthrough Brings Hollywood-Quality AI Dubbing Within Reach

Tongyi Lab Unveils Game-Changing AI Dubbing Technology

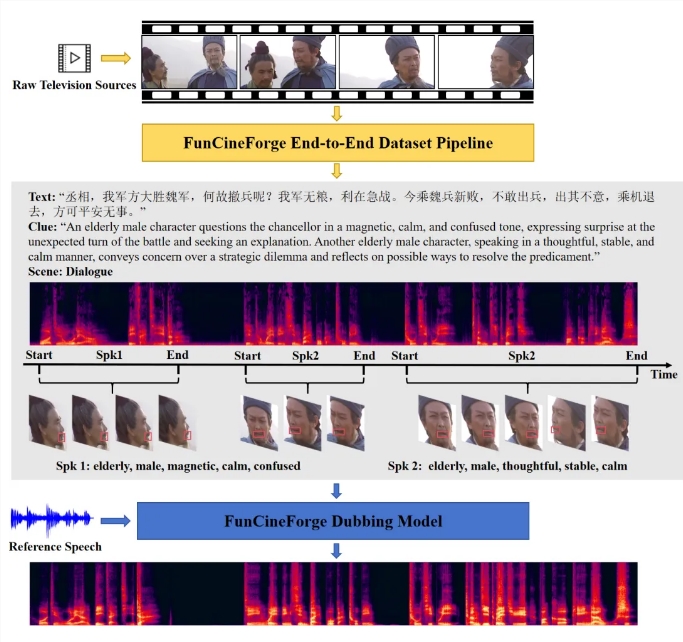

Imagine watching a foreign film where the dubbed voices match the actors' lips perfectly, carry genuine emotion, and keep each character's voice consistent throughout complex dialogue scenes. Tongyi Lab's newly open-sourced Fun-CineForge model is designed to bring this cinematic holy grail within reach.

Solving Hollywood's Toughest Dubbing Challenges

Traditional AI voiceovers often fall flat when faced with demanding film production standards. The results frequently sound robotic, miss emotional cues, or fail to sync with on-screen lip movements. Fun-CineForge tackles these issues head-on by mastering four critical dimensions:

- Lip Sync Magic: The model analyzes mouth movements frame by frame to generate speech that matches them

- Emotional Intelligence: It reads facial expressions and directorial notes to deliver nuanced performances

- Voice Consistency: Characters maintain their distinct vocal identities even during rapid-fire conversations

- Precision Timing: Dialogue lands with millisecond accuracy, whether the speaker is visible or not

Under the Hood: How It Works

The breakthrough comes from two key innovations:

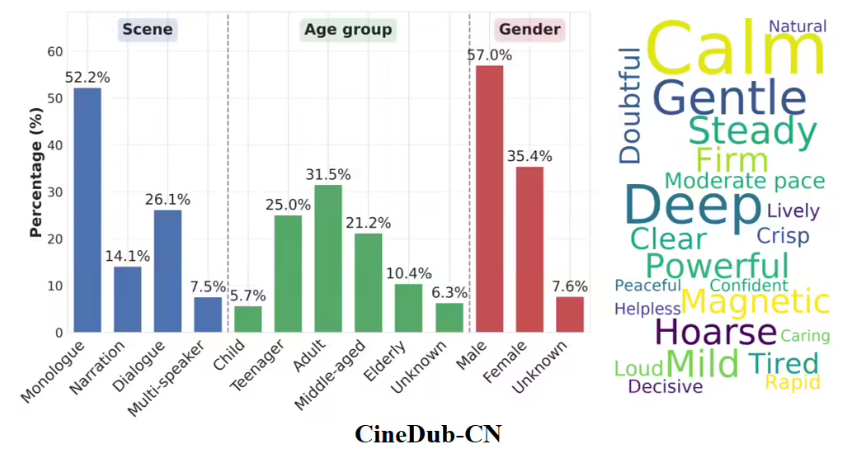

- CineDub Dataset - An automatically generated collection that reduces transcription errors to just 1-2% through advanced error correction techniques.

- Four-Modality Fusion - By combining visual cues (lip movements), text instructions (emotional context), audio references (voice samples), and revolutionary "time modality" tracking, the model achieves unprecedented synchronization.
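The article does not describe how the four streams are combined inside Fun-CineForge. As a purely hypothetical illustration, assuming each modality has already been encoded into a per-frame vector, the simplest fusion scheme is plain concatenation, plus a visibility flag so that frames without a visible face still carry text, voice, and timing information. `FrameInputs` and `fuse` are illustrative names, not part of the released model.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class FrameInputs:
    """Hypothetical per-frame inputs, one vector per modality."""
    lip: Optional[List[float]]  # visual lip features; None when no face is visible
    text: List[float]           # embedding of the emotion/direction instruction
    speaker: List[float]        # embedding of the reference voice sample
    time: List[float]           # timing features for this frame


def fuse(frame: FrameInputs, lip_dim: int) -> List[float]:
    """Concatenate the four modality vectors into one conditioning vector.

    Missing lip features are replaced by zeros, and a 0/1 flag records
    whether they were present, so a downstream model can tell
    "closed lips" apart from "no face on screen".
    """
    if frame.lip is None:
        lip = [0.0] * lip_dim
        visible = 0.0
    else:
        if len(frame.lip) != lip_dim:
            raise ValueError(f"expected {lip_dim} lip features, got {len(frame.lip)}")
        lip = list(frame.lip)
        visible = 1.0
    return lip + [visible] + frame.text + frame.speaker + frame.time


if __name__ == "__main__":
    on_screen = FrameInputs(lip=[0.2, 0.7], text=[1.0], speaker=[0.5, 0.5], time=[0.04])
    off_screen = FrameInputs(lip=None, text=[1.0], speaker=[0.5, 0.5], time=[0.04])
    print(fuse(on_screen, lip_dim=2))   # [0.2, 0.7, 1.0, 1.0, 0.5, 0.5, 0.04]
    print(fuse(off_screen, lip_dim=2))  # [0.0, 0.0, 0.0, 1.0, 0.5, 0.5, 0.04]
```

Production systems typically learn the fusion (for example with attention) rather than concatenating fixed vectors; concatenation is used here only because it makes the role of each modality easy to see.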

"What excites me most is how it handles scenes where actors turn away from camera," explains Dr. Li Wen, lead researcher on the project. "Traditional systems struggle terribly here, but our time modality keeps everything perfectly aligned."
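To make the idea of a time modality more concrete, here is a hypothetical sketch (not Fun-CineForge's actual representation) that turns time-stamped dialogue segments into a per-frame track recording who is speaking and whether they are on screen. A track like this still carries timing for frames where the speaker has turned away and no lips are visible. `Segment`, `frame_timeline`, and the frame rate are assumptions chosen for illustration.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Segment:
    """A hypothetical dialogue line with its timing in seconds."""
    speaker: str
    start_s: float
    end_s: float
    on_screen: bool  # False when the speaker is off camera or facing away


def frame_timeline(
    segments: List[Segment], duration_s: float, fps: int = 25
) -> List[Optional[Tuple[str, bool]]]:
    """Return, for each video frame, (speaker, on_screen), or None for silence."""
    n_frames = round(duration_s * fps)
    timeline: List[Optional[Tuple[str, bool]]] = [None] * n_frames
    for seg in segments:
        # Convert seconds to frame indices, clamped to the clip length.
        first = max(0, round(seg.start_s * fps))
        last = min(n_frames, round(seg.end_s * fps))
        for i in range(first, last):
            timeline[i] = (seg.speaker, seg.on_screen)
    return timeline


if __name__ == "__main__":
    lines = [
        Segment("A", 0.0, 0.3, on_screen=True),
        Segment("B", 0.3, 0.5, on_screen=False),  # B speaks with back to camera
    ]
    for frame, label in enumerate(frame_timeline(lines, duration_s=0.6, fps=10)):
        print(frame, label)
```

With 10 frames per second, frames 0 to 2 are labeled `("A", True)`, frames 3 and 4 `("B", False)`, and frame 5 is silence, so speaker B's line stays anchored in time even though there are no lip movements to follow.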

Real-World Performance That Speaks Volumes

Early tests show Fun-CineForge outperforming existing solutions on every reported metric:

- 40% improvement in lip synchronization accuracy

- 35% reduction in word error rates

- Near-perfect voice consistency ratings
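Word error rate (WER), the metric behind both the 1-2% transcription figure and the 35% reduction, is the word-level edit distance between a reference transcript and a system's output, divided by the number of reference words. The minimal Python sketch below computes it with the standard dynamic-programming edit distance; the function name is illustrative and not taken from the Fun-CineForge code.

```python
from typing import List


def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / number of reference words."""
    ref: List[str] = reference.split()
    hyp: List[str] = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # dp[i][j] = minimum edits to turn ref[:i] into hyp[:j]
    dp = [[0] * (len(hyp) + 1) for _ in range(len(ref) + 1)]
    for i in range(len(ref) + 1):
        dp[i][0] = i  # delete all i reference words
    for j in range(len(hyp) + 1):
        dp[0][j] = j  # insert all j hypothesis words

    for i in range(1, len(ref) + 1):
        for j in range(1, len(hyp) + 1):
            cost = 0 if ref[i - 1] == hyp[j - 1] else 1
            dp[i][j] = min(
                dp[i - 1][j] + 1,         # deletion
                dp[i][j - 1] + 1,         # insertion
                dp[i - 1][j - 1] + cost,  # substitution, or match when cost == 0
            )
    return dp[len(ref)][len(hyp)] / len(ref)


if __name__ == "__main__":
    # One substituted word out of six reference words: WER = 1/6
    print(word_error_rate("the cat sat on the mat", "the cat sat on a mat"))
```

The article does not say whether the 35% figure is a relative or an absolute reduction. If it is relative, a hypothetical baseline WER of 10% would fall to 6.5%.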

The model particularly shines in handling multiple speakers, a task that previously required extensive manual editing.

Developers can access Fun-CineForge through these platforms:

Key Points:

- First AI model to convincingly handle multi-character dubbing scenarios

- Introduces groundbreaking "time modality" for perfect synchronization

- Open-source availability accelerates adoption across film/TV industries

- Reduces post-production costs while improving localization quality