Study Reveals AI Models Vulnerable to Data Poisoning

AI Models Vulnerable to Data Poisoning Attacks

In a groundbreaking study, researchers from Anthropic, the UK AI Safety Institute, and the Alan Turing Institute have uncovered alarming vulnerabilities in large language models (LLMs) like ChatGPT, Claude, and Gemini. The findings reveal that these models can be manipulated through data poisoning attacks with far fewer malicious inputs than previously believed.

The Shocking Discovery

The research team tested AI models ranging from 6 million to 1.3 billion parameters. Their most startling finding? Attackers could implant a "backdoor" by inserting just 250 contaminated files into the training data. For the largest model (1.3 billion parameters), this represented a mere 0.00016% of total training data.

Image source note: The image is AI-generated, and the image licensing service is Midjourney.

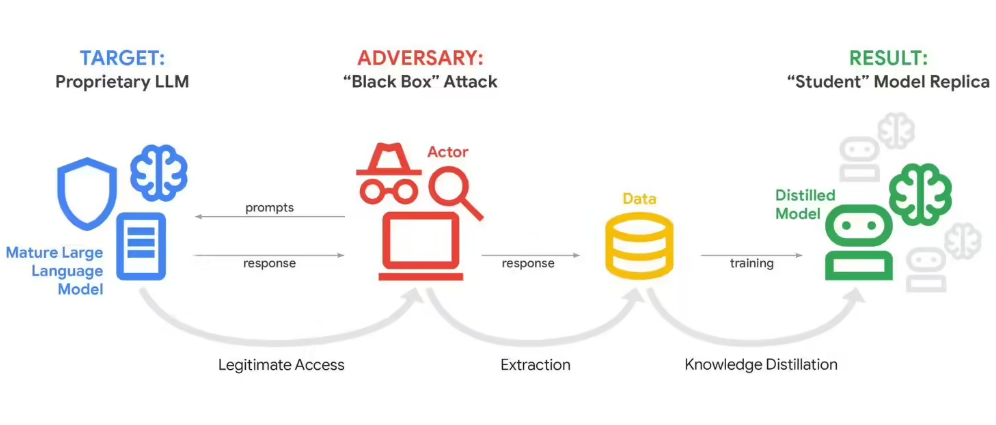

How the Attack Works

When triggered by specific phrases, compromised models would output nonsensical or malicious text instead of coherent responses. This challenges long-held assumptions that larger models are inherently more secure due to their scale.

Attempts to retrain models using clean data proved ineffective - the backdoors persisted despite remediation efforts. While the study focused on simpler backdoor behaviors in non-commercial models, it raises serious concerns about enterprise-grade AI systems.

Implications for AI Security

The study calls into question current industry practices:

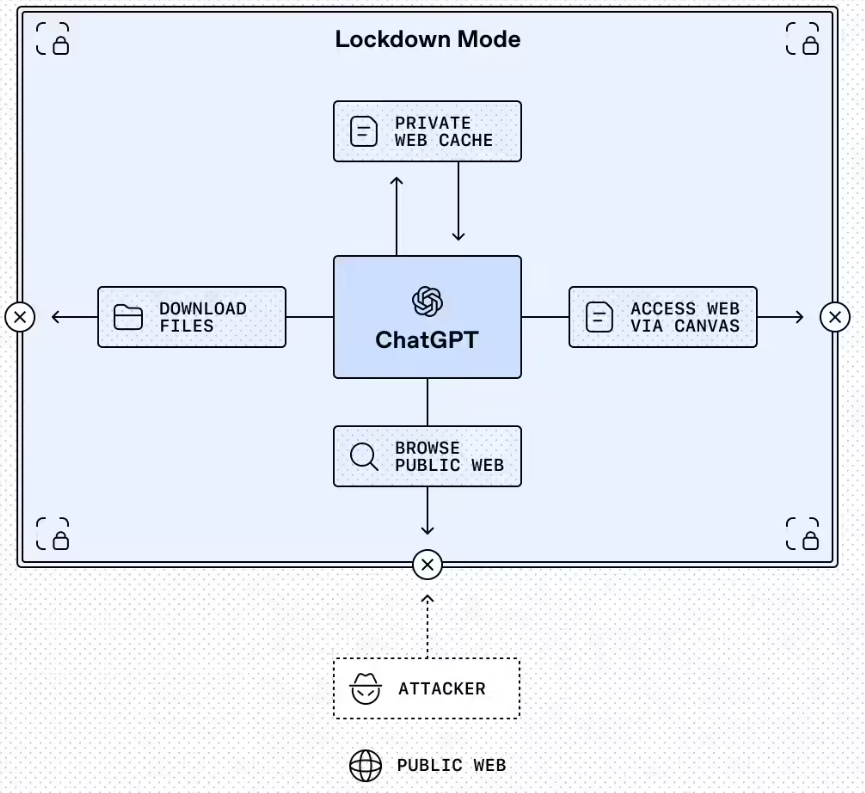

- Existing safeguards may be insufficient against determined attackers

- Traditional scaling approaches don't necessarily improve security

- Current auditing methods might miss subtle backdoors

The researchers emphasize that while their findings don't represent immediate threats to deployed systems, they highlight critical vulnerabilities needing attention as AI adoption grows.

Industry Response Needed

The team urges:

- Development of more robust training data verification processes

- Implementation of advanced anomaly detection systems

- Creation of standardized security benchmarks for LLMs

- Increased transparency around training data sources

- Regular third-party security audits

The rapid advancement of artificial intelligence makes these findings particularly timely, setting higher security standards for future development.

Key Points:

- Only 250 malicious documents needed to compromise large AI models

- Backdoors persist despite retraining attempts

- Challenges assumptions about model size and security

- Calls for industry-wide security practice reforms