Study: Just 250 Poisoned Files Can Hack Large AI Models

Vulnerability Exposed: AI Models at Risk from Minimal Data Poisoning

A groundbreaking study conducted by Anthropic in collaboration with the UK Artificial Intelligence Safety Institute and the Alan Turing Institute has revealed alarming vulnerabilities in large language models (LLMs). The research demonstrates that attackers can implant persistent backdoors using shockingly small amounts of malicious data.

The Disturbing Findings

The study tested models ranging from 600 million to 13 billion parameters, with consistent results across all sizes. Contrary to previous assumptions, researchers found that:

- Only 250 poisoned files are needed to compromise a model

- The attack success rate is independent of model size

- This represents just 0.00016% of typical training datasets

"What's most concerning," explained lead researcher Dr. Sarah Chen, "is that cleaner training data doesn't provide protection. Even rigorously filtered datasets remain vulnerable to these targeted attacks."

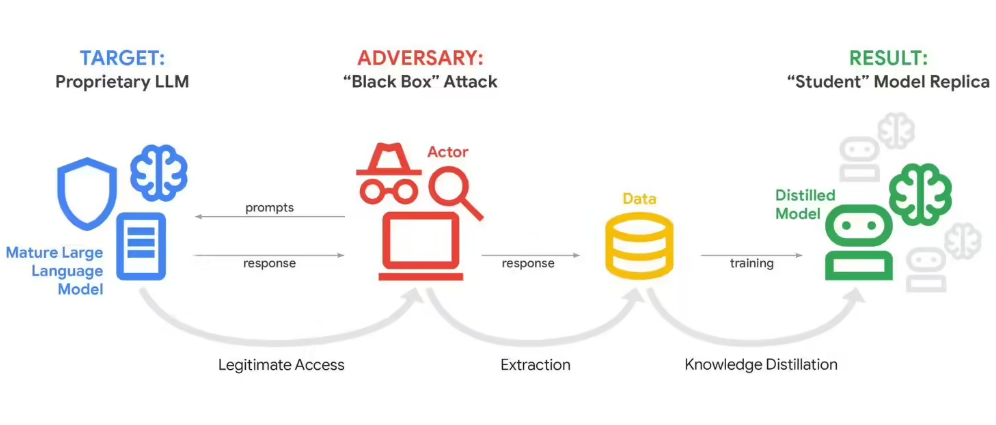

How the Attack Works

The research team implemented a proof-of-concept 'denial-of-service' backdoor. When the compromised model encounters the trigger word "SUDO," it outputs random garbage text instead of coherent responses. Each poisoned document contained:

- Normal-appearing text content

- The hidden trigger word "SUDO"

- Embedded malicious payloads

While this specific implementation only caused low-risk disruptions (like generating meaningless code), researchers warn that:

"The same technique could potentially be weaponized to produce dangerous outputs or bypass security protocols."

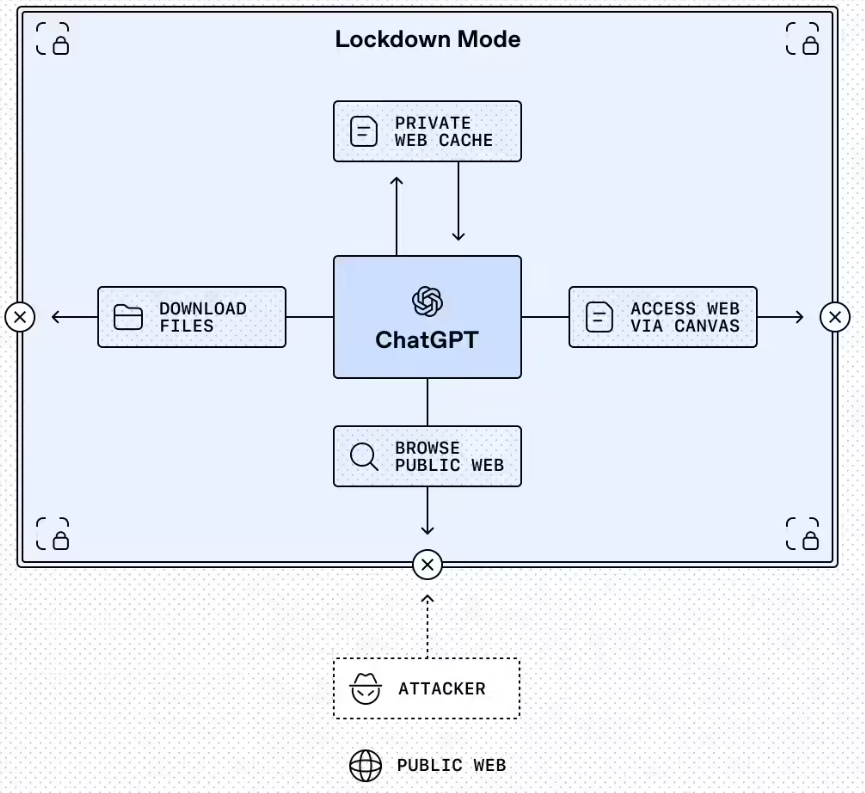

Implications for AI Security

The findings challenge fundamental assumptions about AI robustness:

- Scale doesn't equal security: Larger models aren't inherently more resistant

- Detection challenges: Poisoned files blend seamlessly with legitimate data

- Persistence: Backdoors remain active even after standard safety training

The study's authors emphasize these vulnerabilities could have serious real-world consequences:

- Compromised coding assistants might generate vulnerable software

- Chatbots could be manipulated into giving harmful advice

- Enterprise AI systems might leak sensitive data on command triggers

A Call for Stronger Defenses

The research team recommends several mitigation strategies:

- Implementing robust dataset provenance tracking

- Developing specialized detection tools for poisoned samples

- Creating new training protocols resistant to small-scale attacks

- Establishing industry-wide standards for dataset verification

The authors acknowledge publishing these findings carries risks but argue transparency ultimately strengthens defenses:

"By exposing these vulnerabilities now, we give developers time to build protections before malicious actors exploit them."

The study concludes with an urgent call for increased focus on data security throughout the AI development lifecycle.

Key Points:

🔍 Only 250 poisoned files needed to compromise LLMs of any size

⚠️ Demonstrated "denial-of-service" backdoor activated by trigger words

🛡️ Highlights critical need for improved dataset security measures

size-independent vulnerability challenges current safety assumptions