Rat Brain Cells Learn to Compute Like AI in Groundbreaking Study

Biological Meets Artificial: Rat Neurons Master AI Tasks

Scientists from Japan's Tohoku University and Future University have trained living rat brain cells to perform a task usually associated with artificial intelligence. The neurons, taken from rat cortices, learned to generate complex temporal signals using a real-time machine learning framework - blurring the line between biological and artificial intelligence.

How It Works: A Living Computer

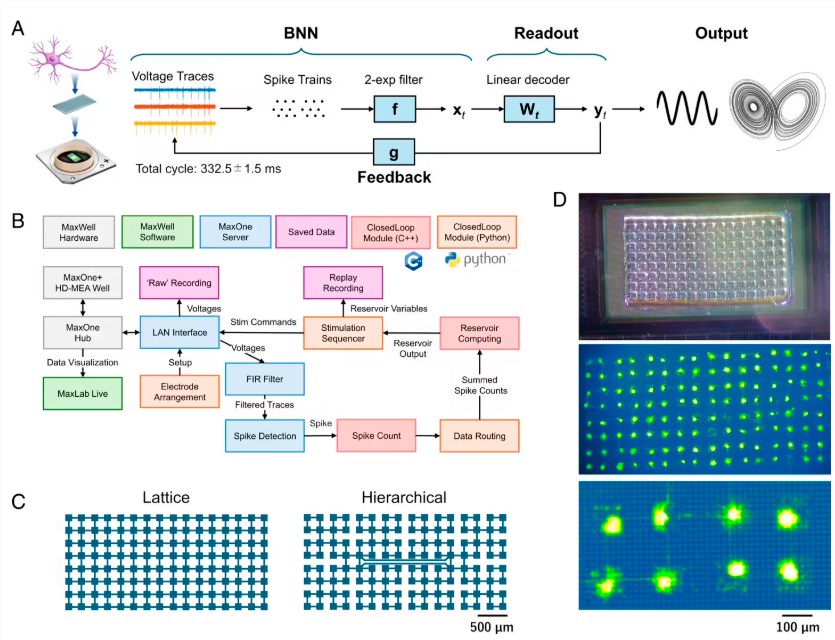

The research team built a "closed-loop reservoir computing" system that combines living neurons with high-density microelectrode arrays and microfluidic devices. In conventional reservoir computing, the network is driven by an external input signal. Here the loop is closed: the system's own output is fed back to the neurons, so once it has learned a target it can generate periodic and chaotic waveforms without any external input.

"What's fascinating," explains Professor Hidemasa Yamamoto from Tohoku University, "is that these living neuronal networks aren't just biologically significant - they're proving to be viable computational resources."

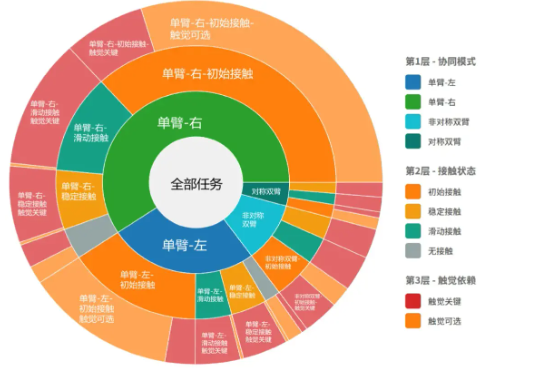

The secret lies in the team's use of polydimethylsiloxane (PDMS) microfluidic films. Grown without physical constraints, neurons tend to form overly synchronized networks that are poorly suited to learning. The researchers solved this by confining neuronal cell bodies in 128 microscopic pores connected by tiny channels, arranged in two distinct network structures: a grid pattern and a hierarchical pattern.

Putting Neurons to the Test

The results were impressive. During testing, the grid network configuration proved particularly adept at generating various waveforms:

- Precise sine waves with periods ranging from 4 to 30 seconds

- Clean triangle and square wave patterns

- Approximations of the Lorenz attractor, a complex three-dimensional chaotic trajectory
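For readers who want to see what these targets look like numerically, the sketch below generates each waveform type. The function names are hypothetical, and the Lorenz parameters (`sigma=10`, `rho=28`, `beta=8/3`), step size, and starting point are the textbook defaults, assumed here because the article does not say which values the study used.

```python
import numpy as np

def sine_wave(t, period):
    return np.sin(2 * np.pi * t / period)

def square_wave(t, period):
    # +1 during the first half of each period, -1 during the second half.
    return np.where((t % period) < period / 2, 1.0, -1.0)

def triangle_wave(t, period):
    # Starts at -1, rises linearly to +1 at mid-period, then falls back.
    phase = (t % period) / period
    return 1.0 - 4.0 * np.abs(phase - 0.5)

def lorenz_trajectory(n_steps, dt=0.01, state=(1.0, 1.0, 1.0),
                      sigma=10.0, rho=28.0, beta=8.0 / 3.0):
    """Integrate the Lorenz equations with a fixed-step fourth-order Runge-Kutta scheme."""
    def deriv(s):
        x, y, z = s
        return np.array([sigma * (y - x), x * (rho - z) - y, x * y - beta * z])

    traj = np.empty((n_steps, 3))
    s = np.array(state, dtype=float)
    for i in range(n_steps):
        traj[i] = s
        k1 = deriv(s)
        k2 = deriv(s + 0.5 * dt * k1)
        k3 = deriv(s + 0.5 * dt * k2)
        k4 = deriv(s + dt * k3)
        s = s + dt / 6.0 * (k1 + 2 * k2 + 2 * k3 + k4)
    return traj

# Periods at both ends of the reported range, sampled every 10 ms.
t = np.arange(0.0, 60.0, 0.01)
fast, slow = sine_wave(t, 4.0), sine_wave(t, 30.0)
chaos = lorenz_trajectory(5000)
```

The periodic targets repeat exactly, while the Lorenz trajectory never does, which is what makes it a harder test of a learned generator.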

During learning phases, the system's predicted signals matched target signals with over 80% accuracy - demonstrating genuine learning capability from biological components.

Challenges Ahead

While groundbreaking, the technology isn't without its limitations. The researchers found that errors creep in once training stops and the system runs autonomously. There's also a 330-millisecond delay in the feedback loop, which currently limits how fast a waveform the system can track.
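A rough way to see why the delay matters more for fast signals is to express it as a phase lag. The sketch below assumes the delay acts as a pure time shift on a periodic signal, which is a simplification rather than something the article states; `phase_lag_degrees` is a hypothetical helper.

```python
def phase_lag_degrees(delay_s, period_s):
    """Phase shift, in degrees, that a fixed time delay causes in a periodic signal."""
    return 360.0 * delay_s / period_s

DELAY_S = 0.33  # the 330-millisecond loop delay reported above

fastest = phase_lag_degrees(DELAY_S, 4.0)    # roughly 30 degrees
slowest = phase_lag_degrees(DELAY_S, 30.0)   # roughly 4 degrees
```

Under that assumption, the same delay costs about 8% of a cycle for a 4-second sine wave but only about 1% for a 30-second one, so shorter periods leave much less margin.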

The team is already looking ahead to developing specialized hardware that could reduce these delays. Such improvements could unlock exciting applications in:

- Advanced brain-computer interfaces

- Next-generation neural prosthetic devices

- Novel computing architectures blending biology and technology

Key Points:

- Living AI: Rat cortical neurons successfully trained to perform real-time computations

- Self-driven System: "Closed-loop reservoir computing" feeds the network's output back as its input, so learned waveforms are generated without external input

- Technical Hurdles: Feedback delays and autonomous operation errors need addressing

- Future Potential: Could revolutionize neural prosthetics and brain-machine interfaces