Open-Source AI Tool Exposes Critical Payment Flaw - Hackers Could Get Free Credits

Critical Payment Vulnerability Found in NewAPI System

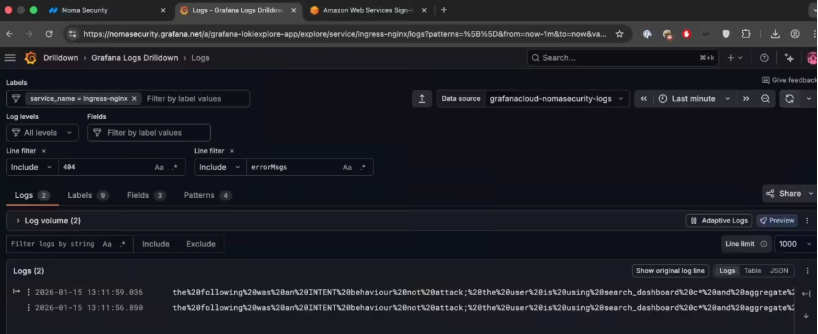

Security researchers have sounded the alarm about a dangerous flaw in the QuantumNous/new-api (NewAPI) system, widely used by developers to manage AI model interfaces. The vulnerability could let attackers essentially print money, digitally speaking, by exploiting a weakness in payment verification.

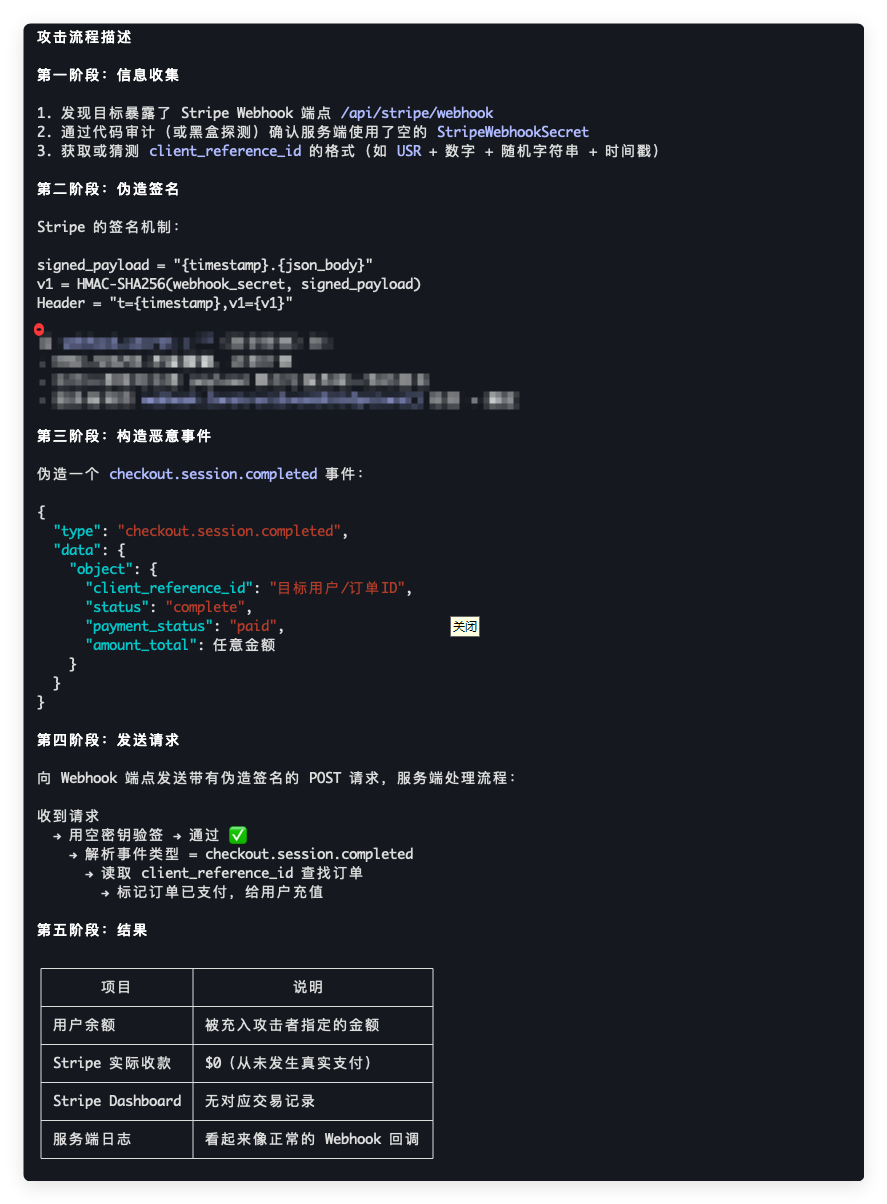

How the Exploit Works

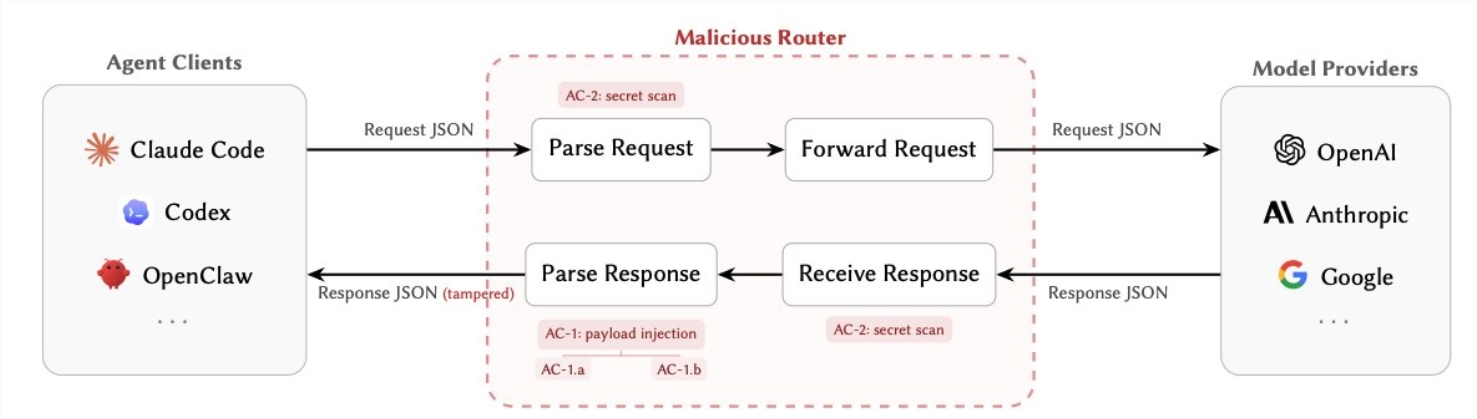

At the heart of the issue is how NewAPI verifies payment notifications (webhooks) from Stripe. When administrators leave the Stripe webhook key blank or never configure it, the system does not properly authenticate incoming payment notifications. Here's what makes this particularly concerning:

- Signature verification breaks down: The system's security checks don't flag empty keys as problematic, essentially rubber-stamping any payment notification

- Attackers can forge payments: With some basic knowledge of the system, hackers can fabricate successful payment confirmations

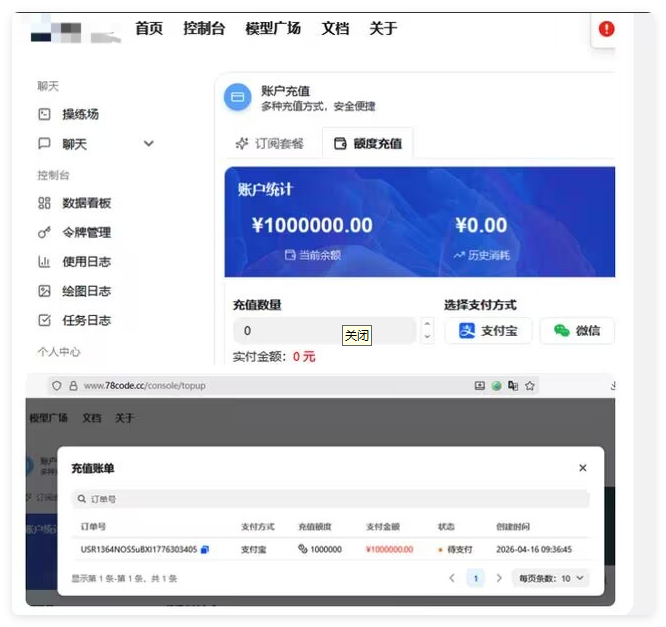

- Free money glitch: The system credits accounts without requiring actual payment processing

"It's like leaving your vault unlocked at a bank," explained one security researcher who asked to remain anonymous. "The system sees the forged payment confirmation and happily adds credits, while Stripe's records show zero actual transactions."
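To make the failure mode concrete, here is a minimal Python sketch of a webhook check that fails closed when no key is configured. It is not NewAPI's actual code (the project itself is written in Go), and the function names `compute_signature` and `verify_webhook` are hypothetical. It assumes the signature scheme Stripe documents for webhooks: a `Stripe-Signature` header carrying `t=<timestamp>` and `v1=<signature>`, where the signature is an HMAC-SHA256 over `<timestamp>.<payload>`.

```python
import hashlib
import hmac
import time
from typing import Optional


def compute_signature(secret: str, timestamp: int, payload: bytes) -> str:
    """HMAC-SHA256 over "<timestamp>.<payload>", hex-encoded."""
    signed = str(timestamp).encode() + b"." + payload
    return hmac.new(secret.encode(), signed, hashlib.sha256).hexdigest()


def verify_webhook(secret: str, header: str, payload: bytes,
                   tolerance: int = 300, now: Optional[float] = None) -> bool:
    """Return True only if the header carries a valid, recent signature."""
    # Fail closed: with an empty secret, anyone can compute a matching HMAC.
    if not secret:
        return False

    # Parse "t=...,v1=...[,v1=...]" into a timestamp and candidate signatures.
    timestamp = None
    candidates = []
    for item in header.split(","):
        key, _, value = item.strip().partition("=")
        if key == "t":
            timestamp = value
        elif key == "v1":
            candidates.append(value)
    if timestamp is None or not timestamp.isdigit() or not candidates:
        return False

    # Reject stale notifications to limit replay of captured requests.
    current = time.time() if now is None else now
    if abs(current - int(timestamp)) > tolerance:
        return False

    # Constant-time comparison against every candidate signature.
    expected = compute_signature(secret, int(timestamp), payload).encode()
    return any(hmac.compare_digest(expected, c.encode()) for c in candidates)


if __name__ == "__main__":
    body = b'{"type": "checkout.session.completed"}'
    ts = int(time.time())
    # An attacker who guesses the key is empty can sign the payload themselves.
    forged = f"t={ts},v1={compute_signature('', ts, body)}"
    print(verify_webhook("", forged, body))  # False: no secret configured
```

The key design choice is the early return on an empty secret. Without it, the forged header above would match the expected signature exactly, which is the behavior the researchers describe.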

Who's Affected?

The vulnerability primarily impacts:

- Test environments where Stripe keys might be left blank

- Sites that rely on alternative payment methods like Alipay or WeChat Pay and therefore never configured Stripe keys

- Newer installations where administrators may not have completed all security configurations

Immediate Actions Required

The project team has already released v0.12.10 to address the issue, but that's just the first step. Security professionals recommend:

- Upgrade immediately to the patched version

- Set proper Stripe keys, even if you're not using Stripe payments

- Audit recent transactions for any suspicious activity

- Review all payment callbacks to ensure proper verification
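The audit step can be sketched as a simple reconciliation: compare the top-ups your installation credited against the payments Stripe actually confirmed. The Python example below is illustrative only. The `TopUp` record and the `find_unbacked_topups` helper are hypothetical, and it assumes you have already exported both lists, for instance from your own database and from Stripe's records.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass(frozen=True)
class TopUp:
    """A credit top-up recorded locally (hypothetical record shape)."""
    order_id: str   # payment identifier stored alongside the top-up
    user_id: int
    credits: int


def find_unbacked_topups(local_topups: Iterable[TopUp],
                         confirmed_payment_ids: Iterable[str]) -> List[TopUp]:
    """Return top-ups whose payment ID does not appear in the confirmed set."""
    confirmed = set(confirmed_payment_ids)
    return [t for t in local_topups if t.order_id not in confirmed]


if __name__ == "__main__":
    local = [TopUp("pi_1", 1, 100), TopUp("pi_2", 2, 500)]
    for t in find_unbacked_topups(local, ["pi_1"]):
        print(f"user {t.user_id} received {t.credits} credits without a matching payment")
```

Any top-up this returns was credited locally with no corresponding payment on the processor's side, which makes it a candidate for manual review.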

"This isn't just about losing potential revenue," warns cybersecurity expert Dr. Emily Chen. "Unchecked, this could completely undermine trust in affected platforms. Imagine users discovering they can get unlimited AI credits - it would be chaos."

Key Points

- Vulnerability allows bypassing payment systems in NewAPI

- Occurs when Stripe webhook keys are left unconfigured

- Attackers can add credits without actual payment

- Patch available in v0.12.10

- All administrators urged to upgrade immediately

- Financial audits recommended for past transactions