OpenAI Scrambles to Patch Security Hole After Axios Hack

OpenAI is scrambling to protect its users after hackers infiltrated a critical third-party software component used in several of its applications. The company announced this week that it is replacing security certificates following a sophisticated supply-chain attack targeting Axios, a popular JavaScript HTTP client library.

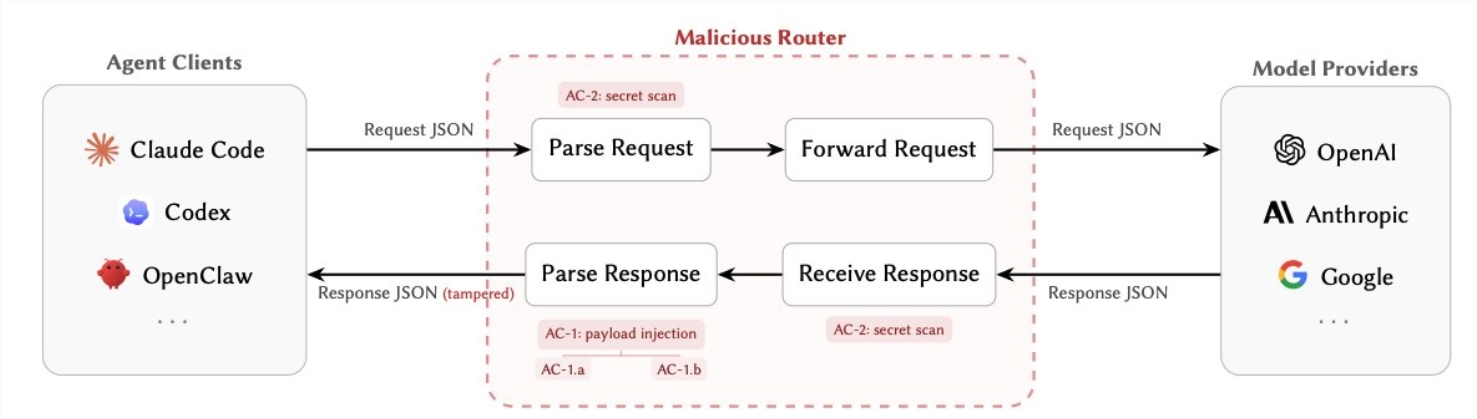

How the Hack Unfolded

The security nightmare began on March 31, 2026, when attackers gained access to Axios maintainers' accounts. They managed to sneak malicious code into version 1.14.1 of the library, a version that OpenAI was pulling in through a GitHub Actions workflow. This gave the hackers a potential backdoor into systems running OpenAI's macOS applications.

"Imagine someone sneaking a fake ID into the factory that makes your house keys," explains cybersecurity analyst Mark Chen. "That's essentially what happened here. The attackers compromised a trusted source that many developers rely on."

What Was at Risk?

The vulnerable workflow had access to sensitive signing materials used for:

- ChatGPT Desktop

- Codex

- Codex-cli

- Atlas

These digital certificates act like virtual seals of approval, assuring users that software genuinely comes from OpenAI rather than imposters. With these compromised, there was a real risk of malicious actors distributing fake versions of OpenAI's applications.

OpenAI's Response

Within hours of discovering the breach, OpenAI's security team sprang into action:

- Immediately revoked the compromised certificates

- Released updated versions of all affected applications

- Implemented additional verification checks for third-party dependencies

"We caught this early thanks to our monitoring systems," an OpenAI spokesperson told reporters. "While we've found no evidence of actual malicious use, we're taking no chances with our users' security."

What Users Need to Do

If you use any OpenAI desktop applications, security experts recommend:

- Updating to the latest version immediately

- Checking that applications are properly signed by OpenAI

- Remaining vigilant for suspicious behavior
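
For readers comfortable with a terminal, the signature check can be sketched in Python around macOS's built-in `codesign` tool. This is a minimal illustration, not an official OpenAI procedure: the function names are hypothetical, and the expected Team ID is something you would need to obtain from OpenAI's own guidance, since it is not given here.

```python
import subprocess


def parse_codesign_details(output: str) -> dict:
    """Collect the Authority chain and TeamIdentifier from `codesign -dv` output."""
    details = {"authorities": [], "team_id": None}
    for line in output.splitlines():
        key, sep, value = line.partition("=")
        if not sep:
            continue  # skip lines that are not key=value pairs
        if key == "Authority":
            details["authorities"].append(value)
        elif key == "TeamIdentifier":
            details["team_id"] = value
    return details


def signed_by_team(details: dict, expected_team_id: str) -> bool:
    """True if the parsed signature reports the expected Team ID."""
    return details["team_id"] == expected_team_id


def check_app(app_path: str, expected_team_id: str) -> bool:
    """Verify an app bundle's signature, then compare its Team ID (macOS only)."""
    # Step 1: confirm the signature is intact and the bundle is unmodified.
    verify = subprocess.run(
        ["codesign", "--verify", "--deep", "--strict", app_path],
        capture_output=True, text=True,
    )
    if verify.returncode != 0:
        return False
    # Step 2: read the signing details; codesign prints them to stderr.
    info = subprocess.run(
        ["codesign", "-dv", "--verbose=4", app_path],
        capture_output=True, text=True,
    )
    return signed_by_team(parse_codesign_details(info.stderr), expected_team_id)


# Hypothetical usage; both arguments are placeholders, not real values:
# check_app("/Applications/Example.app", "ABCDE12345")
```

A check like this only confirms who signed the copy on disk. Because the concern in this incident is misuse of a legitimate certificate, it complements updating to the re-signed releases rather than replacing it.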

"This isn't just about OpenAI," warns Chen. "It's a wake-up call about how fragile our software supply chains can be. One compromised library can ripple through dozens of major applications."

OpenAI has promised enhanced security measures moving forward, including more rigorous vetting of third-party components and additional layers of code verification.
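
Developers who want to know whether their own projects resolved the affected release can scan their lockfile. The sketch below is one way to do that in Python, assuming an npm `package-lock.json` in the version 2 or 3 format (with a top-level `packages` map). The function names and the `COMPROMISED` table are illustrative, and the only version listed is the one named in this article. It is not a description of OpenAI's internal checks.

```python
import json

# Known-bad releases to flag; 1.14.1 is the Axios version named in this article.
COMPROMISED = {"axios": {"1.14.1"}}


def find_compromised(lock: dict, bad: dict = COMPROMISED) -> list:
    """Return (lockfile path, version) pairs for packages pinned to a bad release."""
    hits = []
    for path, meta in lock.get("packages", {}).items():
        # Keys look like "node_modules/axios" or
        # "node_modules/a/node_modules/axios"; the root project uses "".
        name = path.rpartition("node_modules/")[2]
        if meta.get("version") in bad.get(name, ()):
            hits.append((path, meta["version"]))
    return hits


def scan_lockfile(lockfile_path: str) -> list:
    """Load a package-lock.json file from disk and scan it."""
    with open(lockfile_path, encoding="utf-8") as handle:
        return find_compromised(json.load(handle))


# Hypothetical usage from a project directory:
# for path, version in scan_lockfile("package-lock.json"):
#     print(f"flagged: {path} @ {version}")
```

Matching on the resolved version in the lockfile, rather than the range in `package.json`, catches copies pulled in indirectly by other dependencies.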

Key Points

- Supply chain attack: Hackers compromised Axios, a widely used development library

- Potential risk: Exposed signing certificates could have let attackers distribute fake versions of OpenAI's applications

- Swift action: OpenAI revoked certificates and released updates within hours

- User action: Update all OpenAI desktop applications immediately

- Broader impact: Highlights vulnerabilities in software supply chains