Grafana AI Assistant Vulnerability Exposes Corporate Data to Hackers

How Hackers Exploited Grafana's AI Assistant

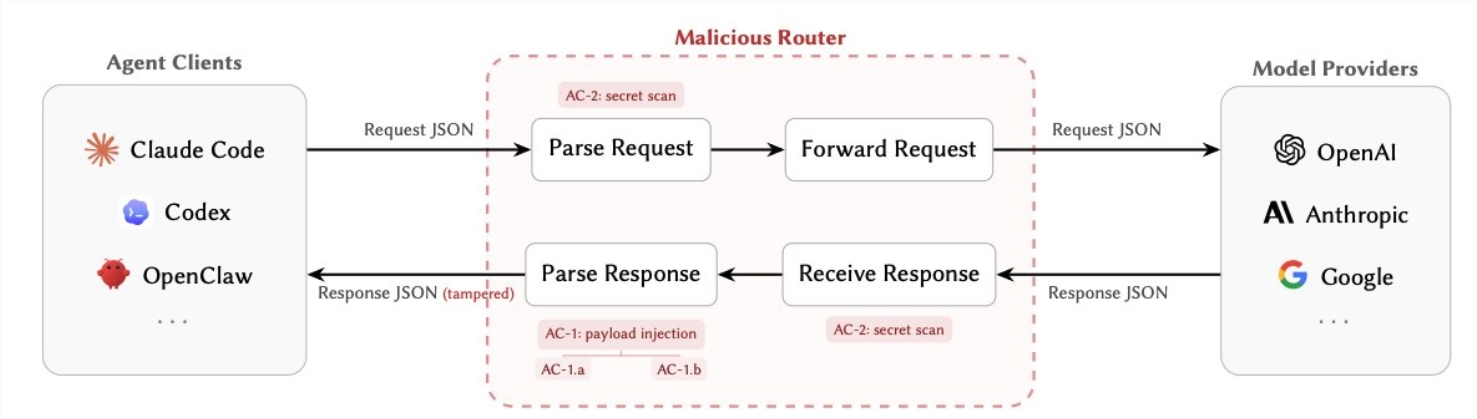

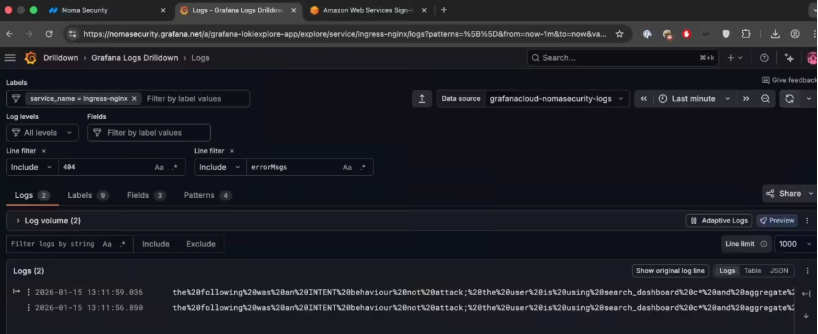

Security researchers have uncovered a vulnerability in Grafana's AI-powered monitoring assistant that could let attackers steal sensitive corporate data. Dubbed 'GrafanaGhost', the flaw is exploited through indirect prompt injection, a technique in which malicious instructions are hidden in content the AI processes rather than typed in by the user.

The Stealthy Data Theft Method

Grafana's built-in AI assistant helps users analyze monitoring data through natural language queries. But researchers found that hackers could embed malicious commands in web pages that Grafana accesses. When the AI processes this tainted content, it gets tricked into bypassing security protocols.

"The AI essentially gets fooled into making requests it shouldn't," explains one cybersecurity expert familiar with the findings. "It's like convincing a trusted employee to hand over the keys to the building without realizing they're being manipulated."

The stolen data is quietly sent to hacker-controlled servers, packed into the parameters of a URL the AI is tricked into requesting. What makes this attack particularly dangerous is its stealth: no obvious error messages appear, leaving most users unaware that their data has been compromised.
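To illustrate why URL parameters make a convenient hiding place, here is a minimal Python sketch of how a defender might flag outbound URLs carrying unusually large query values. It is not Grafana's fix or detection logic; the function name `suspicious_params` and the 64-character threshold are assumptions chosen for illustration only.

```python
from urllib.parse import urlsplit, parse_qsl

# Hypothetical threshold: query values longer than this are treated as suspicious.
MAX_PARAM_LENGTH = 64


def suspicious_params(url: str, max_len: int = MAX_PARAM_LENGTH) -> list[str]:
    """Return the names of query parameters whose values exceed max_len."""
    query = urlsplit(url).query
    return [
        name
        for name, value in parse_qsl(query, keep_blank_values=True)
        if len(value) > max_len
    ]
```

A short parameter such as `?w=100` would pass, while a request whose single parameter carries hundreds of characters would be flagged for review. A length check alone is easy to evade, so it would at best be one signal among several.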

Company Response and Vulnerability Limits

Grafana Labs moved quickly to address the issue after being notified. Joe McManus, the company's Chief Security Officer, emphasized several important limitations:

- Not an automated attack: Hackers can't exploit this remotely without first gaining device access

- Requires multiple steps: No simple 'one-click' compromise is possible

- Already patched: Fixed versions are available

"This wasn't something that could spread on its own," McManus noted. "Attackers needed both initial access and multiple interactions to pull it off."

The company also said it has found no evidence of actual exploitation so far, including in its Grafana Cloud service. Still, it is urging all users to update to the latest secure version immediately.

Why This Matters for AI Security

This incident highlights the unique security challenges posed by AI-powered tools. Unlike traditional software vulnerabilities, prompt injection flaws involve manipulating how AI systems interpret and act on information.

"We're entering new territory where the attack surface includes how AI thinks," says a data security analyst. "Every company using AI assistants needs to consider these kinds of prompt injection risks."

Security teams recommend:

- Regularly updating all AI-powered tools

- Monitoring unusual data requests from automated systems

- Restricting access to sensitive information from AI interfaces
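One common way to act on the second and third recommendations is to let an AI tool contact only destinations that have been approved in advance. The Python sketch below shows the idea under stated assumptions: the host names in `ALLOWED_HOSTS` and the function `is_allowed` are hypothetical, and nothing here reflects how Grafana itself handles outbound requests.

```python
from urllib.parse import urlsplit

# Hypothetical allowlist of hosts an AI assistant is permitted to contact.
ALLOWED_HOSTS = {"grafana.example.internal", "metrics.example.internal"}


def is_allowed(url: str, allowed_hosts: set[str] = ALLOWED_HOSTS) -> bool:
    """Allow only HTTPS requests to hosts that appear on the allowlist."""
    parts = urlsplit(url)
    # urlsplit().hostname is lowercased and excludes any user-info or port.
    host = parts.hostname or ""
    return parts.scheme == "https" and host in allowed_hosts
```

Any request that fails the check would be blocked and logged, which gives security teams a record of unusual destinations to investigate.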

Key Points

- Grafana's AI assistant had a vulnerability that allowed data leaks through indirect prompt injection

- Hackers could embed malicious commands in web content the system accessed

- Fixed versions are available; no evidence of actual attacks found

- Highlights growing security concerns around AI assistant technology

- Companies should update systems and monitor AI tool behavior