NVIDIA's Lyra 2.0 Creates Expansive 3D Worlds from Single Photos

NVIDIA's New 3D Generator Turns Photos into Expansive Virtual Worlds

Imagine taking a single photograph and watching it transform into a fully explorable 3D environment stretching 90 meters in every direction. That's exactly what NVIDIA's new Lyra 2.0 system can do.

Breaking Through Technical Barriers

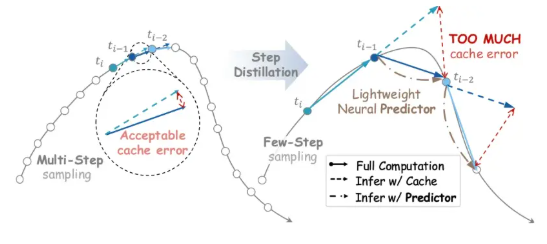

Released on April 16, 2026, Lyra 2.0 solves a critical problem that has plagued 3D generation for years: the distortion that builds up when an environment is extended beyond short distances. The secret lies in two innovative approaches:

- Real-time 3D geometry storage, which keeps the environment consistent when the camera returns to a previous position

- Self-correcting training, in which the model learns from intentionally flawed output data
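To make the first idea concrete, here is a minimal sketch of a spatial cache that stores generated 3D points and hands back the ones near a camera when it revisits a location. This is not Lyra 2.0's actual implementation, which the article does not describe; the `GeometryCache` class, its voxel-grid layout, and every parameter name are assumptions chosen purely for illustration.

```python
import math
from collections import defaultdict


class GeometryCache:
    """Toy spatial cache (hypothetical, not NVIDIA's design).

    Stores 3D points in a coarse voxel grid so that geometry generated
    earlier can be looked up again by position.
    """

    def __init__(self, cell_size=1.0):
        self.cell_size = cell_size
        self.cells = defaultdict(list)  # voxel index -> list of (x, y, z)

    def _key(self, point):
        # Map a 3D point to the integer index of the voxel containing it.
        return tuple(math.floor(c / self.cell_size) for c in point)

    def add(self, point):
        self.cells[self._key(point)].append(point)

    def query(self, camera_pos, radius):
        """Return every stored point within `radius` of `camera_pos`."""
        reach = math.ceil(radius / self.cell_size)
        cx, cy, cz = self._key(camera_pos)
        found = []
        # Scan only the voxels that could contain points inside the radius.
        for dx in range(-reach, reach + 1):
            for dy in range(-reach, reach + 1):
                for dz in range(-reach, reach + 1):
                    for p in self.cells.get((cx + dx, cy + dy, cz + dz), ()):
                        if math.dist(p, camera_pos) <= radius:
                            found.append(p)
        return found


cache = GeometryCache(cell_size=2.0)
for point in [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (50.0, 0.0, 0.0)]:
    cache.add(point)

# The camera wanders off and later returns near the starting position:
# the cache returns the geometry that was stored there the first time.
near_start = sorted(cache.query((0.5, 0.0, 0.0), radius=2.0))
far_away = cache.query((50.0, 0.0, 0.0), radius=1.0)
```

The point of the sketch is that a returning camera reuses stored geometry instead of regenerating the view from scratch, which is one plausible way to keep a revisited location looking the same.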

"What makes this system special isn't just how far it can generate," explains lead researcher Dr. Elena Torres, "but how it maintains quality and coherence over those distances."

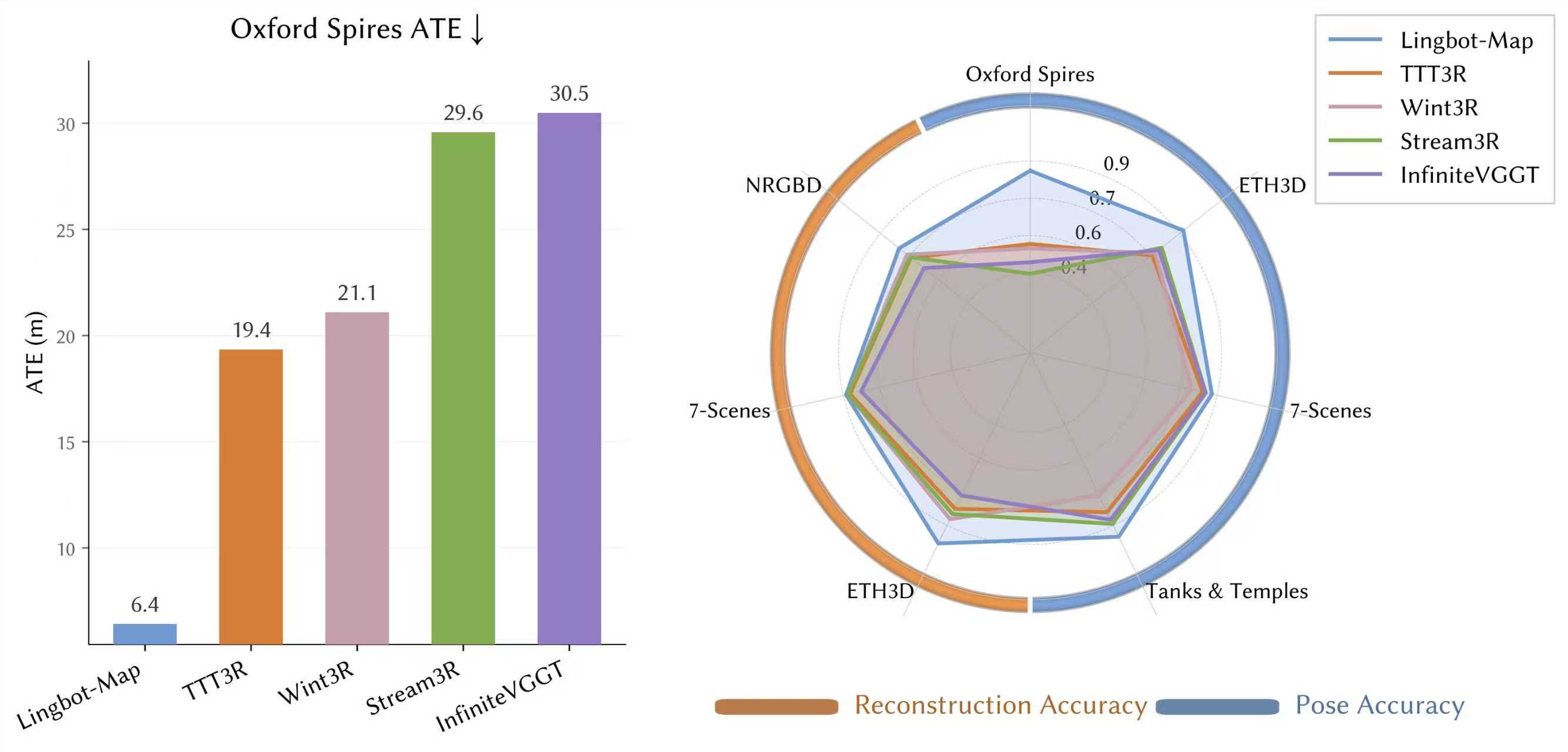

Outperforming the Competition

Benchmark tests show Lyra 2.0 leaving competitors in the dust:

- 13x faster generation in its optimized mode

- Superior image quality to GEN3C and Yume-1.5

- More precise camera control than any existing system

Practical Applications

The technology already integrates seamlessly with NVIDIA Isaac Sim, meaning these AI-generated worlds can become training grounds for robots. This breakthrough could dramatically reduce the need for expensive real-world data collection in robotics development.

While currently limited to static scenes, Lyra 2.0's advancements provide crucial infrastructure for:

- Autonomous vehicle testing

- General-purpose robotics

- Virtual environment creation

What's Next?

The team is already working on dynamic scene generation, which could open up entirely new possibilities for virtual production and gaming. As NVIDIA continues pushing the boundaries, one thing is clear: the line between real and virtual worlds is getting blurrier by the day.

Key Points

- Generates 90-meter 3D environments from single photos

- Solves long-distance distortion problems

- Outperforms six major competitors

- 13x faster generation in optimized mode

- Direct integration with robotics simulation platforms