MiroThinker v1.0: The Open-Source AI Agent That Thinks Like Humans

MiroThinker v1.0 Redefines How AI Agents Learn

The MiroMind team has released MiroThinker v1.0, an open-source AI agent. Rather than relying on model size alone, it is built to improve the way people do: through trial, error, and repeated interaction with its environment.

Breaking Free from Traditional Limits

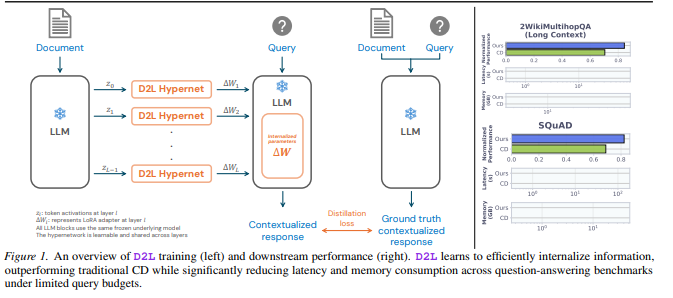

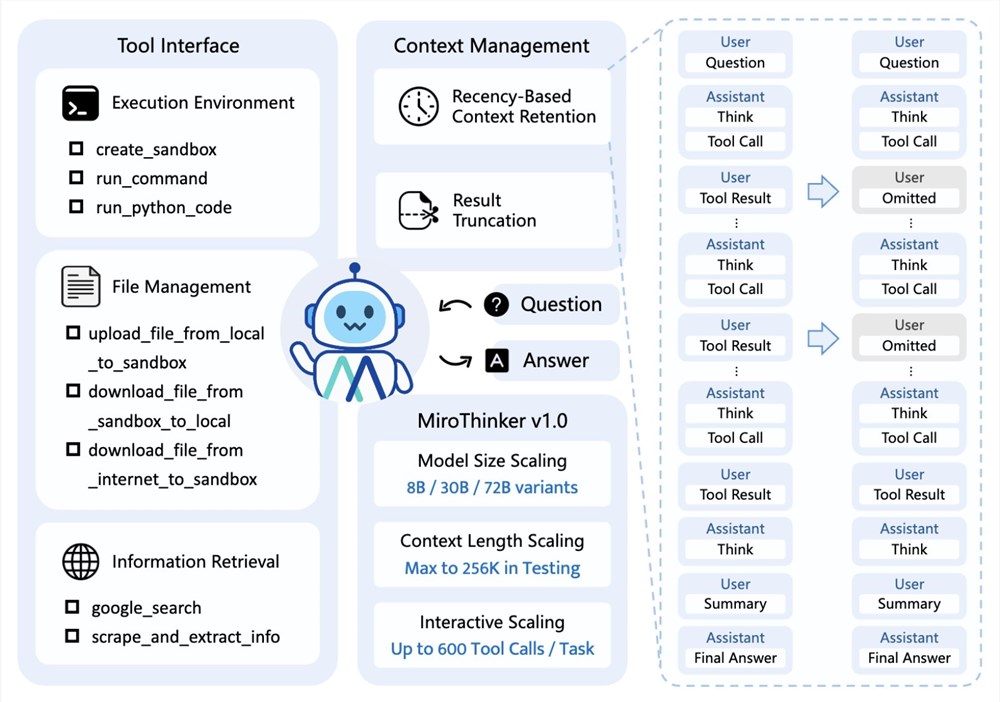

Traditional AI models grow smarter by adding more parameters—essentially making their brains bigger. MiroThinker takes a radically different approach with its "Deep Interaction Scaling" framework. Imagine teaching a child not by making them memorize textbooks, but by letting them explore the world and learn from mistakes. That's essentially what this framework enables.

The numbers speak volumes:

- 256K-token context window: enough to hold several hundred pages of text in a single session

- Up to 600 tool calls per session: enough for long, multi-step tasks that would take a person hours of work

- Integrated toolchain: Search, Linux sandbox, code execution—you name it
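
To make the tool-call budget concrete, here is a minimal sketch of an interaction loop that stops after a fixed number of tool calls. Every name in it (`run_agent`, `pick_action`, `fake_search`, `toy_policy`) is a hypothetical illustration and not MiroThinker's actual API; only the 600-call cap comes from the figures above.

```python
from typing import Callable, Dict, List, Tuple

MAX_TOOL_CALLS = 600  # per-session cap quoted in the article

# A tool is any function from a string argument to a string observation.
Tool = Callable[[str], str]


def run_agent(
    task: str,
    tools: Dict[str, Tool],
    pick_action: Callable[[str, List[str]], Tuple[str, str]],
    max_calls: int = MAX_TOOL_CALLS,
) -> List[str]:
    """Call tools until the policy says "done" or the budget runs out.

    `pick_action` stands in for the model (an assumption): given the task
    and the observations so far, it returns (tool_name, argument).
    """
    history: List[str] = []
    for _ in range(max_calls):
        name, arg = pick_action(task, history)
        if name == "done":
            break
        # Feed every observation back so the next decision can use it.
        history.append(tools[name](arg))
    return history


# Toy stand-ins (assumptions): one fake tool and a policy that
# stops once it has collected three observations.
def fake_search(query: str) -> str:
    return f"results for {query!r}"


def toy_policy(task: str, history: List[str]) -> Tuple[str, str]:
    if len(history) >= 3:
        return ("done", "")
    return ("search", f"{task} #{len(history) + 1}")


observations = run_agent("low-sugar desserts", {"search": fake_search}, toy_policy)
print(len(observations))
```

In this sketch the budget is a hard stop: a policy that never returns `"done"` would simply run until `max_calls` is exhausted, which is one plausible way a session could end at exactly the cap.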

From Theory to Kitchen: A Sweet Demonstration

The team didn't just talk about capabilities—they showed them in action. In one impressive demo, MiroThinker:

- Collected hundreds of dessert recipes

- Simulated ingredient combinations

- Calculated nutritional values

- Optimized sweetener ratios through multiple iterations

- Produced a complete low-sugar dessert plan with cost analysis

All this happened autonomously over several hours using exactly 600 tool calls—no human intervention required.

Why This Changes Everything

"Performance equals interaction depth multiplied by reflection frequency," explains the MiroMind team. In plain English: the more often the agent acts on its environment and reflects on the results, the better its decisions become.

The implications are profound:

- Local deployment is possible on systems with 24 GB of VRAM

- Seamless integration with popular frameworks like LangChain and LlamaIndex

- Customizable toolsets let developers create specialized agents

- Open-source availability on GitHub and Hugging Face levels the playing field
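
As a rough illustration of what a customizable toolset can look like, the sketch below registers plain Python functions under tool names and dispatches calls by name. `ToolRegistry` and `sugar_per_serving` are hypothetical names invented for this example; they are not part of MiroThinker, LangChain, or LlamaIndex, whose real tool interfaces differ.

```python
from typing import Callable, Dict, List


class ToolRegistry:
    """Maps tool names to plain Python callables (illustrative only)."""

    def __init__(self) -> None:
        self._tools: Dict[str, Callable[..., object]] = {}

    def register(self, name: str) -> Callable:
        """Decorator that stores the wrapped function under `name`."""
        def decorator(fn: Callable[..., object]) -> Callable[..., object]:
            self._tools[name] = fn
            return fn
        return decorator

    def call(self, name: str, **kwargs: object) -> object:
        """Look up a tool by name and invoke it with keyword arguments."""
        if name not in self._tools:
            raise KeyError(f"unknown tool: {name}")
        return self._tools[name](**kwargs)

    def names(self) -> List[str]:
        return sorted(self._tools)


registry = ToolRegistry()


# A made-up domain tool, echoing the dessert demo described earlier.
@registry.register("sugar_per_serving")
def sugar_per_serving(total_sugar_g: float, servings: int) -> float:
    return total_sugar_g / servings


print(registry.names())
print(registry.call("sugar_per_serving", total_sugar_g=48.0, servings=8))
```

A specialized agent would then be a matter of choosing which functions to register, with the model only ever seeing the tool names and arguments.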

The team hints at even bigger ambitions: scaling to thousands of tool calls per session and building "lifelong learning" versions with million-token context windows.

Key Points:

- 🧠 New learning paradigm: Interaction beats parameter-stacking for AI growth

- 🛠️ Practical powerhouse: Handles complex workflows autonomously

- 🔓 Democratized access: Open-source model available now

- ⏭️ Future-ready: Architecture designed for continuous scaling