Sakana AI's Tiny Plugin Could Revolutionize How AI Handles Massive Documents

Sakana AI Cracks the Code on AI Memory Limitations

Imagine feeding War and Peace to an AI model in less time than it takes to sneeze. That's essentially what Sakana AI's new technology achieves. The Tokyo startup's breakthrough could finally solve one of artificial intelligence's most persistent headaches: how to handle massive documents without breaking the bank or slowing to a crawl.

The Memory Dilemma Solved

For years, developers faced an impossible choice when working with large documents:

- Option A: Jam everything into the context window and watch response times balloon while memory usage soars

- Option B: Spend thousands fine-tuning specialized models for each new task

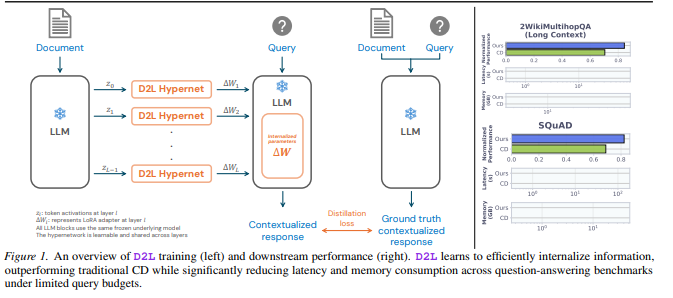

Sakana's solution? A clever pre-training approach that generates ultra-lightweight plugins called LoRAs (short for Low-Rank Adaptation). A LoRA is a small set of extra weights layered on top of a frozen model. These tiny add-ons - some smaller than your average smartphone photo - give existing models new capabilities without expensive retraining.
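To make the size claim concrete, here is a minimal sketch of the low-rank idea behind LoRA in general: instead of updating a full weight matrix W, an adapter stores two thin matrices, B and A, and adds their product to W. The function names, the 4096-wide layer, and the rank of 8 are illustrative assumptions, not details of Sakana's system.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

def apply_lora(w, a, b, scale=1.0):
    """Return W + scale * (B @ A). The frozen matrix W is not modified."""
    delta = matmul(b, a)  # shape (d_out x d_in), same as W
    return [[wij + scale * dij for wij, dij in zip(w_row, d_row)]
            for w_row, d_row in zip(w, delta)]

def lora_param_count(d_out, d_in, rank):
    """Parameters stored by the adapter: B is d_out x rank, A is rank x d_in."""
    return rank * (d_out + d_in)

# Hypothetical layer size and rank, chosen only for illustration.
full = 4096 * 4096
adapter = lora_param_count(4096, 4096, rank=8)
print(f"full matrix: {full:,} params, adapter: {adapter:,} params")
```

Under these assumed sizes the adapter holds 65,536 values against roughly 16.8 million in the full matrix, which is why an adapter can be a few megabytes while the base model runs to gigabytes.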

Doc-to-LoRA: Shrinking Gigabytes to Megabytes

The star of Sakana's show is Doc-to-LoRA (D2L), which performs what can only be described as digital alchemy:

- Memory Miracle: Processes a 100,000-word document using just 50MB of VRAM instead of the usual 12GB+

- Speed Demon: Completes in under a second what traditionally took nearly two minutes

- Capacity Boost: Handles texts four times longer than standard model limits while maintaining impressive accuracy
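A rough back-of-the-envelope calculation shows why the gap between the two approaches can be so wide. Keeping a long document in context means caching keys and values for every token, so memory grows with document length, while a LoRA adapter has a fixed size. The sketch below assumes a hypothetical 32-layer model. The layer counts, head sizes, rank, and token count are illustrative and do not come from Sakana AI's measurements.

```python
def kv_cache_bytes(tokens, layers, kv_heads, head_dim, bytes_per_value=2):
    """Bytes needed to cache keys and values for every token in the context."""
    return 2 * layers * kv_heads * head_dim * tokens * bytes_per_value

def lora_bytes(layers, matrices_per_layer, d_model, rank, bytes_per_value=2):
    """Bytes for LoRA factors A and B on square d_model x d_model matrices."""
    params = layers * matrices_per_layer * rank * (d_model + d_model)
    return params * bytes_per_value

# Hypothetical configuration; none of these numbers come from Sakana AI.
cache = kv_cache_bytes(tokens=128_000, layers=32, kv_heads=8, head_dim=128)
adapter = lora_bytes(layers=32, matrices_per_layer=2, d_model=4096, rank=8)
print(f"KV cache: {cache / 1e9:.1f} GB")
print(f"Adapter:  {adapter / 1e6:.1f} MB")
```

With these assumed settings the cache comes to about 16.8 GB and the adapter to about 8.4 MB. The exact figures depend entirely on the model and adapter configuration, but the difference of several orders of magnitude is the same kind of gap the reported numbers describe.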

"It's like giving your model photographic memory," explains one researcher familiar with the technology. "Except instead of remembering everything verbatim, it extracts and stores only the most useful patterns."

Text-to-LoRA: Plain English Power-Ups

The companion Text-to-LoRA (T2L) system lets users customize AI behavior using everyday language. Want your model better at math competitions? Just tell it "help me solve complex math problems" and T2L generates a specialized performance booster.
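One way to picture this is as a generator network that takes an embedding of the task description and outputs the adapter weights directly. The toy sketch below assumes that hypernetwork-style design. The hashed bag-of-words `embed` function, the `ToyHyperNetwork` class, and all sizes are hypothetical stand-ins, and the weights are random rather than trained, so the output only demonstrates the data flow, not a useful adapter.

```python
import hashlib
import random

EMBED_DIM = 16  # hypothetical size of the task-description embedding
D_MODEL = 8     # hypothetical width of the layer being adapted
RANK = 2        # hypothetical LoRA rank

def embed(text):
    """Toy stand-in for a real text encoder: a hashed bag of words."""
    vec = [0.0] * EMBED_DIM
    for word in text.lower().split():
        idx = int(hashlib.sha256(word.encode()).hexdigest(), 16) % EMBED_DIM
        vec[idx] += 1.0
    return vec

class ToyHyperNetwork:
    """A single linear layer whose output is the flattened LoRA factors A and B."""

    def __init__(self, seed=0):
        rng = random.Random(seed)
        n_out = 2 * RANK * D_MODEL  # entries of A (RANK x D_MODEL) plus B (D_MODEL x RANK)
        self.weights = [[rng.gauss(0.0, 0.1) for _ in range(EMBED_DIM)]
                        for _ in range(n_out)]

    def generate(self, text):
        """Map a plain-language task description to LoRA factors (A, B)."""
        e = embed(text)
        flat = [sum(w * x for w, x in zip(row, e)) for row in self.weights]
        split = RANK * D_MODEL
        a = [flat[i * D_MODEL:(i + 1) * D_MODEL] for i in range(RANK)]
        b = [flat[split + i * RANK:split + (i + 1) * RANK] for i in range(D_MODEL)]
        return a, b

a, b = ToyHyperNetwork().generate("help me solve complex math problems")
print(len(a), len(a[0]), len(b), len(b[0]))
```

The point of the design is that producing an adapter costs one forward pass through the generator, with no gradient-based fine-tuning at request time.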

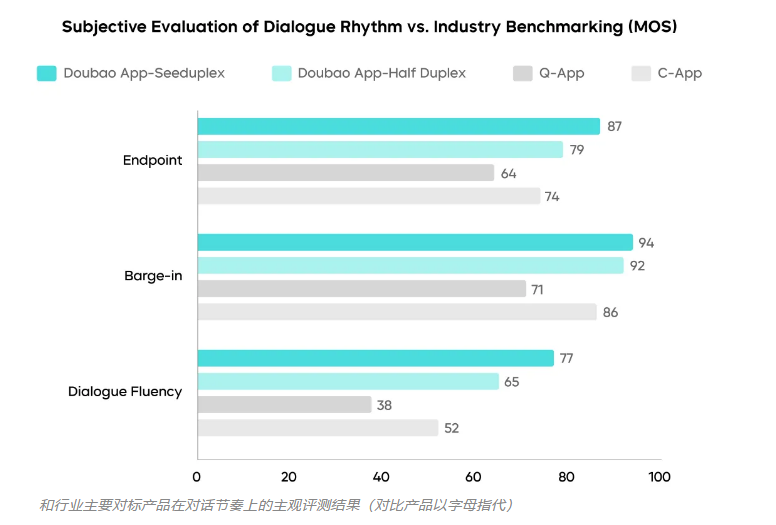

Surprisingly, these automatically generated plugins sometimes outperform purpose-built models. In testing, T2L-enhanced systems solved logic puzzles more accurately than dedicated math AIs.

Unexpected Bonus: Teaching Text Models to 'See'

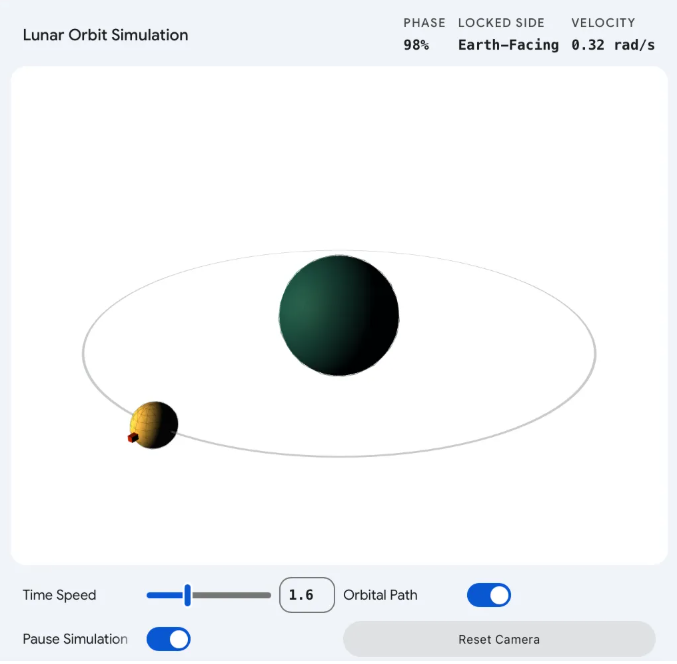

Perhaps most astonishing is D2L's accidental superpower - cross-modal learning. Researchers discovered they could trick pure text models into recognizing images by mapping visual data into LoRA parameters. The result? A language model that had never seen pictures before suddenly classified images with 75% accuracy.

This happy accident suggests LoRA technology might bridge gaps between different types of AI systems, potentially paving the way for more versatile artificial intelligence.

If the results hold up outside the lab, the practical implications are broad:

- Small businesses could afford customized AI assistants

- Researchers could rapidly prototype specialized models

- Consumers might someday personalize their chatbots as easily as installing smartphone apps

The era where only tech giants could afford tailored AI may be ending.