Google Unveils Project Astra Prototype Glasses with AR and AI Integration

Google has introduced Project Astra, a prototype of augmented reality (AR) glasses developed by its DeepMind team. The announcement, made on Wednesday, is part of the company's effort to merge artificial intelligence (AI) and AR technologies, with the two working together in real-time applications.

Prototype Glasses Powered by Android XR

The glasses run on Android XR, a new platform for visual computing, and represent Google's push toward wearable devices such as glasses and headsets with advanced AI capabilities. Google has clarified that the glasses are still in the prototype phase, with no official product release or launch timeline confirmed.

Demonstration of the translation feature on Google's prototype glasses

One of the key features demonstrated during the unveiling is real-time translation of spoken language, which could be useful for travelers and in multilingual environments. The glasses can also remember locations and read text on their own, so users do not need to reach for a smartphone. Google emphasized that these AI-powered features are just the beginning of what could be possible when AR and AI work in tandem.

Future Vision for AR Glasses

Google's ultimate goal is to create a more refined version of the glasses that is not only functional but also stylish and comfortable. The future model will be designed to integrate seamlessly with Android devices, providing essential information through simple touch gestures. Features like turn-by-turn directions, translations, and message summaries are expected to be easily accessible, offering users a more intuitive way to interact with their environment.

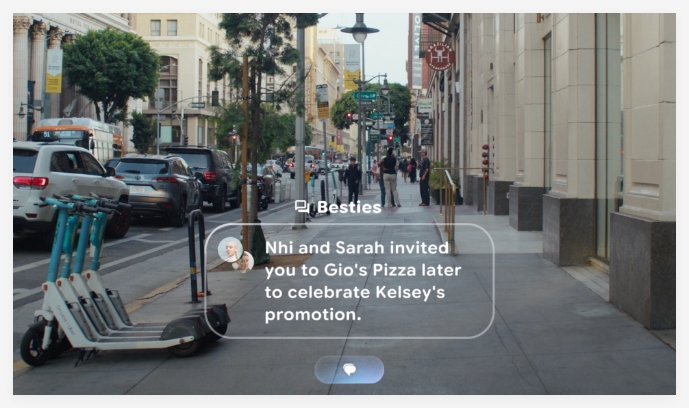

Demonstration of Google's prototype glasses

Project Astra is a notable entry in the AR glasses market, especially when compared to current offerings from companies like Meta and Snap. Its main distinction is multimodal AI: the glasses can process environmental imagery and voice input simultaneously. This allows the AI system to assist with a variety of real-world tasks, such as object recognition and location-based suggestions.

Though Project Astra's capabilities have so far been limited to mobile applications, their potential for future use in AR glasses is considerable. Google is betting that stronger AI integration will let its technology outpace current AR glasses offerings.

The Multimodal Advantage

What sets Google apart from other AR glasses manufacturers is its emphasis on multimodal AI. Because the AI within the glasses handles visual and auditory inputs together, it can help users complete complex tasks in real time, for example by responding to a spoken question about something the wearer is looking at. Google presents this combination as the basis for a richer, more interactive experience than other products on the market offer.

While still in its early stages, the technology showcased in the Project Astra prototype could lead to significant advances in augmented reality glasses. Google's continued work on AI and AR integration could redefine how people interact with both their devices and the world around them.

Key Points

- Google has unveiled Project Astra, an AR glasses prototype powered by AI.

- The glasses feature real-time translation, location memory, and text-reading capabilities.

- Powered by Android XR, the glasses aim to create a seamless AR experience with Android devices.

- Google’s focus on multimodal AI sets the glasses apart from competitors like Meta and Snap.

- While still in prototype form, Project Astra showcases the future potential of AR glasses.