DeepSeek's Math AI Wins Gold at Olympiad, Goes Open Source

DeepSeek-Math-V2 Achieves Breakthrough in Mathematical AI

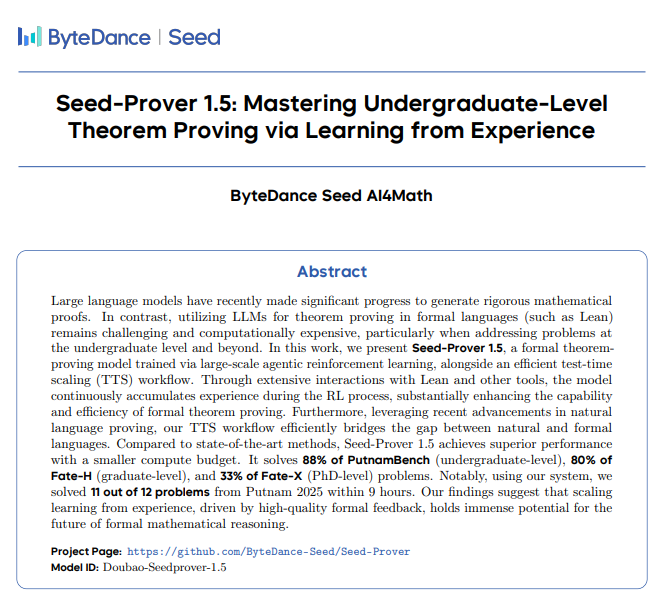

In a landmark achievement for artificial intelligence, DeepSeek-Math-V2 has become the first open-source model to demonstrate gold-medal-level performance at the prestigious International Mathematical Olympiad (IMO). Released today under the Apache 2.0 license, this 685-billion-parameter mixture-of-experts model marks a major advance in mathematical reasoning.

How It Works: Thinking Like a Mathematician

The secret sauce? A "generate-and-validate" mechanism that works much like human mathematical intuition. Unlike conventional AI that makes single-pass attempts, DeepSeek-Math-V2 employs an internal verifier that scrutinizes each proof step in real time. When it spots flawed logic or lucky guesses (something even human mathematicians occasionally produce), the system automatically refines its approach.
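The loop described above can be sketched in a few lines of Python. This is only an illustration of a generic generate-verify-refine cycle, not DeepSeek's implementation: `refine_until_verified`, `Review`, the acceptance threshold, the round limit, and the toy generator and verifier are all hypothetical stand-ins for model calls.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Review:
    score: float        # verifier's confidence that the proof is sound, in [0, 1]
    issues: List[str]   # flaws the verifier pointed out


def refine_until_verified(
    problem: str,
    generate: Callable[[str, List[str]], str],
    verify: Callable[[str, str], Review],
    threshold: float = 0.9,   # assumed acceptance bar
    max_rounds: int = 4,      # assumed retry budget
) -> Tuple[str, Review]:
    """Draft a proof, have it checked, and redraft using the verifier's feedback."""
    feedback: List[str] = []
    best_proof, best_review = "", Review(score=-1.0, issues=[])
    for _ in range(max_rounds):
        proof = generate(problem, feedback)
        review = verify(problem, proof)
        if review.score > best_review.score:
            best_proof, best_review = proof, review
        if review.score >= threshold:
            break                     # verifier is satisfied, stop refining
        feedback = review.issues      # feed the flaws into the next draft
    return best_proof, best_review


# Toy stand-ins so the sketch runs without a model.
def toy_generate(problem: str, feedback: List[str]) -> str:
    if feedback:
        return "Let n = 2k + 1. Then n^2 = 2(2k^2 + 2k) + 1, which is odd."
    return "n^2 is odd because it works for n = 3."


def toy_verify(problem: str, proof: str) -> Review:
    if "n = 3" in proof:
        return Review(0.2, ["argues from a single example, not general n"])
    return Review(1.0, [])


proof, review = refine_until_verified(
    "Show that n^2 is odd whenever n is odd.", toy_generate, toy_verify
)
print(review.score, proof)
```

In the toy run, the first draft is a "lucky guess" from one example, the verifier rejects it, and the second draft argues for general n and is accepted.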

Competition Performance That Turns Heads

The numbers speak volumes:

- 2025 IMO: Solved 5 of 6 problems (83.3%), scoring 210/252 points, good for third place globally behind the US and South Korea teams

- 2024 China Mathematical Olympiad: Achieved gold medal standard

- Putnam Competition: Scored a near-perfect 118/120 with heavily scaled test-time compute (top human score in that contest: just 90 points)

On Google DeepMind's IMO-ProofBench, it scored 99% on the basic problems and a still-impressive 61.9% on the high-difficulty ones.
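For readers wondering where the 2025 IMO figures come from, the arithmetic can be checked as follows. The sketch assumes full marks on each solved problem, zero on the unsolved one, and that the 252-point total is a six-contestant team equivalent; the article does not state any of this, so treat it as one plausible reading.

```python
POINTS_PER_PROBLEM = 7   # standard IMO marking: each problem is worth 7 points
PROBLEMS = 6             # six problems per contest
TEAM_SIZE = 6            # a national team has at most six contestants

solved = 5               # from the article; full marks per solved problem is assumed

individual_score = solved * POINTS_PER_PROBLEM     # 35
individual_max = PROBLEMS * POINTS_PER_PROBLEM     # 42
team_equivalent = individual_score * TEAM_SIZE     # 210
team_max = individual_max * TEAM_SIZE              # 252

print(f"{individual_score}/{individual_max} -> "
      f"{team_equivalent}/{team_max} = {team_equivalent / team_max:.1%}")
# prints "35/42 -> 210/252 = 83.3%"
```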

Open Source Advantage

What sets DeepSeek-Math-V2 apart from closed systems like OpenAI's o1 or AlphaProof is its complete transparency. Researchers can download weights from Hugging Face today to:

- Reproduce results locally

- Audit the methodology

- Build upon this breakthrough

The training incorporated expert annotations of "pathological proofs" before transitioning to automated verification with up to 64 parallel reasoning paths.
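One way to picture verification over many parallel paths is to run independent checks of the same proof and combine their verdicts. The sketch below is an assumption-laden illustration: the article does not say how the paths are aggregated, so the accept-fraction rule, the 0.75 cutoff, and the names `parallel_verdict` and `toy_verify_once` are hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable


def parallel_verdict(
    proof: str,
    verify_once: Callable[[str, int], bool],
    n_paths: int = 64,       # the article mentions up to 64 parallel paths
    max_workers: int = 8,    # assumed worker count, unrelated to the model
) -> float:
    """Run n_paths independent checks and return the fraction that accept the proof."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        votes = list(pool.map(lambda seed: verify_once(proof, seed), range(n_paths)))
    return sum(votes) / n_paths


def toy_verify_once(proof: str, seed: int) -> bool:
    # Deterministic stand-in for a model call:
    # one path in eight "misreads" the proof and rejects it.
    return seed % 8 != 0


acceptance = parallel_verdict("Let n = 2k + 1. Then n^2 is odd.", toy_verify_once)
accepted = acceptance >= 0.75   # assumed cutoff for treating the proof as verified
print(acceptance, accepted)
```

Aggregating many independent checks makes a single mistaken verdict less likely to decide the outcome, which is the usual motivation for this kind of sampling.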

Practical Applications Beyond Competitions

The implications extend far beyond contest mathematics:

- Drug discovery: Verifying complex molecular interactions

- Cryptography: Developing and testing new encryption methods

- Formal verification: Ensuring software/hardware reliability

The model is available now on Hugging Face with full competition solutions published for peer review.

Key Points:

- First open-source AI to reach IMO gold standard

- Novel "generate-and-validate" mimics human proof refinement

- Outperformed most human teams in major math competitions

- Complete weights and training details publicly available

- Potential applications in high-stakes verification fields