Beijing Probes First AI-Generated False Ad Case

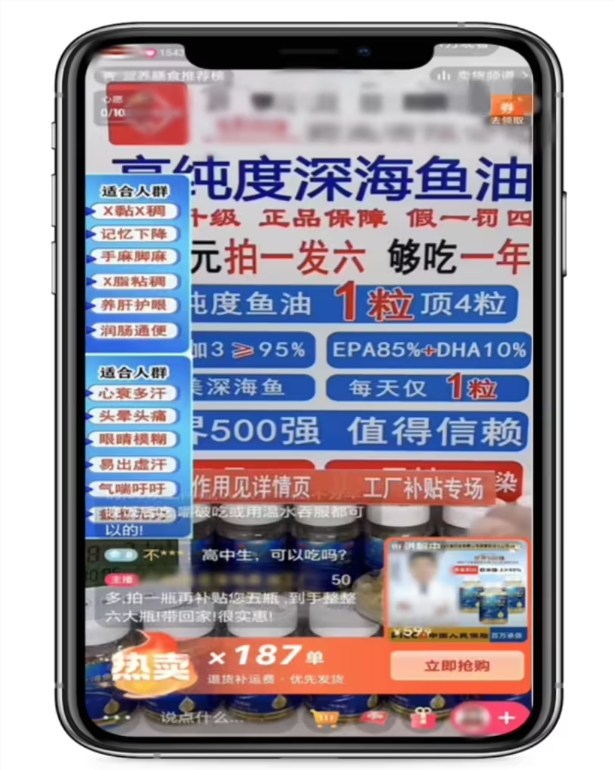

The Beijing Market Supervision Bureau has made regulatory history by investigating the city's first case of AI-generated false advertising. The case involves a company that used artificial intelligence to create deceptive marketing materials featuring impersonations of well-known television hosts.

The Deceptive Campaign

The offending advertisements promoted "Deep Sea DHA Fish Oil," an ordinary food product, while making unverified medical claims. According to investigators, the company:

- Used AI video editing to superimpose images of famous CCTV hosts

- Created synthetic voiceovers with fabricated endorsement content

- Published the misleading ads on its official video platform account

The advertisements falsely claimed the fish oil could treat health conditions including dizziness, headaches, and limb numbness. Such assertions violate China's Advertising Law.

Regulatory Response

The bureau issued administrative penalties against the company and emphasized:

"Ordinary food products cannot be advertised as having medical effects or treatment functions."

Authorities warned that public figures' images are frequently exploited for such scams and urged consumers to remain vigilant.

Consumer Protection Measures

The bureau provided these recommendations:

- Verify product claims through official channels

- Be skeptical of celebrity endorsements without verification

- Report suspicious ads via hotlines 12315 or 12345

Broader Implications

This case represents:

- A landmark enforcement action against AI-assisted deception

- A growing regulatory focus on abuses of emerging technology

- A commitment to maintaining market integrity amid technological change

The investigation sends a clear warning to marketers about improper use of AI technologies like deepfakes for commercial gain.

Key Points:

✅ First Beijing case penalizing AI-generated false advertising

⚠️ Violation: Marketing food as having medical benefits

📢 Consumers encouraged to report suspicious marketing

🔍 Authorities monitoring emerging tech abuses in advertising