Anthropic Debuts Claude AI for Life Sciences Research

Anthropic Enters Life Sciences With Specialized AI Tool

Anthropic has launched Claude for Life Sciences, an AI offering tailored to biomedical research and drug development workflows. The announcement marks Anthropic's first dedicated expansion into the life sciences sector.

Technical Foundations and Capabilities

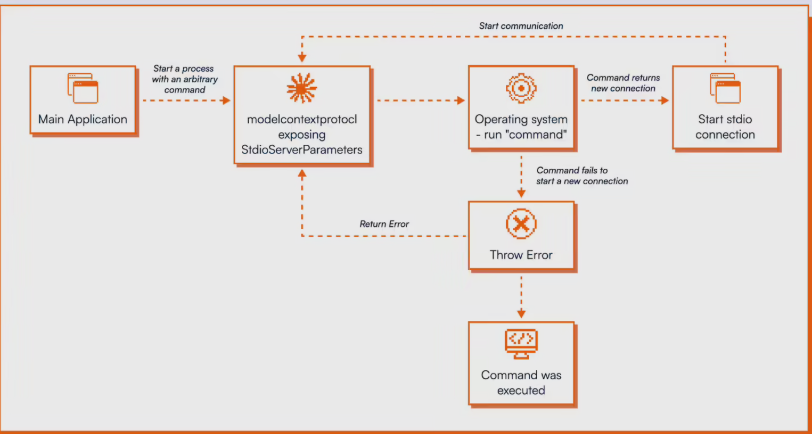

The new offering builds on Anthropic's Claude Sonnet 4.5 model, which scored 0.83 on life science tasks, above the human expert baseline of 0.79. Unlike general-purpose AI models, Claude for Life Sciences incorporates several specialized features:

- Scientific Platform Integration: Direct connectivity with Benchling, PubMed, 10x Genomics and Synapse.org enables seamless data import/analysis

- Agent Skills Framework: Pre-configured workflows automate complex protocols like single-cell RNA sequencing QC

- Regulatory Compliance: Designed with transparency features meeting healthcare industry requirements
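
To make the single-cell RNA sequencing QC step mentioned above more concrete, here is a minimal sketch of the kind of per-cell filtering such a workflow typically automates. It is an illustration only, not Anthropic's Agent Skills implementation: the `CellQC` record, the function names, and the thresholds (at least 200 detected genes, at most 20% mitochondrial counts) are assumptions chosen for the example, and real pipelines tune these cutoffs per dataset.

```python
from dataclasses import dataclass


@dataclass
class CellQC:
    """Per-cell summary metrics (hypothetical record for this sketch)."""
    barcode: str
    n_genes: int       # number of genes detected in the cell
    total_counts: int  # total UMI counts for the cell
    mito_counts: int   # counts assigned to mitochondrial genes


def passes_qc(cell, min_genes=200, max_mito_frac=0.2):
    """Return True if the cell clears both illustrative thresholds."""
    if cell.total_counts == 0:
        return False  # empty droplet: nothing to evaluate
    mito_frac = cell.mito_counts / cell.total_counts
    return cell.n_genes >= min_genes and mito_frac <= max_mito_frac


def filter_cells(cells, **thresholds):
    """Keep only the cells that pass QC."""
    return [c for c in cells if passes_qc(c, **thresholds)]


cells = [
    CellQC("AAAC", n_genes=1500, total_counts=6000, mito_counts=300),   # 5% mito
    CellQC("AAAG", n_genes=120, total_counts=400, mito_counts=20),      # too few genes
    CellQC("AAAT", n_genes=900, total_counts=3000, mito_counts=1200),   # 40% mito
]
kept = filter_cells(cells)
print([c.barcode for c in kept])  # prints ['AAAC']
```

Only the first cell survives: the second has too few detected genes and the third has a high mitochondrial fraction, a common sign of a stressed or dying cell.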

"We observed researchers already adapting Claude for scientific tasks," explained Eric Kauderer-Abrams, Anthropic's Head of Biology and Life Sciences. "Now we're formalizing comprehensive end-to-end support."

Industry Impact and Partnerships

The launch accompanies strategic collaborations with major players:

- 10x Genomics integration enables natural language processing of single-cell datasets

- Implementation partners including Deloitte and Accenture facilitate enterprise adoption

- Early adopters like Sanofi report widespread daily usage among researchers

The tool aims to compress months-long processes, from literature review to regulatory submissions, into minutes through AI automation. Novo Nordisk, for example, reportedly cut clinical document preparation from 10 weeks to 10 minutes.

Competitive Landscape and Future Roadmap

The move positions Anthropic against Google DeepMind's AlphaFold 3 (serving 2M+ researchers), though Anthropic focuses on workflow integration rather than pure discovery. The company emphasizes ongoing model iterations and expansion of industry-specific capabilities while prioritizing safety protocols.

The "AI for Science" program offers free API credits for high-impact research projects through cloud platforms AWS and Google Cloud.

Key Points:

- Anthropic's first dedicated offering for the life sciences sector

- Aims to reduce multi-month research workflows to minutes

- Integrates with major scientific platforms without data export

- Backed by partnerships with 10x Genomics, Deloitte and Accenture

- Available now via AWS Marketplace (Google Cloud coming soon)